The Intel SSD DC P4510 SSD Review Part 1: Virtual RAID On CPU (VROC) Scalability

by Billy Tallis on February 15, 2018 3:00 PM EST

Posted in: SSDs, Storage, Intel, RAID, Enterprise SSDs, NVMe, U.2, Purley, Skylake-SP, VROC

Test System

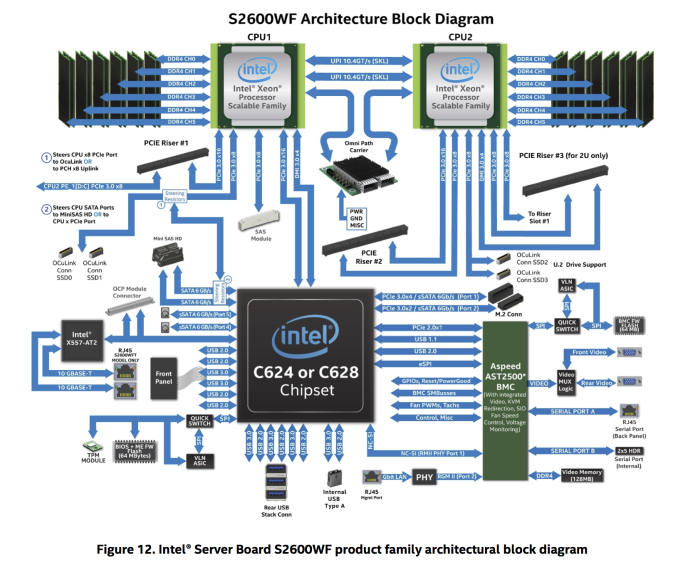

For this review, we're using the same system Intel provided for our Optane SSD DC P4800X review. This is a 2U server based on Intel's current Xeon Scalable platform codenamed Purley. The system includes two Xeon Gold 6154 18-core Skylake-SP processors, and 16GB DDR4-2666 DIMMs on all twelve memory channels for a total of 192GB of DRAM.

Each of the two processors provides 48 PCI Express lanes plus a four-lane DMI link. The allocation of these lanes is complicated. Most of the PCIe lanes from CPU1 are dedicated to specific purposes: the x4 DMI plus another x16 link go to the C624 chipset, and there's an x8 link to a connector for an optional SAS controller. This leaves CPU2 providing the PCIe lanes for most of the expansion slots.

| Enterprise SSD Test System | |
| --- | --- |
| System Model | Intel Server R2208WFTZS |
| CPU | 2x Intel Xeon Gold 6154 (18C, 3.0GHz) |
| Motherboard | Intel S2600WFT |
| Chipset | Intel C624 |
| Memory | 192GB total, Micron DDR4-2666 16GB modules |
| Software | CentOS Linux 7.4, kernel 3.10.0-693.17.1; fio version 3.3 |

Thanks to StarTech for providing a RK2236BKF 22U rack cabinet.

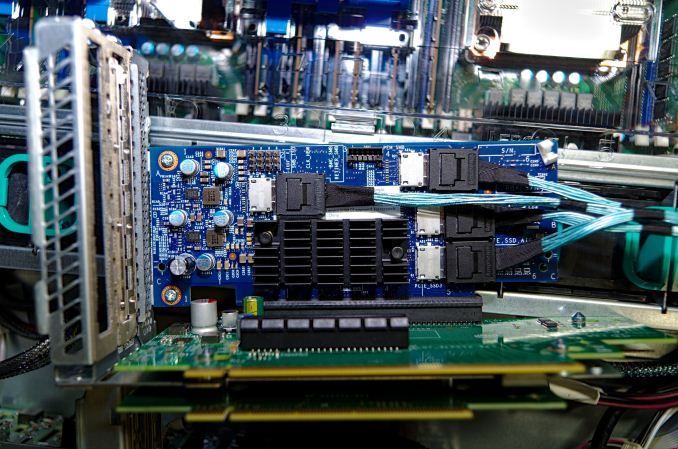

As originally configured, this server was set up to provide as many PCIe slots as possible: seven x8 slots and one x4 slot. To support U.2 PCIe SSDs in the drive cages at the front of the server, a PCIe switch card was included that uses a Microsemi PM8533 switch to provide eight OCuLink connectors for PCIe x4 cables. This card only has a PCIe x8 uplink, so it is a potential bottleneck for arrays of more than two U.2 SSDs. Without such cards to multiply PCIe lanes, providing PCIe connectivity to all 16 hot-swap bays would require almost all of the lanes routed to PCIe expansion card slots. The Xeon Scalable processors provide a lot of PCIe lanes, but with NVMe SSDs it is still easy to run out.
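The x8 uplink bottleneck can be put in rough numbers. The sketch below assumes PCIe 3.0 signalling (8 GT/s per lane with 128b/130b encoding), which is what Skylake-SP provides, and it ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function names are our own and purely illustrative.

```python
# PCIe 3.0 signalling: 8 GT/s per lane, 128b/130b line encoding.
TRANSFERS_PER_SECOND = 8e9
ENCODING_EFFICIENCY = 128 / 130


def link_gb_per_s(lanes: int) -> float:
    """Raw one-direction bandwidth of a PCIe 3.0 link in GB/s, before protocol overhead."""
    return lanes * TRANSFERS_PER_SECOND * ENCODING_EFFICIENCY / 8 / 1e9


def oversubscription(drives: int, lanes_per_drive: int = 4, uplink_lanes: int = 8) -> float:
    """Ratio of downstream drive lanes to uplink lanes; above 1.0 the uplink can bottleneck."""
    return drives * lanes_per_drive / uplink_lanes


if __name__ == "__main__":
    print(f"x8 uplink: {link_gb_per_s(8):.2f} GB/s raw")
    print(f"x4 drive link: {link_gb_per_s(4):.2f} GB/s raw")
    for drives in (1, 2, 4, 8):
        print(f"{drives} drive(s): {oversubscription(drives):.1f}x oversubscribed")
```

Two x4 drives exactly fill the x8 uplink, which is why the switch card only becomes a potential limit for arrays of more than two drives.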

To provide a dedicated x4 link to each of our P4510 SSDs, a few components had to be swapped out. First, one of the riser cards providing three x8 slots was exchanged for a riser with one x16 slot and one x8 slot. The PCIe switch card was exchanged for a PCIe x16 retimer card with four OCuLink ports. A PCIe retimer is essentially a pair of back-to-back PCIe PHYs; its purpose is to reconstruct and retransmit the PCIe signals, ensuring that the signal remains clean enough for full-speed operation over the meter-long path from the CPU to the SSD, passing through six connectors, four circuit boards and 70cm of cable.

When using a riser with a PCIe x16 slot, this server supports configurable lane bifurcation on that slot, so that it can operate as a combination of x4 or x8 links instead. PCIe slot bifurcation support is required to use a single slot to drive four SSDs without an intervening PCIe switch. Bifurcation is generally not supported on consumer platforms, though enthusiast consumer platforms like Skylake-X and AMD's Threadripper are starting to support it, albeit not on every motherboard. In enthusiast systems, it is expected that consumers will be using M.2 SSDs rather than 2.5" U.2 drives, so several vendors are now selling quad-M.2 adapter cards. In a future review, we will be testing some of those adapters with this system running our client SSD test suite.
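To make the idea of splitting a slot concrete, the sketch below enumerates ways an x16 slot could be divided into x4 and x8 links. It assumes a constraint that is common on real platforms but not stated above: each link must start at a lane offset that is a multiple of its own width. The function is hypothetical and does not reflect the exact options any particular firmware exposes.

```python
def bifurcations(total=16, widths=(4, 8, 16), offset=0):
    """List the ways to split `total` lanes into naturally aligned links.

    Assumption: a link of width w may only start at a lane offset divisible by w.
    """
    if offset == total:
        return [[]]
    layouts = []
    for width in widths:
        if offset % width == 0 and offset + width <= total:
            for rest in bifurcations(total, widths, offset + width):
                layouts.append([width] + rest)
    return layouts


if __name__ == "__main__":
    for layout in bifurcations():
        print("x" + "x".join(str(w) for w in layout))
```

Under that assumption, the four-SSD case discussed here corresponds to the `[4, 4, 4, 4]` layout, while a layout such as x4 followed by x8 followed by x4 is excluded because the x8 link would be misaligned.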

The x16 riser and retimer board arrived a few days after the P4510 SSDs, so we have some results from testing four-drive RAID with the PCIe switch card and its x8 uplink bottleneck. Testing of four-drive RAID without the bottleneck is ongoing.

Setting Up VROC

Intel's Virtual RAID on CPU (VROC) feature is a software RAID system that allows for bootable NVMe RAID arrays. There are several restrictions, the most notable being the requirement of a hardware dongle to unlock some tiers of VROC functionality. Most Skylake-SP and Skylake-X systems have the small header on the motherboard for installing a VROC key, but it has not been possible to buy the keys themselves directly. When VROC was first announced, Intel gave the impression that it would be available to enthusiast consumers who were willing to pay a few hundred dollars extra. Since then, Intel has retreated to the position of treating VROC as a workstation and server feature that is bundled with OEM-built systems. Intel isn't actively trying to prevent consumers from using VROC on X299 motherboards, but until VROC keys can be purchased at retail, not much can be done.

There are multiple VROC key SKUs, enabling different feature levels. Intel's information about these has been inconsistent. We have a VROC Premium key, which enables everything, including the creation of RAID-5 arrays and the use of non-Intel SSDs. There is also a VROC Standard key, which enables RAID-0/1/10 but not RAID-5. More recent documents from Intel also list a VROC Intel SSD Only key, which enables RAID-0/1/10 and RAID-5, but only supports Intel's data center and professional SSD models. Without a VROC hardware key, Intel's RAID solution cannot be used, but some of the underlying features VROC relies on are still available:

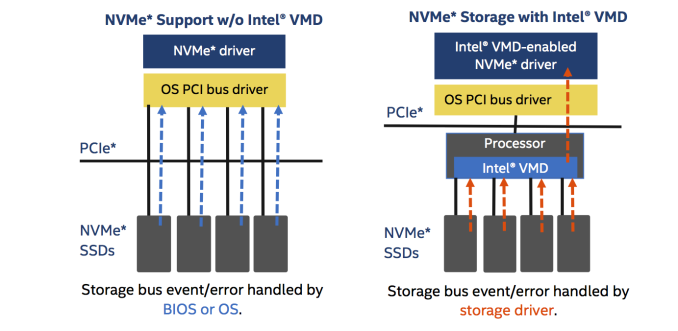
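The key tiers described above can be summarized as a small lookup table. This is only a restatement of the text in code form, not Intel's licensing logic: the dictionary keys and the `can_create` helper are our own names, and whether the Standard key supports non-Intel SSDs is left as unknown (`None`) because it is not stated here. The Intel SSD Only key is further limited to specific Intel model lines, which this sketch does not model.

```python
from typing import Optional

# RAID levels and third-party SSD support per key tier, as described in the text.
VROC_KEYS = {
    "none": {"levels": set(), "non_intel": False},
    "standard": {"levels": {0, 1, 10}, "non_intel": None},  # not stated in the text
    "intel_ssd_only": {"levels": {0, 1, 5, 10}, "non_intel": False},
    "premium": {"levels": {0, 1, 5, 10}, "non_intel": True},
}


def can_create(key: str, raid_level: int, intel_ssds: bool = True) -> Optional[bool]:
    """Return True/False if the text settles the question, or None if it does not."""
    sku = VROC_KEYS[key]
    if raid_level not in sku["levels"]:
        return False
    if intel_ssds:
        return True
    return sku["non_intel"]


if __name__ == "__main__":
    print(can_create("premium", 5, intel_ssds=False))
    print(can_create("standard", 5))
    print(can_create("standard", 0, intel_ssds=False))
```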

Intel Volume Management Device

When 2.5" U.2 NVMe SSDs first started showing up in datacenters, they revealed a widespread immaturity of platform support for features like PCIe hotplug. Proper hotplug and hot-swap of PCIe SSDs required careful coordination between the OS and the motherboard firmware, often through vendor-specific mechanisms. Intel tackled that problem with the Volume Management Device (VMD) feature in Skylake-SP CPUs. Enabling VMD on any of the CPU's PCIe ports prevents the motherboard from detecting devices attached to that port at boot time. This shifts all responsibility for device enumeration and management to the OS. With a VMD-aware OS and NVMe driver, SSDs and PCIe switches connected through ports with VMD enabled will be enumerated in an entirely separate PCIe domain, appearing behind a virtual PCIe root complex.
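The separate PCIe domain is visible in device addresses. As a rough illustration, the sketch below parses addresses in the `domain:bus:device.function` form and flags those whose domain number is above 0xFFFF. That threshold is an assumption based on how the Linux VMD driver is understood to number its domains (starting at 0x10000); the sample addresses are made up and the helper names are ours.

```python
def parse_pci_address(address: str):
    """Split 'dddd:bb:dd.f' into integer (domain, bus, device, function)."""
    domain, bus, dev_fn = address.split(":")
    device, function = dev_fn.split(".")
    return int(domain, 16), int(bus, 16), int(device, 16), int(function, 16)


def looks_like_vmd_domain(address: str) -> bool:
    """Assumption: Linux places devices behind VMD in domains above 0xFFFF."""
    domain, _bus, _device, _function = parse_pci_address(address)
    return domain > 0xFFFF


if __name__ == "__main__":
    # Hypothetical addresses for illustration only.
    for addr in ("0000:3b:00.0", "10000:01:00.0"):
        print(addr, "behind VMD" if looks_like_vmd_domain(addr) else "ordinary domain")
```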

The other major feature of VMD sounds trivial, but is extremely important for datacenters: LED management. 2.5" drives do not have their own status LEDs and instead rely on the hot-swap backplane to implement the indicators necessary to identify activity or a failed device. In the SAS/SCSI world the side channels and protocols for managing this are thoroughly standardized and taken for granted, but the NVMe specifications didn't start addressing this until the addition of the NVMe Management Interface in late 2015. Intel VMD allows systems to use a VMD-specific LED management driver to provide the same standard LED diagnostic blink patterns that a SAS RAID card uses.

PCIe Port Bifurcation

On our test system, PCIe port bifurcation is automatically enabled for x16 slots, but not for x8 slots. No bifurcation settings had to be changed to get VROC working with the four-port PCIe retimer board, but when installing an ASUS Hyper M.2 X16 card with four Samsung 960 PROs, the slot's bifurcation setting had to be manually configured to split the port into four x4 links. This behavior is likely to vary between systems.

Configuring the Array

Once a VROC key is installed and SSDs are installed in VMD-enabled PCIe ports, the motherboard firmware component of VROC can be used. This is a UEFI driver that implements software RAID functionality, plus a configuration utility for creating and managing arrays. On our test system, this firmware utility can be found in the section for UEFI Option ROMs, right below the settings for configuring network booting.

Once an array has been set up, it can be used by any OS that has VROC drivers. All the necessary components are available out of the box from many Linux distributions, so we were able to use CentOS 7.4 without installing any extra software. (Intel provides some extra utilities for management under Linux, but they aren't necessary if you use the UEFI utility to create arrays.) On Windows, Intel's VROC drivers need to be installed, or loaded during Windows Setup if the operating system will be booting from a VROC array.
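On Linux, arrays created this way are, to our understanding, handled by the kernel's md driver using Intel's IMSM metadata format, so they show up in `/proc/mdstat` like any other software RAID. The sketch below parses that format to list arrays, RAID levels and member drives. The sample text is illustrative rather than captured from our test system, and the parser is deliberately minimal: it does not handle every state string the kernel can print.

```python
import re

# Illustrative sample only; device names and sizes are made up.
SAMPLE_MDSTAT = """\
Personalities : [raid0] [raid1] [raid10]
md126 : active raid0 nvme0n1[0] nvme1n1[1] nvme2n1[2] nvme3n1[3]
      7814047744 blocks super external:/md127/0 128k chunks

md127 : inactive nvme0n1[0](S) nvme1n1[1](S) nvme2n1[2](S) nvme3n1[3](S)
      4420 blocks super external:imsm

unused devices: <none>
"""


def parse_mdstat(text: str) -> dict:
    """Map each md device name to its state, RAID level (if any) and member devices."""
    arrays = {}
    for line in text.splitlines():
        match = re.match(r"^(md\d+) : (\w+)\s*(.*)$", line)
        if not match:
            continue
        name, state, rest = match.groups()
        tokens = rest.split()
        # Container devices list members directly and have no RAID level token.
        level = tokens[0] if tokens and tokens[0].startswith("raid") else None
        members = [tok.split("[")[0] for tok in tokens if "[" in tok]
        arrays[name] = {"state": state, "level": level, "members": members}
    return arrays


if __name__ == "__main__":
    for name, info in parse_mdstat(SAMPLE_MDSTAT).items():
        print(name, info)
```

On a live system the same function could be fed the contents of `/proc/mdstat` instead of the sample string.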

Configurations Tested

With four 2TB Intel SSD P4510 drives at our disposal and one 8TB drive to compare against, this review includes results from the following VROC configurations:

- Four 2TB P4510s in RAID-0

- Four 2TB P4510s in RAID-10

- Two 2TB P4510s in RAID-0

- A single 2TB P4510

- A single 8TB P4510

There are also partial results from four-drive RAID-0, RAID-10 and RAID-5 from before the x16 riser and retimer board arrived. These configurations had all four 2TB drives connected through a PCIe switch with a PCIe x8 uplink. Testing of four drives in RAID-5 without this bottleneck is in progress.
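For reference, the usable capacity of each array configuration follows from standard RAID arithmetic. The sketch below uses nominal drive capacities and ignores metadata and formatting overhead; the `usable_tb` helper is our own illustration.

```python
def usable_tb(level: str, drives: int, drive_tb: float = 2.0) -> float:
    """Nominal usable capacity in TB for an array of identical drives."""
    if level == "raid0":
        return drives * drive_tb  # striping: all capacity is usable
    if level == "raid1":
        return drive_tb  # every drive holds a full copy
    if level == "raid10":
        if drives % 2:
            raise ValueError("RAID-10 needs an even number of drives")
        return drives * drive_tb / 2  # striped mirrors: half the capacity
    if level == "raid5":
        if drives < 3:
            raise ValueError("RAID-5 needs at least three drives")
        return (drives - 1) * drive_tb  # one drive's worth of parity
    raise ValueError(f"unsupported RAID level: {level}")


if __name__ == "__main__":
    for level, drives in (("raid0", 4), ("raid10", 4), ("raid5", 4), ("raid0", 2)):
        print(f"{drives}x 2TB {level}: {usable_tb(level, drives):.0f} TB usable")
```

The four-drive RAID-0 array works out to the same nominal 8TB as the single 8TB drive it is compared against.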

21 Comments


MrSpadge - Friday, February 16, 2018 - link

> On a separate note - the notion of paying Intel extra $$$ just to enable functions you've already purchased (by virtue of them being embedded on the motherboard and the CPU) - I just can't get around it appearing as nothing but a giant ripoff.

We take it for granted that any hardware features are exposed to us via free software. However, by that argument one wouldn't need to pay for any software, as the hardware to enable it (i.e. an x86 CPU) is already there and purchased (albeit probably from a different vendor).

And on the other hand: it's apparently OK for Intel and the others to sell the same piece of silicon at different speed grades and configurations for different prices. Here you could also argue that "the hardware is already there" (assuming no defects, as is often the case).

I agree on the antitrust issue of cheaper prices for Intel drives.

boeush - Friday, February 16, 2018 - link

My point is that when you buy these CPUs and motherboards, you automatically pay for the sunk R&D and production costs of VROC integration - it's included in the price of the hardware. It has to be - if VROC is a dud and nobody actually opts for it, Intel has to be sure to recoup its costs regardless.

That means you've already paid for VROC once - but you now have to pay twice to actually use it!

Moreover, the extra complexity involved with this hardware key-based scheme implies that the feature is necessarily more costly (in terms of sunk R&D as well as BOM) than it could have been otherwise. It's like Intel deliberately and intentionally set out to gouge its customers from the early concept stage onward. Very bad optics...

nivedita - Monday, February 19, 2018 - link

Why would you be happier if they actually took the trouble to remove the silicon from your cpu?

levizx - Friday, February 16, 2018 - link

> However, by that argument one wouldn't need to pay for any software, as the hardware to enable it

That's a ridiculous claim, the same vendor (SoC vendor, Intel in this case) does NOT produce "any software" (MSFT etc). VROC technology is ALREADY embedded in the hardware/firmware.

BenJeremy - Friday, February 16, 2018 - link

Unless things have changed in the last 3 months, VROC is all but useless unless you stick with Intel-branded storage options. My BIL bought a fancy new Gigabyte Aorus Gaming 7 X299 motherboard when they came out, then waited months to finally get a VROC key. It still didn't allow him to make a bootable RAID-0 array with the 3 Samsung NVMe sticks. We do know that, in theory, the key is not needed to make such a setup work, as a leaked version of Intel's RST allowed a bootable RAID-0 array in "30-day trial mode".

We need to stop falling for Intel's nonsense. AMD's Threadripper is turning in better numbers in RAID-0 configurations, without all the nonsense of plugging in a hardware DRM dongle.

HStewart - Friday, February 16, 2018 - link

"We need to stop falling for Intel's nonsense. AMD's Threadripper is turning in better numbers in RAID-0 configurations, without all the nonsense of plugging in a hardware DRM dongle."Please stop the nonsense of fact less claims about AMD and provide actual proof about performance numbers. Keep in mind this SSD is an enterprise product designed for CPU's like Xeon not game machines.

peevee - Friday, February 16, 2018 - link

Like it.

But idle power of 5W is kind of insane, isn't it?

Billy Tallis - Friday, February 16, 2018 - link

Enterprise drives don't try for low idle power because they don't want the huge wake-up latencies to demolish their QoS ratings.

peevee - Friday, February 16, 2018 - link

4-drive RAID0 only overcomes 2-drive RAID0 by QD 512. What kind of a server can run 612 threads at the same time? And what kind of server will you need for a full 32 Ruler 1U backend (which would require 4192 threads to take advantage of all that power)?

kingpotnoodle - Sunday, February 18, 2018 - link

One use could be shared storage for I/O intensive virtual environments, attached to multiple hypervisor nodes, each with multiple 40Gb+ NICs for the storage network.