Intel Xeon 7460: Six Cores to Bulldoze Opteron

by Johan De Gelas on September 23, 2008 12:00 AM EST - Posted in IT Computing

24 Cores in Action

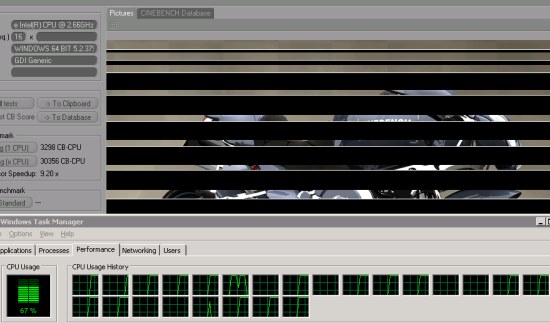

So how do you test 24 cores? This is not at all a trivial question! Many applications are not capable of using more than eight threads, and quite a few are limited to 16 cores. Just look at what happens if you try to render with Cinema4D on this 24-headed monster:

Yes, only 2/3 of the available processing power is effectively used. If you look closely, you'll see that only 16 cores are working at 100%.

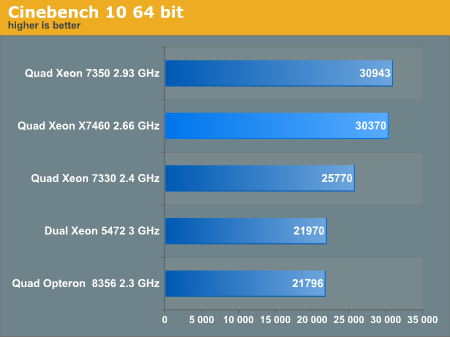

Cinebench is more than happy with the 3MB L2 cache, so adding a 16MB L3 has no effect whatsoever. The result is that only the improved Penryn core can improve the performance of the X7460. The sixteen 45nm Penryn cores at 2.66GHz are able to keep up with the sixteen 65nm Merom cores at 2.93GHz, but of course that is not good enough to warrant an upgrade. 3ds Max 2008 was no different, and in fact it was even worse:

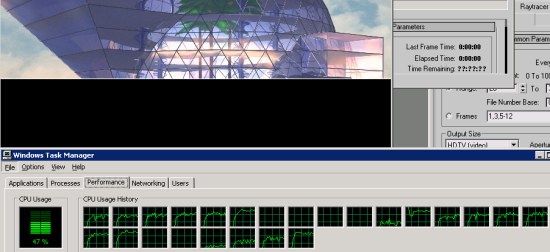

As we had done a lot of benchmarking with 3ds Max 2008, we wanted to see what the new Xeon 7460 could do. The scanline renderer is the fastest for our ray-traced images, but it was not able to fully use 16 cores. Like Cinebench, it completely "forgot" to use the eight extra cores that our Xeon 7460 server offers. The results are very low, around 62 frames per hour, while a quad Xeon X7350 can do 88. As we have no explanation for this weird behavior, we didn't graph the results. We will have to take some time to investigate this further.

Even if we could get the rendering engines to work on 24 cores or more, it is clear that there are better ways to get good rendering performance. In most cases, it is much more efficient to simply buy less expensive servers and use Backburner to render several different images on separate servers simultaneously.

34 Comments


npp - Tuesday, September 23, 2008 - link

I didn't get this one very clearly - why should a bigger cache reduce cache syncing traffic? With a bigger cache, you would have the potential risk of one CPU invalidating a larger portion of the data another CPU already has in its own cache, hence there would be more data to move between the sockets in the end. If we exaggerate this, every CPU having a copy of the whole main memory in its own cache would obviously lead to enormous syncing effort, not the opposite.

I'm not familiar with the cache coherence protocol used by Intel on that platform, but even in the positive scenario of a CPU having data for read-only access in its own cache, a request from another CPU for the same data (the chance for this being bigger given the large cache size) may again lead to increased inter-socket communication, since these data won't be fetched from main memory again.

In all cases, inter-socket communication should be much cheaper than the cost of a main memory access, and it shifts the balance in the right direction - avoiding main memory as long as possible. And now it's clear why Dunnington is a six- rather than eight-core - more cores and less cache would yield a shift in the entirely opposite direction, which isn't what Intel needs until QPI arrives.

narlzac85 - Wednesday, September 24, 2008 - link

In the best case scenario (I hope the system is smart enough to do it this way), with each VM having 4 CPU cores, they can keep all their threads on one physical die. This means that all 4 cores are working on the same VM/data and should need minimal access to data that another die has changed (hypervisor/host OS processes jumping around from core to core would be about it). The inter-socket cache coherency traffic will go down (in the older quad cores, since the 2 physical dual cores have to communicate over the FSB, it might as well have been the same as an 8 socket system populated by dual cores).

Nyceis - Tuesday, September 23, 2008 - link

Can we post here now? :)

JohanAnandtech - Wednesday, September 24, 2008 - link

Indeed. The IT forums gave us trouble quite a few times, and we assume quite a few people do not comment there because they have to register again. I am still searching for a good solution, as these "comment boxes" get messy really quickly.

Nyceis - Tuesday, September 23, 2008 - link

PS - Awesome article - makes me want hex-cores rather than quads in my Xen Servers :)

Nyceis - Tuesday, September 23, 2008 - link

Looks like it :)

erikejw - Tuesday, September 23, 2008 - link

Great article as always.

However, the performance / watt comparison is quite useless for virtualization systems, since they scale well at a multisystem level, and for other reasons too.

It won't hurt to make them, but what users really care about is performance / dollar (over a lifetime).

Say the system will be in use for 3 years.

That makes the total power bill for a 600W system about 2000$, less than the cost of one Dunnington, and since the price difference between the Opteron and Dunnington CPUs is like 4800$, you gotta be pretty ignorant to choose a system on performance / watt alone.

Let's say the AMD system costs 10000$ and the Intel 14800$ (will be more due to DIMM differences) and both have a 3 year life; then the total cost for the systems and power will be 12000 and 16800.

That leaves us with a real basecost/transaction ratio of

Intel 5.09 : 4.25 AMD

AMD is hence 20% more cost effective than Intel in this case.

Any knowledgeable buyer has to look at the whole picture and not at just one cost factor.

I hope that you include this in your other virtualization articles.
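As a rough check of the arithmetic in the comment above, the following sketch adds the quoted power bill to each purchase price and compares the two cost-per-transaction figures. All dollar amounts and ratios are the commenter's own; the function and variable names are illustrative only, and the transaction counts behind the 5.09 and 4.25 figures are not given in the comment, so they are taken as-is.

```python
# Figures quoted in the comment; names below are hypothetical, for illustration.
POWER_BILL_USD = 2_000  # commenter's estimate: 600 W system, 3 years


def total_cost(purchase_usd, power_usd=POWER_BILL_USD):
    """Purchase price plus the power bill over the system's lifetime."""
    return purchase_usd + power_usd


amd_total = total_cost(10_000)    # 12000
intel_total = total_cost(14_800)  # 16800

# Cost-per-transaction figures as quoted in the comment (lower is better).
intel_ratio, amd_ratio = 5.09, 4.25
intel_premium = intel_ratio / amd_ratio - 1  # how much more Intel costs per transaction

print(amd_total, intel_total)
print(f"Intel costs {intel_premium:.1%} more per transaction")
```

The totals match the 12000 and 16800 quoted, and the ratio comes out just under 20%, consistent with the comment's conclusion.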

JohanAnandtech - Wednesday, September 24, 2008 - link

You are right, the best way to do this is to work with TCO. We have done that in our Sun Fire X4450 article. And the feedback I got was to calculate over 5 years, because that was more realistic.

But for the rest I fully agree with you. Will do asap. How did you calculate the power bill?

erikejw - Wednesday, September 24, 2008 - link

Sounds good, will be interesting.

The calculation was just a quick and dirty 600W 24/7 for 3 years, using current power prices.

VM servers are supposed to run like that.
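The "quick and dirty" estimate described above can be sketched as follows. The 600W load, 24/7 duty cycle, and 3-year period come from the comment; the electricity price is not stated there, so the code only derives the price implied by the roughly 2000$ bill quoted earlier in the thread. Function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 24/7 operation, ignoring leap days


def energy_kwh(watts, years):
    """Energy drawn by a constant load running around the clock."""
    return watts * HOURS_PER_YEAR * years / 1000


def power_bill(watts, years, usd_per_kwh):
    """Energy cost at a flat price per kWh (the price is an assumed input)."""
    return energy_kwh(watts, years) * usd_per_kwh


kwh = energy_kwh(600, 3)          # 15768.0 kWh over three years
implied_price = 2_000 / kwh       # price per kWh implied by a ~2000$ bill

print(f"{kwh:.0f} kWh, implied price {implied_price:.3f} $/kWh")
```

Under these assumptions the bill corresponds to a price of roughly 0.13$ per kWh; a different local rate scales the result linearly.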

It would also be interesting to see how the Dunnington responds when using more virtual cores than physical. Will the decline be less than the older Xeons?

What is a typical (core)load when it comes to this?

The Nehalems will respond more like the Athlons in this regard and not lose as much when the load increases, at a higher level than AMD though.

I realised the other day that it seems AMD has built a server CPU that they take the best of and bring to the desktop market, and Intel has done it the other way around.

The Nehalem architecture seems more "serverlike" but will make a bang on the desktop side too.

kingmouf - Thursday, September 25, 2008 - link

I think this is because they have (or should I say had) a different CPU that they wanted to cover that space, the Itanium. But now they are fully concentrated on x86, so...