SSD versus Enterprise SAS and SATA disks

by Johan De Gelas on March 20, 2009 2:00 AM EST - Posted in IT Computing

Configuration and Benchmarking Setup

First, a word of thanks. The help of several people was crucial in making this review happen:

- My colleague Tijl Deneut of the Sizing Server Lab, who spent countless hours with me in our labs. The Sizing Server Lab is an academic lab of Howest (University Ghent, Belgium).

- Roel De Frene of "Triple S" (Server Storage Solutions), which lent us a lot of interesting hardware: the Supermicro SC846TQ-R900B, 16 WD enterprise drives, an Areca 1680 controller and more. S3S is a European company that focuses on servers and storage.

- Deborah Paquin of Strategic Communications, Inc. and Nick Knupffer of Intel US.

As mentioned, S3S sent us the Supermicro SC846TQ-R900B, which you can turn into a massive storage server. The server features a redundant 900W (1+1) power supply feeding a dual Xeon ("Harpertown") motherboard, and up to 24 hot-swappable 3.5" drive bays.

We used two different controllers to keep any single controller from coloring this review too much. When you are using up to eight SLC SSDs in RAID 0, each pushing up to 250 MB/s through the RAID card, the HBA can clearly make a difference. Our two controllers are:

| | Adaptec 5805 SATA-II/SAS HBA | Areca 1680 SATA/SAS HBA |
|---|---|---|
| Firmware | 5.2-0 (16501) 2009-02-18 | v1.46 2009-01-06 |
| I/O processor | 1200MHz IOP348 (Dual-core) | 1200MHz IOP348 (Dual-core) |
| Cache | 512MB 533MHz/ECC (Write-Back) | 512MB 533MHz/ECC (Write-Back) |
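A rough calculation shows why the HBA matters with eight SSDs. The sketch below takes the 250 MB/s per drive from the text and assumes both cards sit in a PCIe 1.x x8 slot at the theoretical 250 MB/s per lane per direction (our assumption, not a figure from this review). It ignores protocol overhead and the IOP348 itself, which is another ceiling.

```python
# Back-of-the-envelope check: can the host link keep up with the SSD array?
# Assumption: the HBA sits in a PCIe 1.x x8 slot, ~250 MB/s per lane per direction.

PCIE1_MBPS_PER_LANE = 250  # theoretical, per direction, before protocol overhead


def array_throughput_mbps(drives: int, per_drive_mbps: float) -> float:
    """Ideal RAID 0 sequential throughput: all drives stream in parallel."""
    return drives * per_drive_mbps


def link_limit_mbps(lanes: int, per_lane_mbps: float = PCIE1_MBPS_PER_LANE) -> float:
    """Theoretical one-direction bandwidth of a PCIe link."""
    return lanes * per_lane_mbps


def bottleneck(drives: int, per_drive_mbps: float, lanes: int) -> str:
    """Return which side saturates first in this idealized model."""
    array = array_throughput_mbps(drives, per_drive_mbps)
    link = link_limit_mbps(lanes)
    return "link" if array >= link else "drives"


if __name__ == "__main__":
    for n in (1, 2, 4, 8):
        array = array_throughput_mbps(n, 250)
        print(f"{n} SSDs: {array:.0f} MB/s vs link {link_limit_mbps(8):.0f} MB/s "
              f"-> limited by {bottleneck(n, 250, 8)}")
```

At eight drives the ideal RAID 0 figure already reaches the theoretical link limit, so the host interface and the controller, rather than the SSDs, are likely to set the ceiling.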

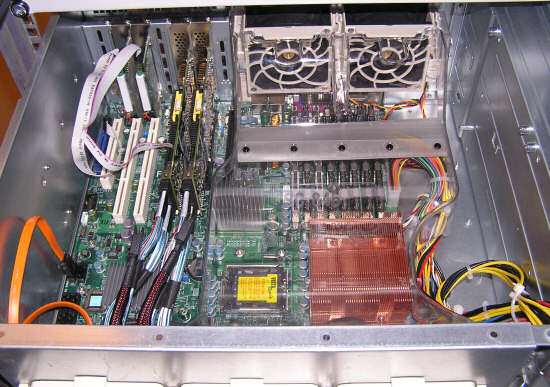

Both controllers use the same I/O processor and more or less the same cache configuration, but as you will see later, the firmware still makes a difference. Below you can see the inside of our storage server, featuring:

- 1x quad-core Harpertown Xeon (E5420 2.5GHz or X5470 3.3GHz)

- 4x2GB 667MHz FB-DIMM (the photo shows it equipped with 8x2GB)

- Supermicro X7DBN mainboard (Intel 5000P "Blackford" Chipset)

- Windows Server 2003 SP2

The small 2.5" SLC drives are mounted in the large 3.5" drive cages.

We used the following disks:

- Intel SSD X25-E SLC SSDSA2SH032G1GN 32GB

- WDC WD1000FYPS-01ZKB0 1TB (SATA)

- Seagate Cheetah 15000RPM 300GB ST3300655SS (SAS)

Next is the software setup.

67 Comments


JohanAnandtech - Friday, March 20, 2009

If you happen to find out more, please mail me (johan@anandtech.com). I think this might have something to do with the inner workings of SATA being slightly less efficient than the SAS protocol, and with the Adaptec firmware, but we have to do a bit of research on this. Thanks for sharing.

supremelaw - Friday, March 20, 2009

Found this searching for "SAS is bi-directional": http://www.sbsarchive.com/search.php?search=parago...

Quoting:

> Remember, one of the main differences between (true) SAS and SATA is that in the interface, SAS is bi-directional, while SATA can only send Data in one direction at a time... More of a bottle-neck than often thought.

Thus, even though an x1 PCI-E lane has a theoretical bandwidth of 250MB/sec IN EACH DIRECTION, the SATA protocol may be preventing simultaneous transmission in both directions.

I hope this helps.

MRFS
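The half-duplex argument above can be made concrete with an idealized model (our simplification, ignoring command overhead, queuing, and the possibility that the drive's media is the real limit). On a half-duplex link reads and writes take turns, so moving R MB of reads and W MB of writes takes (R + W) / B seconds; on a full-duplex link both directions flow at once, so it takes max(R, W) / B. The 66% read / 33% write mix mentioned elsewhere in this thread is a convenient example.

```python
def transfer_time_s(read_mb: float, write_mb: float, link_mbps: float, full_duplex: bool) -> float:
    """Idealized time to move the given read and write volumes over one link."""
    if full_duplex:
        # Both directions transfer concurrently; the larger volume dominates.
        return max(read_mb, write_mb) / link_mbps
    # Half duplex: the link carries only one direction at a time.
    return (read_mb + write_mb) / link_mbps


def duplex_speedup(read_fraction: float) -> float:
    """How much faster full duplex is for a given read share of the traffic."""
    if not 0.0 <= read_fraction <= 1.0:
        raise ValueError("read_fraction must be between 0 and 1")
    read, write = read_fraction, 1.0 - read_fraction
    half = transfer_time_s(read, write, 1.0, full_duplex=False)
    full = transfer_time_s(read, write, 1.0, full_duplex=True)
    return half / full


if __name__ == "__main__":
    for share in (1.0, 2 / 3, 0.5):
        print(f"{share:.0%} reads: full duplex up to {duplex_speedup(share):.2f}x faster")
```

In this model a 66/33 mix could move up to about 1.5 times more data per second over a full-duplex link, while a pure-read or pure-write workload gains nothing. Whether the drives in this review actually reach that limit is the open question in the thread.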

supremelaw - Friday, March 20, 2009

NOW! http://www.dailytech.com/article.aspx?newsid=14630

[begin quote]

Among the offerings are a 16GB RIMM module and an 8GB RDIMM module. The company introduced 50nm 2Gb DDR3 for PC applications last September. The 16GB modules operate at 1066Mbps and allow for a total memory density of 192GB in a dual socket server.

...

In late January 2009, Samsung announced an even higher density DRAM chip at 4Gb that needs 1.35 volts to operate and will be used in 16GB RDIMM modules as well as other applications for desktop and notebook computers in the future. The higher density 4Gb chips can run at 1.6Gbps with the same power requirements as the 2Gb version running at 1066Mbps.

[end quote]

supremelaw - Friday, March 20, 2009

http://techreport.com/articles.x/16255

The ACARD ANS-9010 can be populated with 8 x 2GB DDR2 DIMMs, and higher density SDRAM DIMMs should be forthcoming from companies like Samsung in the coming months (not coming years).

4GB DDR2 DIMMs are currently available from G.SKILL, Kingston et al., e.g.:

http://www.newegg.com/Product/Product.aspx?Item=N8...

I would like to see a head-to-head comparison of Intel's SSDs with various permutations of ACARD's ANS-9010, particularly now that DDR2 is so cheap.

Lifetime warranties anybody? No degradation in performance either, after high-volume continuous WRITEs.

Random access anybody? You have heard of the Core i7's triple-channel memory subsystems, yes? Starting at 25,000 MB/second!!

Yes, I fully realize that SDRAM is volatile and flash SSDs are not, but there are solutions for that problem, some of which are cheap and practical, e.g. dedicating an AT-style PSU and battery backup unit to power otherwise volatile DDR2 DIMMs.

Heck, if the IT community cannot guarantee continuous AC input power, where are we after all these years?

Another alternative is to bulk up on the server's memory subsystem, e.g. use 16GB DIMMs from MetaRAM, and implement a smart database cache using SuperCache from SuperSpeed LLC:

http://www.superspeed.com/servers/supercache.php

Samsung has recently announced larger density SDRAM chips, not only for servers but also for workstations and desktops. I predict that these higher density modules will also show up in laptop SO-DIMMs before too long. There are a lot of laptop computers in the world presently!

After investigating the potential of ramdisks for myself and the entire industry, I do feel it is time that their potential be taken much more seriously and NOT confined to the "bleeding edge" where we "wild enthusiasts" tend to spend a lot of our time.

And, sadly, when I attempted to share some original ideas and drawings with Anand himself, AT THE VERY SAME TIME an attempt was made to defame me on the Internet by blaming me for some hacker who had penetrated the homepage of our website. Because I don't know JavaScript, it took me a few days to isolate that problem, but it was fixed as soon as it was identified.

Now, Anand has returned those drawings, and we're back where we started before we approached him with our ideas.

I conclude that some anonymous person(s) did NOT want Anand seeing what we had to share with him.

Your comments are most appreciated!

Paul A. Mitchell, B.A., M.S.

dba MRFS (Memory-Resident File Systems)
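The "25,000 MB/second" figure quoted above for the Core i7's triple-channel memory follows from the standard peak-bandwidth formula: channels × transfers per second × 8 bytes per 64-bit transfer. The sketch assumes DDR3-1066 modules; the comment does not name a memory speed. Peak figures are theoretical, and sustained bandwidth is lower in practice.

```python
def peak_bandwidth_mbps(channels: int, mega_transfers_per_s: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak DRAM bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bytes_per_transfer = bus_width_bits // 8  # a 64-bit channel moves 8 bytes per transfer
    return float(channels * mega_transfers_per_s * bytes_per_transfer)


if __name__ == "__main__":
    # Assumption: triple-channel DDR3-1066 (1066 MT/s per channel).
    print(peak_bandwidth_mbps(3, 1066))  # 25584.0
```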

masterbm - Friday, March 20, 2009

Still looks like there is a bottleneck in the RAID controller in the 66% read and 33% write test. The one SSD two SSD comparison...

mcnabney - Friday, March 20, 2009

The article does seem to skew at the end. The modeled database had to fit in a limited size for the SSD solution to really shine. So the database size that works well with SSD is one that requires more space than can fit into RAM and less than 500GB when the controllers top-out. That is a pretty tight margin and potentially can run into capacity issues as databases grow.

Also, the closing cost chart has no cost per GB per year line. The conclusion indicates that you can save a couple thousand with a 500GB eight-SSD solution versus a twenty-drive SAS solution, but how many eight-SSD servers will you need to buy to equal the capacity and functionality of one SAS server?

I would have liked to see the performance/cost of a server that could hold the same size database in RAM.
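The capacity question above reduces to simple arithmetic. The sketch takes the roughly 500GB eight-SSD configuration from the comment and assumes the twenty-drive SAS setup uses the 300GB Cheetah from the disk list. The purchase price and service life fed into the cost-per-GB-per-year helper are placeholders for the reader to fill in, not figures from the review.

```python
import math


def arrays_for_capacity(target_gb: float, array_gb: float) -> int:
    """Arrays of array_gb needed to reach at least target_gb (raw capacity, RAID overhead ignored)."""
    return math.ceil(target_gb / array_gb)


def cost_per_gb_per_year(total_cost: float, usable_gb: float, years: float) -> float:
    """Purchase cost spread over capacity and an assumed service life."""
    return total_cost / usable_gb / years


if __name__ == "__main__":
    ssd_gb = 500        # eight SLC SSDs, per the comment
    sas_gb = 20 * 300   # assumption: twenty 300GB 15K SAS drives
    print(arrays_for_capacity(sas_gb, ssd_gb))  # 12
```

Both figures use raw capacity; RAID overhead would change them for either configuration.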

JohanAnandtech - Friday, March 20, 2009

> So the database size that works well with SSD is one that requires more space than can fit into RAM and less than 500GB when the controllers top-out. That is a pretty tight margin and potentially can run into capacity issues as databases grow.

That is a good and pretty astute observation, but I have a few remarks. First of all, EMC and others use Xeons as storage processors. Nothing is going to stop the industry from using more powerful storage processors than the popular IOP348 for SLC drives.

Secondly, SLC drives of 100 GB (and more) are only a few months away.

Thirdly, AFAIK even if you keep the database in RAM, a transactional database still has to write to the storage system if you want it to be fully ACID compliant.
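The durability point is the "D" in ACID: a database can serve reads from RAM, but before it acknowledges a commit it must get a record of the change onto stable storage, typically by appending to a write-ahead log and forcing it to disk. The minimal sketch below illustrates that pattern with a hypothetical in-memory key-value store (`InMemoryKV` is our illustration, not how any particular database is implemented). `os.fsync` is the call that forces the write down to the storage system, which is why log writes stay on the critical path even when all data fits in memory.

```python
import json
import os
import tempfile


class InMemoryKV:
    """Hypothetical key-value store: data lives in RAM, every commit goes to a WAL."""

    def __init__(self, log_path: str):
        self.log_path = log_path
        self.data = {}
        self._replay()  # rebuild the in-memory state from the log after a restart
        self._log = open(log_path, "a", encoding="utf-8")

    def _replay(self) -> None:
        if not os.path.exists(self.log_path):
            return
        with open(self.log_path, encoding="utf-8") as f:
            for line in f:
                record = json.loads(line)
                self.data[record["key"]] = record["value"]

    def commit(self, key: str, value) -> None:
        # 1. Append the change to the log and force it to stable storage.
        self._log.write(json.dumps({"key": key, "value": value}) + "\n")
        self._log.flush()
        os.fsync(self._log.fileno())
        # 2. Only then apply it in memory and treat the commit as durable.
        self.data[key] = value

    def close(self) -> None:
        self._log.close()


def demo() -> dict:
    """Commit a few writes, drop the store as if it crashed, and recover from the log."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "wal.log")
        db = InMemoryKV(path)
        db.commit("a", 1)
        db.commit("b", "x")
        db.commit("a", 2)
        db.close()
        recovered = InMemoryKV(path)
        state = dict(recovered.data)
        recovered.close()
        return state


if __name__ == "__main__":
    print(demo())
```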

mikeblas - Friday, March 20, 2009

I can't seem to find anything about the hardware on which the tests were run. We're told the database is 23 gigs, but we don't know the working set size of the data that the benchmark touches. Is the server running this test already caching a lot in memory? How much?

A system that can hold the data in memory is great when the data is in memory. When the cache is cold, it does you no good, and you still need to rely on IOPS to get you through.
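The cold-cache concern can be expressed as a rough formula: the read load that reaches the disks is about transactions per second × pages read per transaction × (1 − buffer-pool hit rate). The sketch below is illustrative only; the transaction rate, pages per transaction and hit rates are made-up inputs, not measurements from this benchmark, and writes are ignored.

```python
def disk_read_iops(tx_per_s: float, pages_per_tx: float, hit_rate: float) -> float:
    """Physical reads per second; buffer-pool hits never reach the disks (writes ignored)."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return tx_per_s * pages_per_tx * (1.0 - hit_rate)


if __name__ == "__main__":
    # Hypothetical workload: 500 transactions/s, 10 page reads each.
    warm = disk_read_iops(500, 10, hit_rate=0.95)
    cold = disk_read_iops(500, 10, hit_rate=0.0)
    print(f"warm cache: {warm:.0f} IOPS, cold cache: {cold:.0f} IOPS")
```

With these made-up inputs the same transaction rate needs twenty times more IOPS with a cold cache, which is exactly when the storage system's IOPS capability decides the outcome.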

JohanAnandtech - Friday, March 20, 2009

http://it.anandtech.com/IT/showdoc.aspx?i=3532&...

Did you miss the info about the buffer pool being 1 GB large? I can try to get you the exact hit rate, but AFAIK the test goes over the complete database (thus 23 GB), so the hit rate of the 1 GB buffer pool will be pretty low.

Also look at the scaling of the SAS drives: going from 1 SAS drive to 8, we get 4 times better performance. That would not be possible if the database were running from RAM.
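Johan's estimate of a low hit rate can be checked with a small simulation. The sketch models the 1 GB buffer pool as an LRU cache over the 23 GB database, scaled down so that one simulated page stands for 1 MB, and assumes uniformly random page accesses. Both are our assumptions; the benchmark's real access pattern is likely more skewed, which would raise the hit rate. Under uniform access the hit rate settles near pool size / database size, about 1/23 or roughly 4%.

```python
import random
from collections import OrderedDict


def lru_hit_rate(db_pages: int, pool_pages: int, accesses: int, seed: int = 42) -> float:
    """Simulate an LRU buffer pool under uniformly random page accesses."""
    rng = random.Random(seed)
    pool = OrderedDict()  # page numbers, ordered from least to most recently used
    hits = 0
    warmup = 5 * pool_pages  # fill the pool before counting hits
    for i in range(warmup + accesses):
        page = rng.randrange(db_pages)
        if page in pool:
            pool.move_to_end(page)  # mark as most recently used
            if i >= warmup:
                hits += 1
        else:
            pool[page] = None
            if len(pool) > pool_pages:
                pool.popitem(last=False)  # evict the least recently used page
    return hits / accesses


if __name__ == "__main__":
    # Scaled model: 23,000 pages of 1 MB = 23 GB database, 1,000 pages = 1 GB pool.
    rate = lru_hit_rate(db_pages=23_000, pool_pages=1_000, accesses=200_000)
    print(f"simulated hit rate: {rate:.1%} (pool/db = {1_000 / 23_000:.1%})")
```

A skewed access pattern would push this figure up, so the measured hit rate remains the number that settles the question.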

mikeblas - Friday, March 20, 2009

I read it, but it doesn't tell the whole story. The scalability you see in the results would be attainable if a large cache were not being filled, or if the cache were being dumped during the test.

The graphs show transactions per second, which tell us how well the database is performing. They don't show us how well the drives are performing; to understand that, we'd need to see IOPS, among other information. I'd expect that the maximum IOPS in the test is nowhere near the maximum IOPS of the drive array. Since the test wasn't run for any sustained time, the weaknesses of the drives are not being exposed.