OCZ's Fastest SSD, The IBIS and HSDL Interface Reviewed

by Anand Lal Shimpi on September 29, 2010 12:01 AM EST

Take virtually any modern day SSD and measure how long it takes to launch a single application. You’ll usually notice a big advantage over a hard drive, but you’ll rarely find a difference between two different SSDs. Present day desktop usage models aren’t able to stress the performance high end SSDs are able to deliver. What differentiates one drive from another is really performance in heavy multitasking scenarios or short bursts of heavy IO. Eventually this will change as the SSD install base increases and developers can use the additional IO performance to enable new applications.

In the enterprise market however, the workload is already there. The faster the SSD, the more users you can throw at a single server or SAN. There are effectively no limits to the IO performance needed in the high end workstation and server markets.

These markets are used to throwing tens if not hundreds of physical disks at a problem. Even our upcoming server upgrade uses no less than fifty two SSDs across our entire network, and we’re small beans in the grand scheme of things.

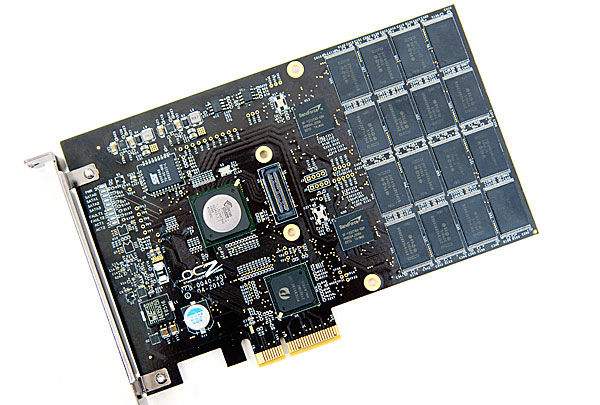

The appetite for performance is so great that many enterprise customers are finding the limits of SATA unacceptable. While we’re transitioning to 6Gbps SATA/SAS, for many enterprise workloads that’s not enough. Answering the call, many manufacturers have designed PCIe based SSDs that do away with SATA as a final drive interface. The designs range from a bunch of SATA based devices paired with a PCIe RAID controller on a single card to native PCIe controllers.

The OCZ RevoDrive, two SF-1200 controllers in RAID on a PCIe card

OCZ has been toying in this market for a while. The zDrive took four Indilinx controllers and put them behind a RAID controller on a PCIe card. The more recent RevoDrive took two SandForce controllers and did the same. The RevoDrive 2 doubles the controller count to four.

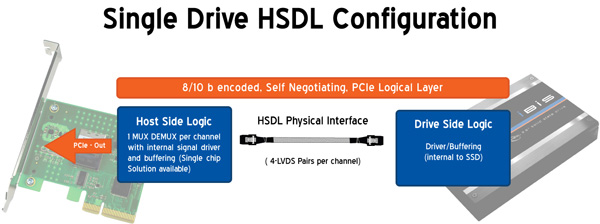

Earlier this year OCZ announced its intention to bring a new high speed SSD interface to the market. Frustrated with the slow progress of SATA interface speeds, OCZ wanted to introduce an interface that would allow greater performance scaling today. Dubbed the High Speed Data Link (HSDL), OCZ’s new interface delivers 2 - 4GB/s (that’s right, gigabytes) of aggregate bandwidth to a single SSD. It’s an absolutely absurd amount of bandwidth, definitely more than a single controller can feed today - which is why the first SSD to support it will be a multi-controller device with internal RAID.

Instead of relying on a SATA controller on your motherboard, HSDL SSDs feature a 4-lane PCIe SATA controller on the drive itself. HSDL is essentially a PCIe cable standard that uses a standard SAS cable to carry four PCIe lanes between an SSD and your motherboard. On the system side you’ll just need a dumb card with some amount of logic to grab the cable and fan the signals out to a PCIe slot.

The first SSD to use HSDL is the OCZ IBIS. As the spiritual successor to the Colossus, the IBIS incorporates four SandForce SF-1200 controllers in a single 3.5” chassis. The four controllers sit behind an internal Silicon Image 3124 RAID controller. This is the same controller used in the RevoDrive which is natively a PCI-X controller, picked to save cost. The 1GB/s of bandwidth you get from the PCI-X controller is routed to a Pericom PCIe x4 switch. The four PCIe lanes stemming from the switch are sent over the HSDL cable to the receiving card on the motherboard. The signal is then grabbed by a chip on the card and passed through to the PCIe bus. Minus the cable, this is basically a RevoDrive inside an aluminum housing. It's a not-very-elegant solution that works, but the real appeal would be controller manufacturers and vendors designing native PCIe-to-HSDL controllers.
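To put the numbers in this data path side by side, here is a rough back-of-the-envelope sketch. It assumes the PCI-X link runs 64 bits wide at 133MHz and that each PCIe lane carries 250MB/s (PCIe 1.x) or 500MB/s (PCIe 2.0) per direction; neither figure is stated in the article, and the function names are made up for illustration. Under those assumptions, a x4 link counted in both directions lands on the 2 - 4GB/s aggregate range quoted for HSDL, while the PCI-X controller sits at roughly 1GB/s.

```python
# Back-of-the-envelope bandwidth for the IBIS data path.
# Assumptions (not from the article): the PCI-X link is 64 bits wide at 133 MHz,
# and PCIe lanes carry 250 MB/s (1.x) or 500 MB/s (2.0) per direction.

PCIE_MB_S_PER_LANE = {"1.x": 250, "2.0": 500}


def pcix_mb_s(width_bits=64, clock_mhz=133):
    """Peak PCI-X throughput in MB/s (one shared bus, one transfer per clock)."""
    return width_bits // 8 * clock_mhz


def pcie_mb_s(lanes, gen, both_directions=False):
    """Peak PCIe throughput in MB/s, per direction unless both_directions is set."""
    per_direction = lanes * PCIE_MB_S_PER_LANE[gen]
    return per_direction * 2 if both_directions else per_direction


if __name__ == "__main__":
    print("PCI-X 64-bit/133MHz:", pcix_mb_s(), "MB/s")
    for gen in PCIE_MB_S_PER_LANE:
        print(f"PCIe {gen} x4:",
              pcie_mb_s(4, gen), "MB/s per direction,",
              pcie_mb_s(4, gen, both_directions=True), "MB/s aggregate")
```

If these assumptions hold, the PCI-X RAID controller rather than the cable is the narrowest point in the IBIS, which is consistent with the appeal of a native PCIe-to-HSDL controller.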

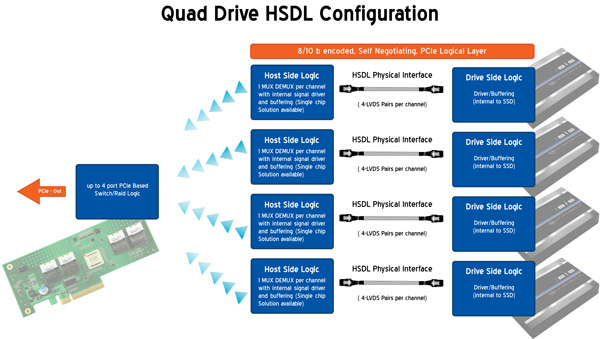

OCZ is also bringing to market a 4-port HSDL card with a RAID controller on board ($69 MSRP). You’ll be able to RAID four IBIS drives together on a PCIe x16 card for an absolutely ridiculous amount of bandwidth. The attainable bandwidth ultimately boils down to the controller and design used on the 4-port card, however. I'm still trying to get my hands on one to find out for myself.

74 Comments


jwilliams4200 - Wednesday, September 29, 2010 - link

Anand:

I suspect your resiliency test is flawed. Doesn't HD Tach essentially write a string of zeros to the drive? And a Sandforce drive would compress that and only write a tiny amount to flash memory. So it seems to me that you have only proved that the drives are resilient when they are presented with an unrealistic workload of highly compressible data.

I think you need to do two things to get a good idea of resiliency:

(1) Write a lot of random (incompressible) data to the drive to get it "dirty"

(2) Measure the write performance of random (incompressible) data while the SSD is "dirty"

It is also possible to combine (1) and (2) in a single test. Start with a "clean" SSD, then configure Iometer to write incompressible data continuously over the entire SSD span, say random 4KB 100% write. Measure the write speed once a minute and plot the write speed vs. time to see how the write speed degrades as the SSD gets dirty. This is a standard test done by Calypso Systems' industrial SSD testers. See, for example, the last graph here:

http://www.micronblogs.com/2010/08/setting-a-new-b...

Also, there is a strange problem with Sandforce-controlled "dirty" SSDs having degraded write speed which is not recovered after TRIM, but it only shows up with incompressible data. See, for example:

http://www.bit-tech.net/hardware/storage/2010/08/1...
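The combined test proposed in this comment (random 4KB writes of incompressible data across the whole span, with write speed sampled at a fixed interval) could be approximated with a short script along the following lines. This is only a sketch, not a substitute for Iometer or a Calypso tester: it writes to an ordinary file through the filesystem rather than to the raw device, and `random_write_trace` and its parameters are hypothetical names chosen for illustration.

```python
import os
import random
import time

BLOCK = 4096  # 4KB writes, as in the suggested workload


def random_write_trace(path, span_bytes, duration_s, sample_s=60.0):
    """Write incompressible 4KB blocks at random offsets inside `path`.

    Returns a list of (elapsed_seconds, MB_per_second) samples, one per
    `sample_s` window. `span_bytes` must be at least one block.
    """
    blocks = span_bytes // BLOCK
    samples = []
    # Pre-size the target so every random offset falls inside the span.
    with open(path, "wb") as f:
        f.truncate(span_bytes)
    with open(path, "r+b", buffering=0) as f:
        start = window_start = time.monotonic()
        written = 0
        while True:
            f.seek(random.randrange(blocks) * BLOCK)
            f.write(os.urandom(BLOCK))  # random bytes do not compress
            written += BLOCK
            now = time.monotonic()
            if now - window_start >= sample_s:
                os.fsync(f.fileno())  # flush so cached writes are counted honestly
                now = time.monotonic()
                samples.append((now - start, written / (now - window_start) / 1e6))
                window_start, written = now, 0
            if now - start >= duration_s:
                break
    return samples
```

Calling something like `random_write_trace("testfile.bin", 8 * 1024**3, duration_s=3600)` and plotting the returned samples would give a write speed vs. time curve. Because the writes pass through the filesystem and OS cache, absolute numbers will differ from a raw-device test; the shape of the curve is the part of interest.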

Anand Lal Shimpi - Wednesday, September 29, 2010 - link

It boils down to write amplification. I'm working on an article now to quantify exactly how low SandForce's WA is in comparison to other controller makers using methods similar to what you've suggested. In the case of the IBIS I'm simply trying to confirm whether or not the background garbage collection works. In this case I'm writing 100% random data sequentially across the entire drive using iometer, then peppering it with 100% random data randomly across the entire drive for 20 minutes. HDTach is simply used to measure write latency across all LBAs.

I haven't seen any issues with SF drives not TRIMing properly when faced with random data. I will augment our HDTach TRIM test with another iometer pass of random data to see if I can duplicate the results.

Take care,

Anand
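For readers unfamiliar with the term, write amplification is the ratio of data the controller writes to NAND to data the host asked it to write. A minimal sketch of the arithmetic, using made-up counter values purely for illustration (they are not measurements of any drive):

```python
def write_amplification(flash_bytes_written, host_bytes_written):
    """Bytes the controller wrote to NAND per byte the host wrote."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return flash_bytes_written / host_bytes_written


# Hypothetical counters, for illustration only.
GIB = 1024 ** 3
print(write_amplification(30 * GIB, 10 * GIB))  # garbage collection rewrites data: 3.0
print(write_amplification(5 * GIB, 10 * GIB))   # controller compresses host data: 0.5
```

A controller that compresses or dedupes can end up below 1.0 on compressible data, which is why the choice of test data matters so much in this thread.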

jwilliams4200 - Wednesday, September 29, 2010 - link

What I would like to see is SSDs with a standard mini-SAS2 connector. That would give a bandwidth of 24 Gbps, and it could be connected to any SAS2 HBA or RAID card. Simple, standards-compliant, and fast. What more could you want?

Well, inexpensive would be nice. I guess putting a 4x SAS2 interface in an SSD might be expensive. But at high volume, I would guess the cost could be brought down eventually.

LancerVI - Wednesday, September 29, 2010 - link

I found the article to be interesting. OCZ introducing a new interconnect that is open for all is interesting. That's what I took from it.

It's cool to see what these companies are trying to do to increase performance, create new products and possibly new markets.

I think most of you missed the point of the article.

davepermen - Thursday, September 30, 2010 - link

problem is, why?

there is NO use of this. there are enough interconnects existing. enough fast, they are, too. so, again, why?

oh, and open and all doesn't matter. there won't be any products besides some ocz stuff.

jwilliams4200 - Wednesday, September 29, 2010 - link

Anand:

After reading your response to my comment, I re-read the section of your article with HD Tach results, and I am now more confused. There are 3 HD Tach screenshots that show average read and write speeds in the text at the bottom right of the screen. In order, the avg read and writes for the 3 screenshots are:

read 201.4

write 233.1

read 125.0

write 224.3

"Note that peak low queue-depth write speed dropped from ~233MB/s down to 120MB/s"

read 203.9

write 229.2

I also included your comment from the article about write speed dropping. But are the read and write rates from HD Tach mixed up?

Anand Lal Shimpi - Wednesday, September 29, 2010 - link

Ah good catch, that's a typo. On most drives the HDTach pass shows impact to write latency, but on SF drives the impact is actually on read speed (the writes appear to be mostly compressed/deduped) as there's much more data to track recover since what's being read was originally stored in its entirety.

Take care,

Anand

jwilliams4200 - Wednesday, September 29, 2010 - link

My guess is that if you wrote incompressible data to a dirty SF drive, that the write speed would be impacted similarly to the impact you see here on the read speed.

In other words, the SF drives are not nearly as resilient as the HD Tach write scans show, since, as you say, the SF controller is just compressing/deduping the data that HD Tach is writing. And HD Tach's writes do not represent a realistic workload.

I suggest you do an article revisiting the resiliency of dirty SSDs, paying particular attention to writing incompressible data.
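The gap between compressible and incompressible test data is easy to demonstrate in isolation. The sketch below uses zlib as a stand-in for the SandForce controller's proprietary compression, which is an assumption made only for illustration; the point is the contrast between a buffer of zeros and a buffer of random bytes, not the exact ratios.

```python
import os
import zlib


def compressed_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data)) / len(data)


BLOCK = 64 * 1024
zeros = bytes(BLOCK)             # the kind of pattern the comment attributes to HD Tach
random_data = os.urandom(BLOCK)  # incompressible data, as suggested for testing

print(f"zeros:  {compressed_ratio(zeros):.4f}")
print(f"random: {compressed_ratio(random_data):.4f}")
```

The zero-filled block shrinks to a tiny fraction of its size, while the random block does not shrink at all. A compressing controller fed the first pattern has very little to write to flash, so a test built on it says little about behavior under the second.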

greggm2000 - Wednesday, September 29, 2010 - link

So how will Lightpeak factor into this? Is OCZ working on a Lightpeak implementation of this? One hopes that OCZ and Intel are communicating here..

jwilliams4200 - Wednesday, September 29, 2010 - link

The first lightpeak cables are only supposed to be 10 Gbps. A mini-SAS2 cable has four lanes of 6 Gbps for a total of 24 Gbps. lightpeak loses.
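The comparison in this comment is simple lane arithmetic, sketched below with the line rates quoted in the thread. The payload figure assumes 8b/10b encoding on the SAS2 link (10 line bits per payload byte); no encoding is assumed for Light Peak, so only its raw rate is shown.

```python
# Raw line rates quoted in the thread, in Gbps.
SAS2_LANE_GBPS = 6
MINI_SAS_LANES = 4
LIGHT_PEAK_GBPS = 10


def aggregate_gbps(lane_gbps, lanes):
    """Total raw line rate across all lanes."""
    return lane_gbps * lanes


def payload_mb_s_8b10b(line_rate_gbps):
    """Usable MB/s under 8b/10b encoding: every 10 line bits carry one payload byte."""
    return line_rate_gbps * 1000 // 10


mini_sas2 = aggregate_gbps(SAS2_LANE_GBPS, MINI_SAS_LANES)
print("mini-SAS2 raw:", mini_sas2, "Gbps")
print("mini-SAS2 payload:", payload_mb_s_8b10b(mini_sas2), "MB/s")
print("Light Peak raw:", LIGHT_PEAK_GBPS, "Gbps")
```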