AMD Frame Pacing Explored: Catalyst 13.8 Brings Consistency to Crossfire

by Ryan Smith on August 1, 2013 2:00 PM EST

In Summary: The Frame Pacing Problem

Before we dive into the technical details of AMD’s frame pacing mechanism and our results, we’re going to spend a moment recapping the basis of the frame pacing problem. If you have been keeping up with this issue, feel free to skip ahead a page; otherwise, please read on.

In brief, in multi-GPU setups, be it single-card products like the GTX 690 or multiple cards such as a pair of 7970s, the primary method of splitting up work is Alternate Frame Rendering (AFR). In AFR, rather than having multiple GPUs work on a single frame, each GPU renders its own frame. Over time this has proven to be the most reliable approach, as attempting to split a single frame across multiple GPUs (with their relatively awful interconnect) has been unreliable and difficult to get working. AFR is by no means perfect, and it has to deal with inter-frame dependencies, where the next frame relies in part on the previous one, but it is still easier to implement and more consistent than earlier attempts at splitting frames.
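As a rough illustration of how AFR divides work, here is a minimal Python sketch. It assumes a strict round-robin split (frame i goes to GPU i mod N) and that every frame depends on the one before it; the function names are hypothetical, and real drivers schedule work and manage dependencies in far more involved ways.

```python
def afr_assignment(num_frames: int, num_gpus: int) -> list[int]:
    """GPU index that renders each frame under simple round-robin AFR."""
    return [frame % num_gpus for frame in range(num_frames)]


def cross_gpu_dependencies(assignment: list[int]) -> list[int]:
    """Frames whose predecessor was rendered on a different GPU.

    If frame i reads data produced by frame i - 1 (an inter-frame dependency),
    that data has to cross the interconnect whenever the two frames ran on
    different GPUs.
    """
    return [i for i in range(1, len(assignment)) if assignment[i] != assignment[i - 1]]


frames = afr_assignment(6, 2)
print(frames)                          # [0, 1, 0, 1, 0, 1]
print(cross_gpu_dependencies(frames))  # [1, 2, 3, 4, 5]
```

With two GPUs, every one of those dependencies crosses from one GPU to the other, which is why inter-frame dependencies remain a standing cost of AFR.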

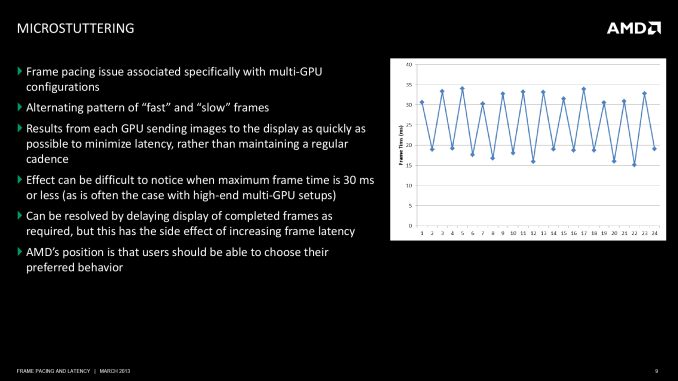

However, left unmanaged, AFR can produce highly uneven intervals between frames, and uneven intervals are what the viewer perceives as stuttering. To do AFR well, it’s necessary to pace the output of each GPU so that frames are delivered at as even a rate as possible: not too soon after the previous frame, and not so late that the following frame arrives right after it. In a 2 GPU setup, which is going to be the most common, this means the second GPU needs to produce a finished frame when the first GPU is roughly halfway done with its current frame. Should this fail to happen, the result is poorly paced frames and perceived micro-stuttering.
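The pacing problem can be shown with a toy timing model. This is a sketch under simplifying assumptions, not a description of any vendor's driver: every frame takes the same fixed time to render, each GPU renders its frames back to back, and the only variable is how far the second GPU's start is offset from the first. The function names are hypothetical.

```python
def completion_times(render_ms: int, num_gpus: int, offset_ms: int, frames: int) -> list[int]:
    """Time at which each frame finishes under AFR.

    Simplifying assumptions: every frame takes exactly render_ms on its GPU,
    GPU g starts its first frame at g * offset_ms, and each GPU renders its
    frames back to back.
    """
    times = []
    for i in range(frames):
        gpu = i % num_gpus            # round-robin AFR assignment
        nth_on_gpu = i // num_gpus    # frames this GPU has already rendered
        times.append(gpu * offset_ms + (nth_on_gpu + 1) * render_ms)
    return times


def frame_intervals(times: list[int]) -> list[int]:
    """Gap between consecutive finished frames."""
    return [later - earlier for earlier, later in zip(times, times[1:])]


# Two GPUs at 30 ms per frame: one frame every 15 ms on average either way.
print(frame_intervals(completion_times(30, 2, 2, 6)))   # [2, 28, 2, 28, 2]  (unpaced)
print(frame_intervals(completion_times(30, 2, 15, 6)))  # [15, 15, 15, 15, 15]  (paced)
```

Both runs deliver the same average frame rate, but only the paced run spaces frames evenly; the unpaced run produces the alternating short/long intervals that are perceived as micro-stutter. Offsetting the second GPU by half a frame time corresponds to the "roughly halfway" target described above.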

Micro-stuttering has been a longstanding issue on multi-GPU setups. Both NVIDIA and AMD have worked on the issue to various degrees, but at the end of the day multi-GPU setups have never proven to be as reliable as single-GPU setups, which is why our editorial position on the matter has been to always favor single powerful GPUs over multiple GPUs whenever possible. Fully solving micro-stuttering and achieving frame pacing consistency on par with single-GPU setups is impractical, but it’s still possible to improve on previous methods and achieve a level of frame pacing that is reasonably effective and “good enough” for most needs. This is what AMD has been focusing on for the past few months.

Moving on, AMD ended up in this situation through a combination of three factors. The first, of course, is the innate technical challenge posed by AFR, while the second and third factors have been a poorly realized position on lag vs. consistency and a failure of competitive analysis, respectively.

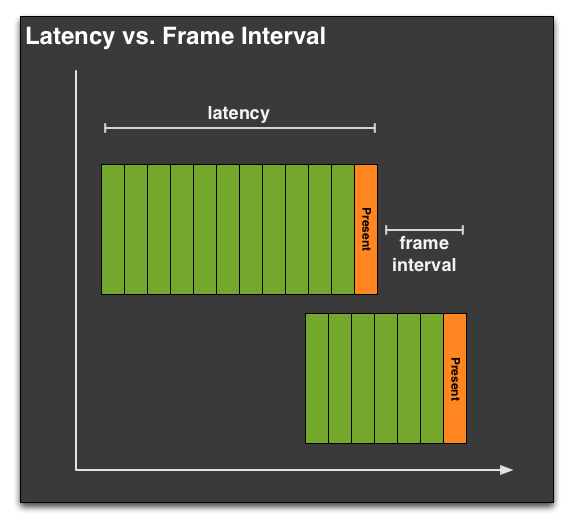

On the matter of lag vs. consistency, AMD’s position up until now has been to favor minimizing input lag in their designs. If you need to hold back a frame to better pace it, then you are by definition introducing some input lag, a quality that is generally undesirable to a user base that usually avoids mechanisms like v-sync for that reason. AMD’s position hasn’t been wrong of course, but it has excluded the option of accepting a bit of input lag to better manage frame pacing. AMD has therefore decided to soften this position and dedicate the resources to supporting both approaches: advanced frame pacing is being introduced as an optional control, while the simpler, less laggy approach remains available as another option.
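To make the lag-versus-consistency trade-off concrete, here is a hypothetical pacing sketch. It is not AMD's mechanism, which this recap does not describe; it simply holds each finished frame until at least a target interval has passed since the previous frame was presented, and reports how long each frame was held. It also ignores the feedback a real driver would see, where delaying one GPU's frame shifts when that GPU starts its next one.

```python
def pace_frames(completions_ms: list[float],
                target_interval_ms: float) -> tuple[list[float], list[float]]:
    """Hold back frames so that no two are presented closer than target_interval_ms.

    Returns (present_times, added_lag), where added_lag is how long each frame
    was held after it finished rendering. Simplification: holding a frame does
    not feed back into when the GPUs start their next frames.
    """
    presents: list[float] = []
    added_lag: list[float] = []
    for done in completions_ms:
        if presents:
            present = max(done, presents[-1] + target_interval_ms)
        else:
            present = done  # nothing to pace against for the first frame
        presents.append(present)
        added_lag.append(present - done)
    return presents, added_lag


# Unpaced AFR output: frames finish in closely spaced pairs.
presents, lag = pace_frames([30, 32, 60, 62, 90, 92], 15)
print(presents)  # [30, 45, 60, 75, 90, 105]
print(lag)       # [0, 13, 0, 13, 0, 13]
```

The output is now evenly spaced, but every other frame reaches the screen 13 ms later than it otherwise could have; that hold time is the input lag cost of pacing.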

Meanwhile the story with competitive analysis is far less complex. Simply put, AMD wasn’t testing for frame pacing as part of their standard competitive analysis, so when these results first broke AMD was caught flat-footed. This is a business failure rather than a technical failure, which makes it easy enough to resolve. But it’s also the reason why AMD needed time to develop an advanced frame pacing mechanism, as they had never seen the need to develop one before.

Ultimately this is a problem that should never have happened, and it is unfortunate that AMD let it come to this. At the same time, however, we believe it’s never too late for redemption, and AMD has been making all of the right moves to try to achieve that. They have been clear about their failures and shortcomings, including their frustration that they’ve left performance on the table by not looking for these issues, and they have been equally clear in laying out a plan for fixing all of this. So today we will finally get to see first-hand whether AMD’s initial efforts to resolve frame pacing in multi-GPU setups have paid off.

102 Comments


chizow - Friday, August 2, 2013

That makes sense, but I guess the bigger concern from the outset was how AMD's allowance of runt frames/microstutter in an "all out performance" mentality might have overstated their performance. You found in your review that AMD performance typically dropped 5-10% as a result of this fix; that should certainly be considered, especially if AMD isn't doing a good job of making sure they implement this frame time fix across all their drivers, games, APIs etc.

Also, any word whether this is a driver-level fix or a game-specific profile optimization (like CF, SLI, AA profiles)?

Ryan Smith - Friday, August 2, 2013

The performance aspect is a bit weird. To be honest I'm not sure why performance was up with Cat 13.6 in the first place. For a mature platform like Tahiti it's unusual.

As for the fix, AMD has always presented it as being a driver level fix. Now there are still individual game-level optimizations - AMD is currently trying to do something about Far Cry 3's generally terrible consistency, for example (an act I'm convinced is equivalent to parting the Red Sea) - but the basic frame pacing mechanism is universal.

Thanny - Thursday, August 1, 2013

Perhaps this will be the end of the ludicrous "runt" frame concept.

All frames with vsync disabled are runts, since they are never completely displayed. With a sufficiently fast graphics card and/or sufficiently less complex game, every frame will be a runt even by the arbitrary definitions you find at sites like this.

And all the while, nothing at all is ever said about the most hideous artifact of all - screen tearing.
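Tearing with vsync off can be sketched with a toy model. The assumptions here are illustrative: the GPU flips buffers at a perfectly steady rate, the display scans out top to bottom across the whole refresh period (blanking is ignored), and every flip that lands mid-scanout leaves exactly one tear line. The small phase offset only keeps flips from landing exactly on refresh boundaries, and the function name is hypothetical.

```python
from fractions import Fraction


def tears_per_refresh(fps: int, refresh_hz: int, duration_s: int = 1,
                      phase_s: Fraction = Fraction(1, 1000)) -> list[int]:
    """Count tear lines in each screen refresh when vsync is off.

    Model: buffers flip every 1/fps seconds starting at phase_s, scanout
    covers the full refresh period (no blanking), and each flip that lands
    mid-scanout leaves one tear line in that refresh.
    """
    frame_period = Fraction(1, fps)
    refresh_period = Fraction(1, refresh_hz)
    counts = [0] * (refresh_hz * duration_s)
    flip = phase_s
    while flip < duration_s:
        if flip % refresh_period != 0:  # a flip exactly at the top of a refresh leaves no tear
            counts[int(flip // refresh_period)] += 1
        flip += frame_period
    return counts


for hz in (60, 120):
    counts = tears_per_refresh(80, hz)
    print(hz, sum(counts), max(counts))  # "60 80 2", then "120 80 1"
```

In this model an 80 fps stream produces 80 tear lines per second on both displays; the 60 Hz panel shows some of them two at a time, while the 120 Hz panel shows at most one per refresh.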

Asik - Thursday, August 1, 2013

There is a simple and definite fix for tearing artifacts and you mention it yourself - vsync. If screen tearing bothers you, and I think it should bother most people, you should keep vsync on at all times.

chizow - Thursday, August 1, 2013

Vsync or frame limiters are certainly workarounds, but they also introduce input lag and largely negate the benefit of having multiple powerful GPUs to begin with. A 120Hz monitor would increase the headroom for Vsync, but by nature it also reduces the need for Vsync (there's much less tearing).

krutou - Friday, August 2, 2013

Triple buffering solves tearing without introducing significant input lag. VSync is essentially triple buffering + frame limiter + timing funny business.

I have a feeling that Nvidia's implementation of VSync might actually not have input lag due to their frame metering technology.

Relevant: http://www.anandtech.com/show/2794/3

chizow - Saturday, August 3, 2013

Yes this is certainly true. When I was on 60Hz I would always enable Triple Buffering when available; however, TB isn't the norm and few games implemented it natively. Even fewer implemented it correctly; most use a 3-frame render-ahead queue, similar to the Nvidia driver forcing it, which is essentially a driver hack for DX.

Having said all that, TB does still have some input lag even at 120Hz, even with Nvidia Vsync, compared to 120Hz without Vsync (my preferred method of gaming now when not using 3D).

vegemeister - Monday, August 5, 2013

The amount of tearing is independent of the refresh rate of your monitor. If you have vsync off, every frame rendered creates a tear line. If you are drawing frames at 80Hz without vsync, you are going to see a tear every 1/80 of a second no matter what the refresh rate of your screen is. The only difference is that a 60Hz screen would occasionally have two tear lines on screen at once.

chizow - Thursday, August 1, 2013

Sorry, not even remotely close to true. Runt frames were literally tiny shreds of frames followed by full frames, unlike normal screen tearing with Vsync off that results in 1/3 or more of the frame being updated at a time, consistently.

The difference is, one method does provide the impression of fluidity and change from one frame to the next (with palpable tearing) whereas runt frames are literally worthless unless you think 3-4 rows worth of image followed by full images provides any meaningful sense of motion.

I do love the term "runt frame" though, an anachronism in the tech world born of AMD's ineptitude with regard to CrossFire. I for one will miss it.
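The distinction between a runt frame and an ordinary torn frame comes down to how long each frame stays in the front buffer relative to the scanout. A minimal sketch, assuming a 60 Hz, 1080-line display, no vertical blanking, and flip times from a poorly paced two-GPU AFR run; the 21-scanline cutoff and the function names are hypothetical, chosen only for illustration.

```python
def visible_scanlines(flip_times_ms: list[float], refresh_hz: int = 60,
                      active_lines: int = 1080) -> list[int]:
    """Approximate scanlines each frame occupies before the next flip (vsync off).

    Assumes scanout sweeps all active_lines evenly across one refresh period,
    ignoring vertical blanking. A frame that stays up for a full refresh or
    longer is capped at active_lines.
    """
    refresh_ms = 1000 / refresh_hz
    lines = []
    for start, end in zip(flip_times_ms, flip_times_ms[1:]):
        covered = (end - start) / refresh_ms * active_lines
        lines.append(min(active_lines, round(covered)))
    return lines


def runt_flags(lines: list[int], threshold: int = 21) -> list[bool]:
    """Flag frames shorter than a hypothetical runt cutoff, in scanlines."""
    return [n < threshold for n in lines]


# Poorly paced AFR: the second GPU finishes 0.25 ms after the first.
flips = [30.0, 30.25, 60.0, 60.25, 90.0, 90.25]
lines = visible_scanlines(flips)
print(lines)              # [16, 1080, 16, 1080, 16]
print(runt_flags(lines))  # [True, False, True, False, True]
```

Every frame in this run is partial in the sense Thanny describes, but the alternating 16-line slivers are the pattern the term "runt frame" refers to.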

Thanny - Thursday, August 1, 2013

You're not making sense. All frames with vsync off are partial. The frame buffer is replaced in the middle of screen updates, so no rendered frame is ever displayed completely.

A sense of motion is achieved by displaying different frames in a time sequence. It has nothing to do with showing parts of different frames in the same screen refresh.

And vsync adds a maximum latency of the inverse of the screen refresh rate (16.67ms for a 60Hz display). On average, it will be half that. If you have a very laggy monitor (Overdrive-TN, PVA, or MVA panel types), that tiny bump from vsync might push the display lag to noticeability. For plain TN and IPS panels (not to mention CRT), there will be no detectable display lag with vsync on.
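The arithmetic above is easy to reproduce. A minimal sketch, assuming a finished frame simply waits for the next refresh boundary and completion times are spread evenly across the refresh interval (which is what makes the average half the worst case); render-ahead queues and panel processing lag are not modeled, and the function name is hypothetical.

```python
def vsync_wait_ms(refresh_hz: float) -> tuple[float, float]:
    """Worst-case and average extra wait for the next refresh with vsync on.

    Assumes frame completion times are uniformly spread over the refresh
    interval, so the average wait is half the worst case.
    """
    period_ms = 1000 / refresh_hz
    return period_ms, period_ms / 2


for hz in (60, 120):
    worst, average = vsync_wait_ms(hz)
    print(f"{hz} Hz: up to {worst:.2f} ms, about {average:.2f} ms on average")
# 60 Hz: up to 16.67 ms, about 8.33 ms on average
# 120 Hz: up to 8.33 ms, about 4.17 ms on average
```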