Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST

Posted in: Cloud Computing, IT Computing, Intel, Xeon, Ivy Bridge EP, Supermicro

The Server CPU Temperatures

Given Intel's dominance in the server market, we will focus on the Intel Xeons. The "normal", non-low-power Xeons have a specified Tcase ranging from 75 °C (167 °F) for the 95 W parts to 88 °C (190 °F) for the 130 W parts. Tcase is the temperature measured by a thermocouple embedded in the center of the heat spreader, so these limits leave a lot of temperature headroom. The low-power Xeons (70 W TDP or less) have less headroom, as their Tcase is a fairly low 65 °C (149 °F). But since those Xeons produce a lot less heat, it should be easier to keep them at lower temperatures. In all cases, there is quite a bit of headroom.

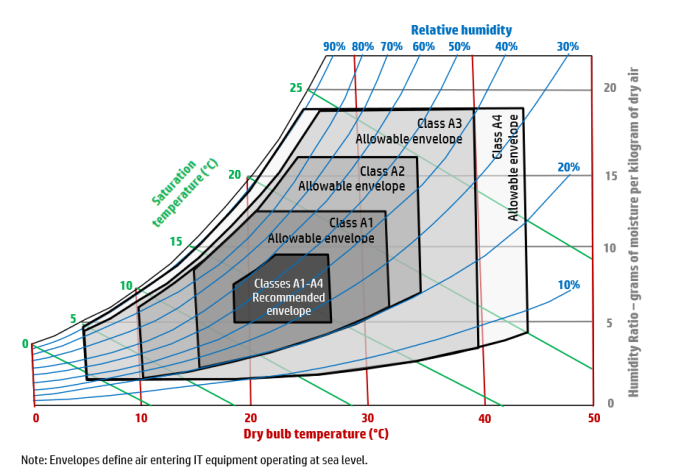
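As a rough illustration of that headroom, the sketch below converts the Tcase limits quoted above to Fahrenheit and subtracts an inlet air temperature from each. The 35 °C inlet is an assumption chosen to match the A2 limit mentioned in this article, and the function names are made up for this example. Note that Tcase is a heat spreader temperature, not an air temperature, so the difference is only the budget the heatsink and fans have to work with, not a guaranteed safety margin.

```python
# Tcase limits quoted in the article, in degrees Celsius.
TCASE_C = {
    "Xeon, 95 W TDP": 75.0,
    "Xeon, 130 W TDP": 88.0,
    "low-power Xeon, 70 W TDP or less": 65.0,
}


def c_to_f(celsius: float) -> float:
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0


def thermal_budget(tcase_c: float, inlet_c: float) -> float:
    """Degrees Celsius between the inlet air and the Tcase limit."""
    return tcase_c - inlet_c


if __name__ == "__main__":
    inlet_c = 35.0  # assumption: the A2 inlet limit mentioned in the article
    for name, tcase in TCASE_C.items():
        budget = thermal_budget(tcase, inlet_c)
        print(
            f"{name}: Tcase {tcase:.0f} °C ({c_to_f(tcase):.0f} °F), "
            f"{budget:.0f} °C above a {inlet_c:.0f} °C inlet"
        )
```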

But there is more to a server than the CPU, of course; the complete server must be able to run at higher temperatures. That is where the ASHRAE specifications come in. The American Society of Heating, Refrigerating and Air-Conditioning Engineers publishes guidelines for the temperature and humidity operating ranges of IT equipment. If vendors comply with these guidelines, administrators can be sure that they will not void warranties when running servers at higher temperatures. Most vendors, including HP and Dell, now allow the inlet temperature of a server to be as high as 35 °C, the so-called A2 class.

ASHRAE specifications per class

The specified temperature is the so-called "dry bulb" temperature, which is the temperature measured by an ordinary dry thermometer. Humidity should be approximately between 20 and 80%. Specially equipped servers (Class A4) can go as high as 45 °C, with humidity between 10 and 90%.
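To make the class limits concrete, here is a minimal sketch that checks an inlet reading against the figures quoted in this article: 35 °C and roughly 20 to 80% humidity for A2, 45 °C and 10 to 90% for A4. The `Envelope` type and function names are invented for this example, and the full ASHRAE guidelines contain more conditions than these upper limits, so treat it as an illustration rather than a compliance check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Upper inlet limit and humidity range for one class, as quoted in the article."""
    max_inlet_c: float
    min_rh_pct: float
    max_rh_pct: float


# Only the limits mentioned in the article; the real guidelines define more.
ENVELOPES = {
    "A2": Envelope(max_inlet_c=35.0, min_rh_pct=20.0, max_rh_pct=80.0),
    "A4": Envelope(max_inlet_c=45.0, min_rh_pct=10.0, max_rh_pct=90.0),
}


def within(class_name: str, inlet_c: float, rh_pct: float) -> bool:
    """True if the dry bulb inlet temperature and humidity fit the class."""
    env = ENVELOPES[class_name]
    return inlet_c <= env.max_inlet_c and env.min_rh_pct <= rh_pct <= env.max_rh_pct


def allowed_classes(inlet_c: float, rh_pct: float) -> list[str]:
    """Names of all classes whose quoted limits cover this reading."""
    return [name for name in ENVELOPES if within(name, inlet_c, rh_pct)]


if __name__ == "__main__":
    for reading in [(30.0, 50.0), (40.0, 50.0), (40.0, 95.0)]:
        print(reading, allowed_classes(*reading))
```

A 40 °C inlet at 50% humidity, for instance, falls outside the A2 limits quoted here but inside the A4 ones.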

It is hard to overestimate the impact of servers being capable of breathing hotter air. In modern data centers this ability could be the difference between being able to depend on free cooling alone and having to continue to invest in very expensive chilling installations. Being able to use free cooling comes with both OPEX and CAPEX savings. In traditional data centers, servers that tolerate hotter air allow administrators to raise the room temperature and reduce the energy that cooling requires.

And last but not least, it increases the time before a complete shutdown is necessary when the cooling installation fails. The more headroom you get, the easier it is to fix the cooling problems before critical temperatures are reached and the reputation of the hosting provider is tarnished. In a modern data center, a higher allowed inlet temperature is almost the only way to run most of the year with free cooling.

Raising the inlet temperature is not easy when you are providing hosting for many customers (i.e. a "multi-tenant data center"). Most customers resist warmer data centers, with good reason in some cases. We watched a 1U server use 80 W to power its fans out of a total of less than 200 W! In that case, the savings of the data center facility are paid for by the extra energy consumed by the IT equipment. It's great for the data center's PUE, but not very compelling for customers.
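The arithmetic behind that last point can be sketched as follows. PUE is total facility power divided by IT equipment power, and server fans count as IT power. The 80 W and 200 W figures come from the observation above; the "cool room" and "warm room" scenarios are purely hypothetical numbers chosen to show how PUE can improve while the total power drawn stays the same.

```python
def fan_share(fan_w: float, server_w: float) -> float:
    """Fraction of a server's power draw that goes to its fans."""
    return fan_w / server_w


def pue(it_kw: float, overhead_kw: float) -> float:
    """PUE = total facility power / IT equipment power."""
    return (it_kw + overhead_kw) / it_kw


if __name__ == "__main__":
    # From the article: 80 W of fan power on a server drawing under 200 W,
    # so the fans take at least 40% of the total.
    print(f"fan share: at least {fan_share(80.0, 200.0):.0%}")

    # Hypothetical cool room: 100 kW of IT load, 50 kW of cooling and other overhead.
    cool_it_kw, cool_overhead_kw = 100.0, 50.0
    # Hypothetical warm room: overhead halves, but faster fans add 25 kW of IT load.
    warm_it_kw, warm_overhead_kw = 125.0, 25.0

    for label, it_kw, overhead_kw in [
        ("cool room", cool_it_kw, cool_overhead_kw),
        ("warm room", warm_it_kw, warm_overhead_kw),
    ]:
        total_kw = it_kw + overhead_kw
        print(f"{label}: total {total_kw:.0f} kW, PUE {pue(it_kw, overhead_kw):.2f}")
```

In this made-up scenario both rooms draw 150 kW, yet the warm room reports the better PUE, and the customer's IT power bill has gone up by 25 kW.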

But how about the latest servers that support much higher inlet temperatures? Supermicro claims their servers can work with up to 47 °C inlet temperatures. It's time to do what AnandTech does best and give you facts and figures so you can decide if higher temperatures are viable.

48 Comments

extide - Tuesday, February 11, 2014

Yeah there is a lot of movement in this these days, but the hard part of doing this is at the low voltages used in servers <=24v, you need a massive amount of current to feed several racks of servers, so you need massive power bars and of course you can lose a lot of efficiency on that side as well.

drexnx - Tuesday, February 11, 2014

afaik, the Delta DC stuff is all 48v, so a lot of the old telecom CO stuff is already tailor-made for use there. but yes, you get to see some pretty amazing buswork as a result!

Ikefu - Tuesday, February 11, 2014

Microsoft is building a massive data center in my home state just outside Cheyenne, WY. I wonder why more companies haven't done this yet? It's very dry and days above 90F are few and far between in the summer. Seems like an easy cooling solution versus all the data centers in places like Dallas.

rrinker - Tuesday, February 11, 2014

Building in the cooler climes is great - but you also need the networking infrastructure to support said big data center. Heck for free cooling, build the data centers in the far frozen reaches of Northern Canada, or in Antarctica. Only, how will you get the data to the data center?

Ikefu - Tuesday, February 11, 2014

It's actually right along the I-80 corridor that connects Chicago and San Francisco. Several major backbones run along that route and it's why many mega data centers in Iowa are also built along I-80. Microsoft and the NCAR Yellowstone supercomputer are there so the large pipe is definitely accessible.

darking - Tuesday, February 11, 2014

We've used free cooling in our small datacenter since 2007. It's very effective from September to April here in Denmark.

beginner99 - Tuesday, February 11, 2014

That map of Europe is certainly plain wrong. Especially Spain, but also Greece and Italy, easily have some days above 35. It also happens a couple of days per year where I live, a lot further north than any of those.

ShieTar - Thursday, February 13, 2014

Do you really get 35°C, in the shade, outside, for more than 260 hours a year? I'm sure it happens for a few hours a day in the two hottest months, but the map does cap out at 8500 out of 8760 hours.

juhatus - Tuesday, February 11, 2014

What about wear and tear from running the equipment at hotter temperatures? I remember seeing the chart where higher temperature = shorter life span. I would imagine the OEMs have engineered a bit over this and warranties aside, it should be basic physics?

zodiacfml - Wednesday, February 12, 2014

You just need constant temperature and equipment that works at that temperature. Wear and tear happens significantly at temperature changes.