The Samsung Exynos 7420 Deep Dive - Inside A Modern 14nm SoC

by Andrei Frumusanu on June 29, 2015 6:00 AM EST

The Exynos 7420 - Inside a Modern SoC

At this point it’s hard to deny that the Exynos 7420 has a clear process advantage over the current competition, but before we go into more benchmarks and detailed power numbers, I’d like to take the opportunity to do something we haven’t done before: a dissection of what is actually inside a modern SoC such as the Exynos 7420.

Over the past few years SoCs have grown more complex and transistor counts have shot up, but we have rarely had the occasion to look into what kind of blocks are actually included in such large designs. PR material provided by companies often includes only rough simplifications such as CPU core counts or GPU configurations. Some companies such as Qualcomm are even hesitant to give any kind of information on their IP; the Adreno GPU, for example, remains a mysterious black box when it comes to its architecture. The primary processing blocks of Samsung’s SoCs are relatively well known because they use IP designed by ARM, whose designs we have had the opportunity and pleasure to cover extensively in articles such as our architectural deep-dives on the Mali Midgard design or ARM’s A53/A57 CPUs. While we feel we have a good understanding of the CPU and GPU, we know little of the remaining SoC components as they never get talked about.

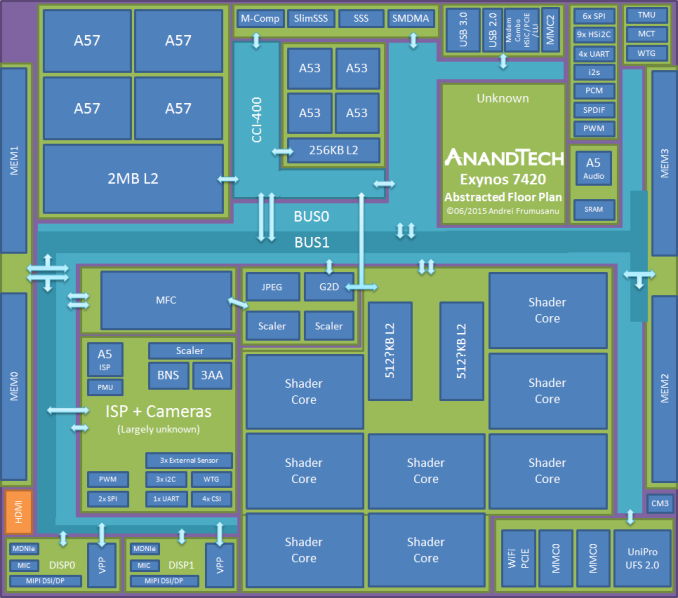

Unfortunately, when I asked Samsung SLSI about details of the Exynos SoCs, SLSI could not publicly comment on the architecture or details of current products. In order to learn more about this subject, I tried to reverse-engineer my way through the various IP blocks to re-create a high-level abstracted overview of what the SoC looks like.

Before talking about the different blocks and layout, I’d like to put a disclaimer out there that this graphic is purely an abstracted plan of the true physical layout. I did have access to a die shot of the chipset to base my analysis on, but we are unable to post this die shot. Blocks such as the GPU, CPUs and memory controllers can be considered representative of their actual size and location, while other blocks such as the ISP and the top right quadrant are large functional simplifications for the purpose of presentation.

As mentioned in the manufacturing process section, the Exynos 7420 remains a relatively small SoC as it comes in at only 78mm². The biggest IP block is by far the Mali T760 GPU cluster sized at 17.7mm², consuming 22.6% of the SoC, nearly a quarter of the whole die. Historically speaking, this falls in line with what Samsung has previously budgeted for the GPU since the Exynos 5420. The individual shader cores are among the largest individual blocks on the SoC as they come in at 1.75mm² each. All 8 cores are connected via a common fabric and two islands of L2 cache. Samsung has officially disclosed that the Exynos 5433’s Mali GPU comes with 512KB of total L2 cache, and size comparisons between the cache islands and shader cores of the two SoCs show that the Exynos 7420 has a considerably larger L2 to shader core ratio, pointing to the possibility that its size may have doubled to 512KB per MMU for a total of 1MB. We unfortunately won't know for sure until Samsung eventually releases more information on the Exynos 7420.
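To make the area budget a little more tangible, here is a minimal sketch of the arithmetic using only the rounded figures quoted above. The variable names are my own, and since the inputs are rounded, the computed GPU share lands a hair off the quoted 22.6%.

```python
# Rounded area figures quoted in the text, in mm². Names are illustrative only.
DIE_AREA = 78.0
GPU_AREA = 17.7
SHADER_CORE_AREA = 1.75
SHADER_CORE_COUNT = 8

# Area taken by the eight shader cores alone.
shader_area_total = SHADER_CORE_AREA * SHADER_CORE_COUNT

# Whatever is left inside the GPU block: common fabric, L2 islands, MMUs, etc.
gpu_uncore_area = GPU_AREA - shader_area_total

# Share of the whole die taken by the GPU cluster.
gpu_share = GPU_AREA / DIE_AREA

print(f"Shader cores: {shader_area_total:.2f} mm²")
print(f"GPU fabric, L2 and the rest: {gpu_uncore_area:.2f} mm²")
print(f"GPU share of die: {gpu_share:.1%}")
```

In other words, roughly 14mm² of the GPU block goes to the shader cores themselves, leaving a little under 4mm² for the fabric and the two L2 islands.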

Moving on to other IP blocks we have concrete information on, we see the Cortex A57 “big” CPU cluster positioned in one corner of the SoC, largely opposite the GPU. According to information Samsung released during ISSCC 2015, this positioning was chosen for the best thermal management of the SoC. Placing the two most power-consuming blocks as far from each other as possible makes sense to keep hot-spots to a minimum and maximize the thermal dissipation potential of the whole SoC.

The Cortex A53 “little” cluster is located right next to the A57 cluster. We see the same configuration as on the 5433: four A53 cores with 256KB of L2 cache. The 14nm die shrink makes this currently the smallest modern 4-core cluster among existing SoCs, as it comes in at only 2.71mm².

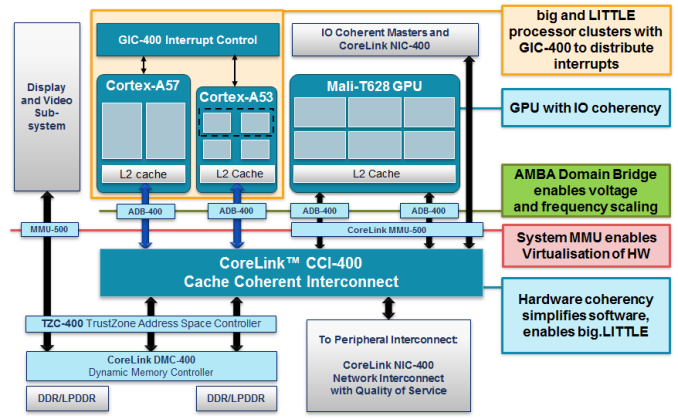

Between the two CPU clusters we find the ARM Cache Coherent Interconnect (CCI-400), the core IP that allows heterogeneous multiprocessing between different CPU architectures and the cornerstone of big.LITTLE SoCs. Besides the CPU clusters, the CCI-400 can also connect three further slave IP blocks to form a group of cache coherent devices. This is the point where things get interesting; there is a general lack of public information from semiconductor vendors on what the CCI and general internal bus architecture look like. For the Exynos 7420 I was able to confirm at least four of the five possible ports on the CCI.

Again, we have the obvious two CPU clusters each occupying a port on the CCI, which is required for heterogeneous and simultaneous operation of the two clusters. As a further CCI slave device, Samsung chooses to connect the G2D block (on the same port as the GPU), whose full name is FIMG2D (Fully Integrated Mobile Graphics 2D) and which is the 2D graphics accelerator of Exynos SoCs. The G2D block is part of a larger block dedicated to 2D image manipulation called the MSCL, an acronym for M-Scaler, although I’m not too sure what the M stands for, maybe Media? The overall block contains two dedicated fixed-function hardware image scalers as well as a JPEG compression and decompression unit. For example, video streams will pass through this block to be re-scaled to a display’s resolution.

Another CCI-connected block is a new kind of IP that we haven’t seen before in the mobile space: a memory compressor. Blandly named “Exynos Memory Compressor” or M-Comp, this is an interesting specialized piece of IP that has yet to play a role in the Galaxy S6. I’m quite certain this is a hardware block targeted and designed especially for Android. Since Android 4.4, kernel DRAM compression mechanisms have been a validated part of the OS and all devices come with one form or another of the feature. Most vendor devices come with the “zram” mechanism, which is a ramdisk with compression support. The kernel sets this up as a swap device to store rarely used memory pages. Samsung had implemented this in its Galaxy devices as far back as Android 4.1.

The Galaxy S6 makes use of a more advanced implementation called “zswap”, which is able to compress memory pages before they need to get swapped out to a swap device, so it’s a more optimized mechanism that sits closer to the kernel’s memory management core. An everyday example of its effects can be seen when multi-tasking a few apps: a sample readout shows it’s able to compress 1.21GB of pages into 341MB of physical memory. Being able to offload the compression to a dedicated hardware block would be a great power efficiency optimization, so hopefully we can look forward to a future release or OS update for the device with a software stack that can use the hardware unit.
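To put the sample readout into perspective, the following is a small sketch of the ratio and savings it implies. The helper name is hypothetical, and I am assuming 1GB = 1024MB for the conversion; with 1000MB per GB the ratio comes out slightly lower, at roughly 3.5:1.

```python
def compression_stats(original_mb: float, compressed_mb: float) -> tuple[float, float]:
    """Return (compression ratio, megabytes saved) for a compressed page pool.

    Hypothetical helper for illustration; it is not part of any kernel interface.
    """
    ratio = original_mb / compressed_mb
    saved_mb = original_mb - compressed_mb
    return ratio, saved_mb


# Figures from the sample readout in the text. Assumption: 1 GB = 1024 MB.
original_mb = 1.21 * 1024
compressed_mb = 341.0

ratio, saved_mb = compression_stats(original_mb, compressed_mb)
print(f"Compression ratio: about {ratio:.1f}:1")
print(f"Physical memory saved: about {saved_mb:.0f} MB")
```

That is close to 900MB of DRAM effectively won back, which is why doing the compression work in a low-power fixed-function block rather than on the CPU cores is an attractive proposition.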

The memory compressor should be part of a larger block called “IMEM” which contains other elements such as the SSS (Security Sub-System). This is a hardware cryptographic accelerator that has been part of Exynos SoCs since the S5PC110 (later renamed Exynos 3110) and is able to accelerate encryption and decryption for various ciphers. It includes a DMA engine so that it can directly access storage for fast full disk encryption. I wasn’t able to confirm whether the 7420 physically still has this block as there were no drivers for it. It may be possible that Samsung has dropped it in favor of using the cryptographic capabilities of ARMv8, but it would still make sense to maintain a fixed-function IP block for power efficiency.

CCI-400 example layout as published by ARM and used in LG's Odin/Nuclun SoC

The Exynos line of SoCs does not follow this arrangement.

As mentioned earlier, one of the CCI ports is shared between the G2D block and the GPU. This is also a large difference from how ARM advertises its example SoC configuration of the CCI: we mostly see Mali GPUs get two ports on the CCI. This makes sense as each port is 128 bits wide in both read and write directions. Vendors have up until now been clocking the CCI at around half the DRAM frequency, as most LPDDR3 SoCs saw it running at 400-466MHz. One example SoC which closely follows ARM’s depiction of such a bus architecture is LG’s Odin (Nuclun), as it runs two ports to the CCI at 400MHz with 800MHz memory controllers. Having a single 128-bit port to the GPU would limit its bandwidth to only half the achievable bandwidth of 2x 32-bit memory controllers, so that’d be a waste of resources. Furthermore, the Exynos 7420 clocks the CCI at only up to 532MHz. This is an interesting divergence from the DRAM frequency / 2 rule we’ve seen until now, and also means that a single CPU cluster is technically unable to saturate main memory bandwidth on the 7420. The per-port bandwidth is limited to 8.5GB/s in each of the read and write directions for a concurrent total of 17GB/s, a figure we’ll be able to verify later on in the CPU performance section.
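The bandwidth figures above follow from simple width-times-clock arithmetic, sketched below. The function names are my own, and the sketch assumes one transfer per CCI clock, double data rate on the DRAM side, and 1GB = 10^9 bytes; these are theoretical peaks, not measured numbers.

```python
def port_bandwidth_gbs(width_bits: int, clock_mhz: float) -> float:
    """Peak bandwidth of a bus port in one direction, in GB/s.

    Assumes one transfer per clock cycle (hypothetical helper).
    """
    return (width_bits / 8) * clock_mhz * 1e6 / 1e9


def dram_bandwidth_gbs(bus_bits: int, clock_mhz: float) -> float:
    """Peak DRAM bandwidth in GB/s, assuming double data rate signalling."""
    return (bus_bits / 8) * clock_mhz * 2 * 1e6 / 1e9


# LG Odin style layout: 128-bit CCI ports at 400MHz, 2x 32-bit LPDDR3 at 800MHz.
odin_port = port_bandwidth_gbs(128, 400)   # about 6.4 GB/s per port
odin_dram = dram_bandwidth_gbs(64, 800)    # about 12.8 GB/s, i.e. two ports' worth

# Exynos 7420: 128-bit CCI ports at up to 532MHz.
exynos_port = port_bandwidth_gbs(128, 532)  # about 8.5 GB/s per direction
exynos_port_concurrent = 2 * exynos_port    # about 17 GB/s read plus write

print(f"Odin: {odin_port:.1f} GB/s per port vs {odin_dram:.1f} GB/s DRAM")
print(f"7420: {exynos_port:.1f} GB/s per direction, "
      f"{exynos_port_concurrent:.1f} GB/s concurrent")
```

With the Odin numbers, a single port covers exactly half of the DRAM bandwidth, which is why ARM’s example configuration hands the GPU two ports.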

The last CCI port which I didn’t actually depict in the layout should go to the CoreSight block, ARM’s system IP for debugging and trace of SoCs.
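Pulling the port assignments described so far together, the CCI layout can be summarized as a small data structure. This is only my reading of the text expressed as a sketch: the class and field names are invented for illustration, the ACE/ACE-Lite split reflects the CCI-400's two full-coherency and three I/O-coherent slave interfaces, and marking CoreSight as the unconfirmed port is my inference from "at least four of the five".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CciPort:
    """One slave port on the CCI-400 (illustrative model, not a real API)."""
    kind: str                 # "ACE" (fully coherent) or "ACE-Lite" (I/O coherent)
    masters: tuple[str, ...]  # IP blocks attached to this port
    confirmed: bool           # whether the connection could be confirmed


# Port map as described in the text; ordering is arbitrary.
EXYNOS_7420_CCI = (
    CciPort("ACE", ("Cortex A57 cluster",), True),
    CciPort("ACE", ("Cortex A53 cluster",), True),
    CciPort("ACE-Lite", ("G2D", "Mali T760 GPU"), True),   # shared port
    CciPort("ACE-Lite", ("M-Comp",), True),
    CciPort("ACE-Lite", ("CoreSight",), False),            # assumed, not depicted
)

for index, port in enumerate(EXYNOS_7420_CCI):
    status = "confirmed" if port.confirmed else "assumed"
    print(f"port {index}: {port.kind:8s} {' + '.join(port.masters)} ({status})")
```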

This leaves open the question of how the GPU is actually connected to the memory controllers. One thing is for sure: it doesn’t go through the CCI. Samsung calls its internal bus architecture a “Multi-Layer AXI/AHB Bus Architecture”. AXI and AHB are both specifications defined in ARM AMBA (Advanced Microcontroller Bus Architecture), an interconnect standard used in one way or another in basically all of today’s SoCs. We know that there is at least a large 2-layer separation: an “Internal” bus, which I depict in the SoC schematic as BUS0, and a “Memory Interface” bus, depicted as BUS1. There is also a less important peripheral bus that I left out, as it connects smaller low-bandwidth IPs that are not as interesting to the discussion.

The memory interface bus operates on the same clock plane as the actual LPDDR4 memory controllers, reaching up to 1555MHz. The memory controllers are physically spread along two sides of the SoC die. Each memory controller has 2x 16-bit interfaces directly to the DRAM dies. This means that the DRAM PoP module contains 4 DRAM dies, standard among SoCs with a 64-bit total bus width. The most trouble I’ve had in deciphering how the internal bus layout works was trying to understand how the memory controllers interact with the various buses. It’s clear that Samsung’s bus architecture is considerably more complex than the simpler designs we have public information about. Sadly, unless new resources become available that we can fall back on, the best we can do is guess at how main traffic flows throughout the chip.
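As a rough sanity check on the memory side, the sketch below works out the theoretical peak LPDDR4 bandwidth from the figures above. It assumes two memory controllers (matching the 64-bit total), double data rate signalling and 1GB = 10^9 bytes; the names are illustrative, and the 8.5GB/s value is the per-port CCI figure quoted earlier.

```python
# Memory configuration as described in the text; names are illustrative.
MEMORY_CONTROLLERS = 2          # assumption consistent with the 64-bit total width
INTERFACES_PER_CONTROLLER = 2   # 2x 16-bit interfaces per controller
INTERFACE_WIDTH_BITS = 16
DRAM_CLOCK_MHZ = 1555
TRANSFERS_PER_CLOCK = 2         # double data rate

total_interfaces = MEMORY_CONTROLLERS * INTERFACES_PER_CONTROLLER
total_bus_bits = total_interfaces * INTERFACE_WIDTH_BITS
mega_transfers = DRAM_CLOCK_MHZ * TRANSFERS_PER_CLOCK

# Bytes per transfer times transfers per second, expressed in GB/s.
peak_dram_gbs = (total_bus_bits / 8) * mega_transfers * 1e6 / 1e9

CCI_PORT_READ_GBS = 8.5         # per-port, per-direction figure quoted in the text
ports_to_match_dram = peak_dram_gbs / CCI_PORT_READ_GBS

print(f"{total_interfaces} x {INTERFACE_WIDTH_BITS}-bit = {total_bus_bits}-bit bus")
print(f"Peak LPDDR4 bandwidth: {peak_dram_gbs:.2f} GB/s at {mega_transfers} MT/s")
print(f"Equivalent to about {ports_to_match_dram:.1f} CCI port reads")
```

On these assumptions the DRAM can deliver nearly three times what a single CCI port can read, which underlines why the GPU needs a path to memory that bypasses the CCI.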

All major I/O IP blocks are attached to the internal bus. These are physically separated into two major blocks called FSYS0 and FSYS1. They include 3 Synopsys DesignWare MMC controllers (2x 8-bit, 1x 4-bit) that can be used for eMMC, WiFi SDIO and external SD card connections. A MIPI UniPro controller for UFS 2.0 is of course also part of the storage interfaces and is used for the NAND storage on the Galaxy S6.

Over the last year we’ve seen WiFi connectivity migrate from SDIO interconnects to PCIe-based ones. Both Qualcomm and Samsung made this migration for their top-end SoCs by including PCIe controllers. The Galaxy Note 4 was one of the first phones to make this switch with Broadcom’s BCM4358 WiFi SoC. According to Broadcom, the reasons for this are two-fold. The first is performance, as PCIe offers significantly reduced processing overhead as well as DMA capability. The second is power efficiency, as the PCIe spec allows for lower and more fine-grained low power states than the SDIO interface.

114 Comments

Andrei Frumusanu - Monday, June 29, 2015 - link

Frankly, I don't know. I tried to ask Samsung a similar question but they refused to comment on customer relations. Meizu so far seems to be the only major vendor consistently using Exynos parts, but why we haven't seen other vendors adopt them could be down to anything from pricing to volume availability. Only the companies themselves know the details of these contracts.

gnx - Monday, June 29, 2015 - link

Thanks! The SoC market is really strange.

id4andrei - Monday, June 29, 2015 - link

This is Samsung's chance to eat Qualcomm's lunch. Close down node manufacturing for others (including Apple) and be like Intel. Either use Exynos or be satisfied with inferior nodes from other fabs.

CiccioB - Monday, June 29, 2015 - link

And that means starting to compete on PP only, like Intel did. That is, if you force others to go to other foundries, you have to be sure you have the best one, or in case TSMC comes up with a better PP (like a 16+nm revision) you have just thrown all your customers to your fab competitors, making double damage (or total one). Or just think if Intel tomorrow suddenly opens to ARM customers in order to saturate its now rusting 14nm machineries. Samsung would be in great trouble after that eventual (and IMHO stupid) move.

Investing in PP is really expensive and there are other foundries capable of doing so. Samsung can't be sure to always be the best one on the market. And invest tons of billions of dollars every year to make sure to be the number one (for SoC of course).

ZeDestructor - Wednesday, July 1, 2015 - link

Samsung is part of a common platform alliance/agreement with GloFo, so while they could lock down and close others out, GloFo would not, so there's little commercial benefit from doing so. They could of course coerce GloFo into doing the same, but that lands them into hot water with regulatory watchdogs like the FTC regarding anti-competitive practices and collusion, which while Samsung wouldn't really mind (no, really), GloFo would.

eh_ch - Monday, June 29, 2015 - link

How will it take for Samsung's process to trickle down to AMD via GloFo? Could it bridge the efficiency gap to nvidia / Intel? Holding out hope that ATI/MD will be competitive once more.

eh_ch - Monday, June 29, 2015 - link

How long will it take, that is

Adding-Color - Monday, June 29, 2015 - link

No, AMD won't have a technology advantage over Nvidia on next gen GPUs. Currently it looks like nvidia will choose Samsung for their next node, and as Samsung and GloFo have some kind of alliance and share processes (glofo licenses some Samsung processes AFAIK), the technology should be very similar for both. Yet AMD should have a small HBM advantage, as they have better relations to hynix (and helped to develop HBM) than nvidia.

jjj - Monday, June 29, 2015 - link

There won't be a HBM advantage from a technological point of view, at best AMD could get slightly better pricing but even that is unlikely since Nvidia has much higher volume. The first gen HBM was late and both Nvidia and AMD had plenty of time to prepare for it. As for the process, we don't really know what foundry each will use and what version of the process. On the GPU side both are more likely to go TSMC or use both. On the CPU side AMD will likely go GloFo but not this early version of the process and Intel might go 10nm not long after AMD has 14nm. On 10nm TSMC and Samsung do seem to be catching up with Intel but doubt AMD will have 10nm early.

fluxtatic - Tuesday, June 30, 2015 - link

Hell, at this point I'd be happy to see AMD at < 28nm