The Intel Core i9-9990XE Review: All 14 Cores at 5.0 GHz

by Dr. Ian Cutress on October 28, 2019 10:00 AM EST

Chromium Compile: Windows VC++ Compile of Chrome

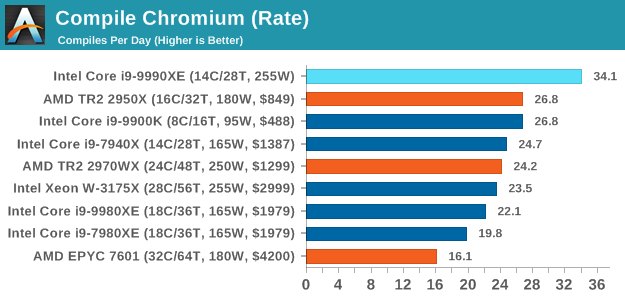

A large number of AnandTech readers are software engineers, looking at how the hardware they use performs. While compiling a Linux kernel is the ‘standard’ compile test for reviewers, our test is a little more varied: we use the Windows instructions to compile Chrome, specifically a Chrome 56 build from March 2017, as that was when we built the test. Google quite handily gives instructions on how to compile on Windows, along with a 400k file download for the repo. This is by far one of our most popular benchmarks, and it is a good measure of core performance, multithreading performance, and memory accesses.

In our test, following Google’s instructions, we use the MSVC compiler and the ninja build tool to manage the compile. As you may expect, the benchmark is variably threaded, with a mix of DRAM requirements that benefit from faster caches. The result we record is the time taken for the compile, which we convert into compiles per day. The benchmark takes anywhere from an hour on a fast high-end desktop processor to several hours on the slowest offerings.

Prior to this test, the two CPUs battling it out for supremacy were the 16-core Ryzen Threadripper 2950X and the 8-core i9-9900K. With six more cores than the i9-9900K, a lot more frequency, and two more memory channels, the Core i9-9990XE plows through this test very easily, performing the compile in 42 minutes and 10 seconds. It is the only processor to finish in under 50 minutes, let alone under 45 minutes.
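As a rough illustration of the compiles-per-day metric, here is a minimal sketch that assumes the conversion is simply the number of seconds in a day divided by the measured compile time. The function name `compiles_per_day` is hypothetical and is not part of our actual test harness.

```python
# Seconds in one day (24 h * 60 min * 60 s).
SECONDS_PER_DAY = 24 * 60 * 60


def compiles_per_day(minutes: int, seconds: int) -> float:
    """Hypothetical helper: convert one compile time into compiles per day.

    Assumes the metric is just SECONDS_PER_DAY / compile time in seconds.
    """
    compile_seconds = minutes * 60 + seconds
    if compile_seconds <= 0:
        raise ValueError("compile time must be positive")
    return SECONDS_PER_DAY / compile_seconds


if __name__ == "__main__":
    # Core i9-9990XE result quoted above: 42 minutes 10 seconds.
    rate = compiles_per_day(42, 10)
    print(f"{rate:.2f} compiles per day")  # prints "34.15 compiles per day"
```

Under the same assumption, the 50-minute and 45-minute marks correspond to 28.8 and 32.0 compiles per day, so a 42 min 10 s compile works out to roughly 34.15.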

145 Comments


Supercell99 - Monday, October 28, 2019

The Democrats have banned LN2 in New York as they have deemed it a climate pollutant.

xrror - Monday, October 28, 2019

No, they haven't, you Republican jackass; the Earth's atmosphere is 78% nitrogen.

eek2121 - Monday, October 28, 2019

Because after a while, the system breaks down under LN2 cooling. There is such a thing as silicon being too cold, you know. Google "Intel cold bug", for example.

ravyne - Monday, October 28, 2019

Have you seen LN2 cooling? It's not really practical for prolonged use: you have to keep the LN2 flowing, you have to vent the gases of the expended LN2, and you have to resupply the LN2 somehow.

But you're missing the most important constraint of all for high-frequency trading, which is the reason they're building this processor into just 1 rack unit: these machines aren't running in some remote data center, they're running in a network closet or very small data center, probably just a floor or two away from a major stock exchange, in the same building. There is only so much space to be had. The space that's available is generally auctioned and can run well into 5 figures per month for a single rack unit. That's why they're building the exotic 1U liquid cooling in the first place; it'd be much easier to cool in even 2 units (there are even off-the-shelf radiators, then).

edzieba - Thursday, October 31, 2019

These machines are installed in exchange-owned and managed datacentres. "No LN2" as a rule would scupper that concept from the start, but even if it were allowed, you would still have the problem of daily shipments of LN2 into a metropolitan centre, failover if a delivery is missed, dealing with large volumes of N2 gas generated in a city centre, etc. Just a logistical nightmare in general.

eek2121 - Monday, October 28, 2019

It's impossible to cool a system 24/7 with LN2.

DixonSoftwareSolutions - Tuesday, October 29, 2019

I think you probably could do something like that. You would want to run it on a beta system in parallel with your production system for a long time to make sure you had the 99.999999% uptime required. You would have to get pretty down and dirty to make it a 24/7 system. Probably a closed loop LN2 system, and I don't even know what kind of machine is required to condense from gas to liquid. You would also probably want heaters on the other components of the motherboard so that only the die was kept at the target low temp, and other components at the correct operating temp. And you would probably have to submerge the entire thing in some dielectric fluid like mineral oil to prevent condensation from building up. It would be expensive no doubt, but if (m/b)illions are on the line, then why not? Also, before embarking on something like this, you would want to make certain that you had tweaked every last bit of your software, both third party software settings and internally authored code, to minimize latency.

willis936 - Monday, October 28, 2019

Judging from your description, I would argue that a traditional PC is a horrible choice for such a problem, given the money at stake. They should be spinning custom ASICs that have the network stack and logic all put together. Even going through a NIC across a PCIe bus and into main memory and back out again is burning thousands of nanoseconds.

29a - Monday, October 28, 2019

I'm also wondering why they don't create custom silicon for this.

gsvelto - Monday, October 28, 2019

They do; not all HFT trading houses use software running on COTS hardware. Depending on where you go, you can find FPGAs and even ASICs. However, not all of them have the expertise to move to hardware solutions; many are tied to their internal software, and as such they will invest in the fastest COTS hardware money can buy.