The Intel Core i9-9990XE Review: All 14 Cores at 5.0 GHz

by Dr. Ian Cutress on October 28, 2019 10:00 AM EST

Chromium Compile: Windows VC++ Compile of Chrome

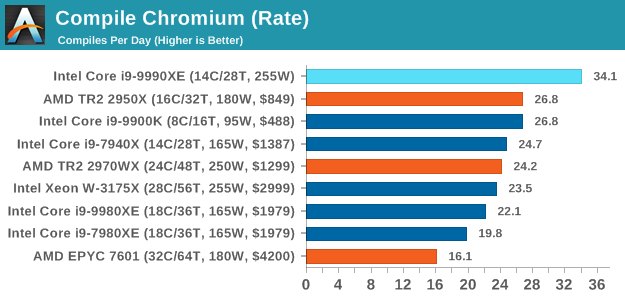

A large number of AnandTech readers are software engineers, looking at how the hardware they use performs. While compiling a Linux kernel is ‘standard’ for reviewers who often run compile tests, our test is a little more varied: we use the Windows instructions to compile Chrome, specifically a Chrome 56 build from March 2017, as that was when we built the test. Google quite handily gives instructions on how to compile with Windows, along with a 400k file download for the repo. This is by far one of our most popular benchmarks, and is a good measure of core performance, multithreading performance, and also memory accesses.

In our test, using Google’s instructions, we use the MSVC compiler and ninja developer tools to manage the compile. As you may expect, the benchmark is variably threaded, with a mix of DRAM requirements that benefit from faster caches. Data procured in our test is the time taken for the compile, which we convert into compiles per day. The benchmark takes anywhere from an hour on a fast single high-end desktop processor to several hours on the slowest offerings.

Prior to this test, the two CPUs battling it out for supremacy were the 16-core Ryzen Threadripper 2950X, and the 8-core i9-9900K. By adding six more cores, a lot more frequency, and two more memory channels, the Core i9-9990XE plows through this test very easily, performing the compile in 42 minutes and 10 seconds, and is the only processor to break the 50 minute mark, let alone the 45 minute mark.
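As a rough illustration of how a compile time maps to the compiles-per-day figure, here is a minimal Python sketch. It assumes the conversion is simply the number of seconds in a day divided by the compile time in seconds; the exact formula is not spelled out in the text, and the function name `compiles_per_day` is hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def compiles_per_day(minutes: int, seconds: int = 0) -> float:
    """Convert one compile's wall-clock time into compiles per day.

    Assumption: back-to-back compiles with no idle time in between.
    """
    elapsed = minutes * 60 + seconds
    if elapsed <= 0:
        raise ValueError("compile time must be positive")
    return SECONDS_PER_DAY / elapsed


if __name__ == "__main__":
    # The 42 min 10 s result quoted above, plus the 50 and 45 minute marks.
    for label, (m, s) in {"42m10s": (42, 10), "50m": (50, 0), "45m": (45, 0)}.items():
        print(f"{label}: {compiles_per_day(m, s):.2f} compiles per day")
```

Under that assumption, 42 minutes and 10 seconds works out to about 34.15 compiles per day, against 28.8 at the 50 minute mark and 32.0 at the 45 minute mark.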

145 Comments

Sivar - Monday, October 28, 2019 - link

Why such an angry statement?

14 is a very respectable number of cores. 14 at 5GHz is a world exclusive.

I wouldn't even call this a product -- more of a hand-picked specialty part auction, which is perfectly reasonable (if uncommon) for any manufacturer to do. The fact that the parts sold indicates the demand is there. Why ignore the demand?

Spunjji - Wednesday, October 30, 2019 - link

The fact that they sold very few of them indicates that the demand is barely there.

FunBunny2 - Monday, October 28, 2019 - link

"Stories of companies spending 10s of millions to implement line-of-sight microwave transmitter towers to shave off 3 milliseconds from the latency time is a story I once heard."

There was reporting, mainstream source (Lewis: https://www.telegraph.co.uk/finance/newsbysector/b... that a broker(s) installed a fiber line from the Chicago office to an exchange in NJ.

“It needed its burrow to be straight, maybe the most insistently straight path ever dug into the earth. It needed to connect a data centre on the South Side of Chicago to a stock exchange in northern New Jersey. Above all, apparently, it had to be secret," Mr Lewis said.

bji - Monday, October 28, 2019 - link

I call BS on that story. Why would you spend hundreds of millions (it must have cost at least that right?) to dig a straight 800+ mile tunnel between Chicago and NYC to get a 13 ms latency just so you could be destroyed by offices in NYC with 5 ms latency. Makes no sense. Your only choice is to move physically close to the source, if lowest latency is the winner then that's the only way to get it and be competitive.

Authors happily embellish existing stories, misrepresent details, and just plain old make sh** up to sell books. And then news outlets happily garbage-in, garbage-out these stories to get hits. I'm pretty sure that's what happened with that "story".

eek2121 - Monday, October 28, 2019 - link

Companies have done it. Hell years ago I INTERVIEWED with a company that did it. It would blow your mind to find out what the financial folks will do to accelerate trading. A large portion of stock market trades are automated and driven by machine learning or predictive algorithms. How do I know, that position I interviewed for years ago (2003) was for a software developer for such an algorithm. I didn't get the job, because I didn't have the skills they were looking for at the time, but we did have a very interesting conversation about how their platform worked. It's fascinating how finance pushes everything forward.

FunBunny2 - Monday, October 28, 2019 - link

" It would blow your mind to find out what the financial folks will do to accelerate trading."

yes, yes it would - here: https://www.marketplace.org/2019/10/07/fight-nyse-...

bji - Monday, October 28, 2019 - link

Yes, I believe that those companies probably often spend lots of money to buy competitive advantages. I am simply stating that they'd not be buying a competitive advantage here (since the real competition is based in NYC and has an insurmountable advantage - the laws of physics not letting signals travel between Chicago and Wall St. faster than 13 ms) so they wouldn't spend the money. They would spend money buying an actual competitive advantage, i.e. offices in NYC.

mode_13h - Tuesday, October 29, 2019 - link

> Why would you spend hundreds of millions (it must have cost at least that right?) to dig a straight 800+ mile tunnel between Chicago and NYC to get a 13 ms latency just so you could be destroyed by offices in NYC with 5 ms latency. Makes no sense. Your only choice is to move physically close to the source, if lowest latency is the winner then that's the only way to get it and be competitive.

When something doesn't seem to make sense, maybe the error is in your understanding of the situation. Did you ever consider that there are financial markets outside of NYC, and that some people might be trading between markets, or using signals from one market to inform trades in others?

Joel Busch - Tuesday, October 29, 2019 - link

This one is easy to answer, because there are two stock exchanges in play. NYSE in New York and CHX in Chicago. If you can send information from one exchange to the other quicker than others then you have an opportunity for arbitrage.

One of my professors is Ankit Singla, he works on c-speed networking, he cited this paper in class https://doi.org/10.1111/fire.12036

They say for example:

"Our analysis of the market data confirms that as of April 2010, the fastest communication route connecting the Chicago futures markets to the New Jersey equity markets was through fiber optic lines that allowed equity prices to respond within 7.25–7.95 ms of a price change in Chicago (Adler, 2012). In August of 2010, Spread Networks introduced a new fiber optic line that was shorter than the pre-existing routes and used lower latency equipment. This technology reduced Chicago–New Jersey latency to approximately 6.65 ms (Steiner, 2010; Adler, 2012)."

I don't have the time to read the whole paper right now, I'll just trust my professor here. If there is actually something wrong with their methodology then I think the world would like to hear it.

rahvin - Monday, October 28, 2019 - link

<<“It needed its burrow to be straight, maybe the most insistently straight path ever dug into the earth. It needed to connect a data centre on the South Side of Chicago to a stock exchange in northern New Jersey. Above all, apparently, it had to be secret," Mr Lewis said>>

That's just a bunch of hogwash. You couldn't dig a straight line from Chicago to Jersey. It's just fancy sounding hogwash meant to convince those without the logic or background to see it for the hogwash it is. It's no more true than Grimm's fairy tales.