Intel 12th Gen Core Alder Lake for Desktops: Top SKUs Only, Coming November 4th

by Dr. Ian Cutress on October 27, 2021 12:00 PM EST

Posted in: CPUs, Intel, DDR4, DDR5, PCIe 5.0, Alder Lake, Intel 7, 12th Gen Core, Z690

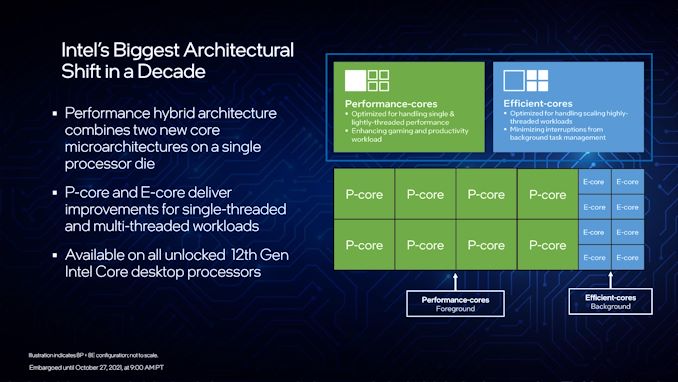

A Hybrid/Heterogeneous Design

Developing a processor with two different types of core is not a new concept – there are billions of smartphones that have exactly that inside them, running Android or iOS, as well as IoT and embedded systems. We’ve also seen it on Windows, cropping up on Qualcomm’s Windows on Snapdragon mobile notebooks, as well as Intel’s previous Lakefield design. Lakefield was the first x86 hybrid design in that context, and Alder Lake is the more mass-market realization of that plan.

A processor with two different types of core disrupts the typical view of how we might assume a computer works. At the basic level, it has been taught that a modern machine is consistent – every core has the same performance, processes the same data at the same rate, has the same latency to memory, the same latency to every other core, and everything is equal. This is a straightforward homogeneous design that’s very easy to write software for.

Once we start considering that not every core has the same latency to memory, and move up to a situation where different parts of a chip do different things at different speeds and efficiencies, we are in a heterogeneous design scenario. In this instance, it becomes more complex to understand what resources are available, and how best to use them. Obviously, it makes sense to make it all transparent to the user.

With Intel’s Alder Lake, we have two types of cores: high performance/P-cores, built on the Golden Cove microarchitecture, and high efficiency/E-cores, built on the Gracemont microarchitecture. Each of these cores is designed for a different optimization point – P-cores have a super-wide performance window and go for peak performance, while E-cores focus on saving power at half the frequency, or lower, where the P-core might be inefficient.

This means that if there is a background task waiting on data, or something that isn’t latency-sensitive, it can work on the E-cores in the background and save power. When a user needs speed and power, the system can load up the P-cores with work so it can finish the fastest. Alternatively, if a workload is more throughput sensitive than latency-sensitive, it can be split across both P-cores and E-cores for peak throughput.
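As a rough illustration of the three cases just described, the Python sketch below maps a task's character to a core type. The `Task` fields and the `place` function are hypothetical names invented for this example; on a real system the decision is made by the operating system scheduler, not by a simple lookup like this.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    P_CORES = "P-cores"
    E_CORES = "E-cores"
    BOTH = "P-cores and E-cores"


@dataclass
class Task:
    # Hypothetical descriptors, invented for illustration only.
    latency_sensitive: bool
    throughput_bound: bool


def place(task: Task) -> Placement:
    """Toy policy mirroring the three cases in the text."""
    if task.throughput_bound and not task.latency_sensitive:
        return Placement.BOTH      # spread wide for peak throughput
    if task.latency_sensitive:
        return Placement.P_CORES   # finish the work as fast as possible
    return Placement.E_CORES       # background work, save power


if __name__ == "__main__":
    print(place(Task(latency_sensitive=False, throughput_bound=False)).value)
```

A background task that is neither latency-sensitive nor throughput-bound lands on the E-cores in this toy model, which matches the power-saving case above.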

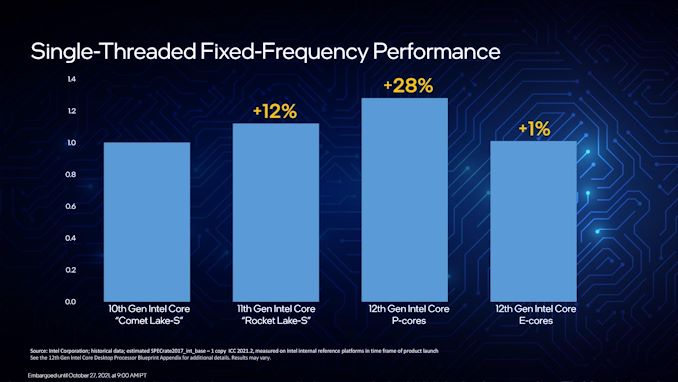

For performance, Intel lists a single P-core as ~19% better than a core in Rocket Lake 11th Gen, while a single E-core can offer better performance than a Comet Lake 10th Gen core. Efficiency is similarly aimed to be competitive, with Intel saying a Core i9-12900K with all 16C/24T running at a fixed 65 W will equal its previous generation Core i9-11900K 8C/16T flagship at 250 W. Much of that comes from the fact that more cores at a lower frequency are more efficient than a few cores at peak frequency (as we see in GPUs); however, an effective 4x performance-per-watt improvement requires deeper investigation in our review.
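The "effective 4x" figure follows directly from the two power numbers quoted above. A minimal sketch of the arithmetic, assuming (as Intel's claim does) that both chips deliver equal performance at those power levels:

```python
# Figures quoted in the text; equal performance is Intel's claim, not a measurement.
new_power_w = 65    # Core i9-12900K at a fixed power limit
old_power_w = 250   # Core i9-11900K

# At equal performance, the perf-per-watt ratio is the inverse of the power ratio.
perf_per_watt_gain = old_power_w / new_power_w
print(f"{perf_per_watt_gain:.2f}x")  # 3.85x
```

So the claim works out to roughly 3.85x, which rounds to the 4x quoted.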

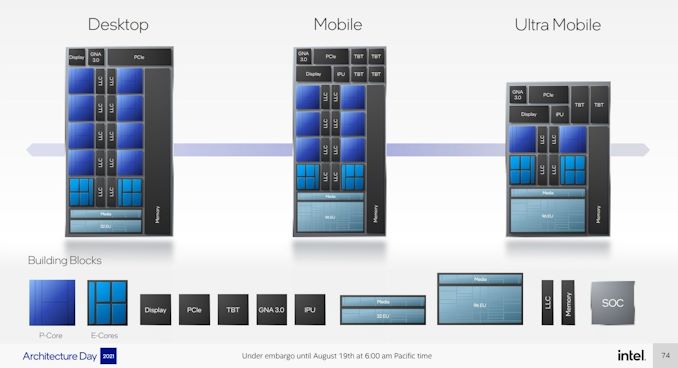

As a result, the P-cores and E-cores look very different. A deeper explanation can be found in our Alder Lake microarchitecture deep dive, but the E-cores end up being much smaller, such that four of them are roughly in the same area as a single P-core. This creates an interesting dynamic, as Intel highlighted back at its Architecture Day: A single P-core provides the best latency-sensitive performance, but a group of E-cores would beat a P-core in performance per watt, arguably at the same performance level.

However, one big question in all of this is how these workloads end up on the right cores in the first place. Enter Thread Director (more on the next page).

A Word on L1, L2, and L3 Cache

Users with an astute eye will notice that Intel’s diagrams relating to core counts and cache amounts are representations, and some of the numbers on a deeper inspection need some explanation.

For the cores, the processor design is physically split into 10 segments.

A segment contains either a P-core or a set of four E-cores, due to their relative size and functionality. Each P-core has 1.25 MiB of private L2 cache, while a group of four E-cores has 2 MiB of shared L2 cache.

This is backed by a large shared L3 cache, totaling 30 MiB. Intel’s diagram shows that there are 10 LLC segments, which should mean 3.0 MiB each, right? However, moving from Core i9 to Core i7, we only lose one segment (one group of four E-cores), so how come 5.0 MiB is lost from the total L3? Looking at the processor tables only adds to the confusion.

Please note that the following is conjecture; we're awaiting confirmation from Intel that this is indeed the case.

It’s because there are more than 10 LLC slices – there are actually 12 of them, and they’re each 2.5 MiB. It’s likely that either each group of E-cores has two slices, or there are extra ring stops for more cache.

Each of the P-cores has a 2.5 MiB slice of L3 cache, with eight cores making 20 MiB of the total. This leaves 10 MiB between two groups of four E-cores, suggesting that either each group has 5.0 MiB of L3 cache split into two 2.5 MiB slices, or there are two extra LLC slices on Intel’s interconnect.

| Alder Lake Cache | | | | | | | |
|---|---|---|---|---|---|---|---|
| AnandTech | Cores P+E/T | L2 Cache (MiB) | L3 Cache (MiB) | IGP | Base W | Turbo W | Price $1ku |
| i9-12900K | 8+8/24 | 8x1.25 2x2.00 | 30 | 770 | 125 | 241 | $589 |
| i9-12900KF | 8+8/24 | 8x1.25 2x2.00 | 30 | - | 125 | 241 | $564 |
| i7-12700K | 8+4/20 | 8x1.25 1x2.00 | 25 | 770 | 125 | 190 | $409 |
| i7-12700KF | 8+4/20 | 8x1.25 1x2.00 | 25 | - | 125 | 190 | $384 |
| i5-12600K | 6+4/16 | 6x1.25 1x2.00 | 20 | 770 | 125 | 150 | $289 |
| i5-12600KF | 6+4/16 | 6x1.25 1x2.00 | 20 | - | 125 | 150 | $264 |
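The thread counts and L2 totals for each core configuration can be cross-checked with a short calculation. This sketch uses the per-core L2 sizes given in the text and assumes two threads per P-core and one per E-core; that split is an assumption not stated in this section. The function names are made up for the example.

```python
P_L2_MIB = 1.25        # private L2 per P-core (from the text)
E_GROUP_L2_MIB = 2.0   # shared L2 per group of four E-cores (from the text)


def threads(p_cores: int, e_cores: int) -> int:
    # Assumption: 2 threads per P-core, 1 thread per E-core.
    return 2 * p_cores + e_cores


def total_l2_mib(p_cores: int, e_cores: int) -> float:
    # E-cores share L2 in groups of four.
    return p_cores * P_L2_MIB + (e_cores // 4) * E_GROUP_L2_MIB


for name, p, e in [("i9-12900K", 8, 8), ("i7-12700K", 8, 4), ("i5-12600K", 6, 4)]:
    print(name, threads(p, e), total_l2_mib(p, e))
```

Under those assumptions the Core i9 comes out at 24 threads and 14 MiB of L2, the Core i7 at 20 threads and 12 MiB, and the Core i5 at 16 threads and 9.5 MiB.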

This is important because moving from Core i9 to Core i7, we lose 4xE-cores, but also lose 5.0 MiB of L3 cache, making 25 MiB as listed in the table. Then from Core i7 to Core i5, two P-cores are lost, totaling another 5.0 MiB of L3 cache, going down to 20 MiB. So while Intel’s diagram shows 10 distinct core/LLC segments, there are actually 12. I suspect that if both sets of E-cores are disabled, so we end up with a processor with eight P-cores, 20 MiB of L3 cache will be shown.
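The conjectured slice layout can be checked against the listed L3 totals. This is a minimal sketch of the hypothesis only (one 2.5 MiB slice per P-core, two per group of four E-cores), not a confirmed description of the silicon; `l3_mib` is a name made up for the example.

```python
SLICE_MIB = 2.5  # conjectured size of each LLC slice


def l3_mib(p_cores: int, e_groups: int) -> float:
    # Conjecture from the text: one slice per P-core, two per E-core group.
    slices = p_cores + 2 * e_groups
    return slices * SLICE_MIB


print(l3_mib(8, 2))  # Core i9: 12 slices
print(l3_mib(8, 1))  # Core i7: 10 slices
print(l3_mib(6, 1))  # Core i5: 8 slices
print(l3_mib(8, 0))  # hypothetical eight P-core part with no E-cores
```

The model reproduces 30, 25, and 20 MiB for the three tiers, and predicts 20 MiB for a part with eight P-cores and no E-cores.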

395 Comments


romrunning - Wednesday, October 27, 2021 - link

I think the universal recommendation will be to use the "High Performance" power plan on all desktops. Then you don't have to worry about the threads being shifted onto E-cores if you really needed it on a P-core.

PEJUman - Wednesday, October 27, 2021 - link

I agree this is easy, but that's not the point.

What I am saying is how much longer will you tolerate this kind of quality? why should I fiddle with power profiles to patch a broken/nonQA'd scheduler. Microsoft does not pay me for beta testing their scheduler, they also failed to pay me to beta testing their thunderbolt 3 and 4 implementations. And to make this worse, This is a product that MS actually sells and tries to make money from.

I do not have to do any of these with on the macbook Air. And the macOS is freaking free, it's licensed to their hardware set.

FYI here is what I currently running, just in case you're wondering:

Desktop: 5950x + 3090 @ 8K on HDMI 2.1 Homebrew

Laptops: Dell Inspiron 1165g7, XPS 1065g7, HP Zbook workstation with i7 6th gen. All of these crashes repeatedly with TB3 and TB4 docks from Dell/HP/OWC. And guess what, the fix is not to let the laptop sleep (sounds familiar?).

Apple: phones, ipads, macbook air with 8th gen i5 + TB3 dock.

These apple products have much higher uptime, almost 20x better than the MS products above. My desktop is by far the most stable, but still a long shot away from the mac. Looking at this article, I expect W11 with Adler lake laptops to go even worse. Intel, AMD and MS need much tighter integration and QA to compete with M1s from Apple. Microsoft, Intel and AMD, if you are reading this. Next year, I am betting that my money will be spent towards a M2 & MacOS powered laptop. Please prove me wrong.

Robberbaron12 - Wednesday, October 27, 2021 - link

The Thunderbolt 3 and 4 Implementation on Windows 10 has been one giant Charlie Foxtrot. We have had endless issues with Dell and HP laptops and desktops with terrible TB software and drivers crashing continuously. M$ and the OEMs blame each other and nothing improves (I'm actually pretty sure its Intels firmware) but Apple can make it work so ????

Spunjji - Thursday, October 28, 2021 - link

Intel and MS are the two consistent factors on the PC side. Could be MS, could just be Intel writing lousy drivers. Hard to say.

Dug - Wednesday, October 27, 2021 - link

The Macbook Air M1 release was a clusterf. Memory management was hosed creating GB's of writes a day to ssd. TB docks did not work and caused kernel panic. External monitor support was non existent, meaning you couldn't control resolution or refresh rate on most popular monitors. I know because I lived through the beta testing and release. So don't go thinking Mac is all grandiose all the time.

PEJUman - Wednesday, October 27, 2021 - link

Is this still a problem today? Will it be fixed by the time M1max/pro hits meaningful quantities in the wild?

Spunjji - Thursday, October 28, 2021 - link

Fixed, AFAIK

Oxford Guy - Thursday, October 28, 2021 - link

The shattering screen hasn't been fixed.

name99 - Thursday, October 28, 2021 - link

"Memory management was hosed creating GB's of writes a day to ssd."

And yet the only people who ever cared about this were people who insisted it meant early death of the SSDs and were looking for something to be wrong with the machines.

I am unaware of a single case where this had any real-world effect, and as far as we know, it may well have been bugs in the SW that was reporting these numbers.

"I know because I lived through the beta testing and release."

What do you expect from beta testing?

If you'd stuck to "I know because I lived through the initial release", and dropped the stupid "OMG my SSD will be dead in three months" hysteria, you'd be a lot more convincing. As it is, you come across as the sort of person who insists on finding things to complain about, and if you can't find something reasonable, you'll find something unreasonable.

Oxford Guy - Thursday, October 28, 2021 - link

Apple has yet to fix the CD player bug I reported back in 2001.

The original Mac OS (last released as OS 9) played audio discs at 1x. OS X has always spun the discs at the maximum read speed of the drive, which is utter incompetence.

I just checked and Catalina is still doing it.

I reported the bug via Apple's OS X report page at least twice, probably four times — over the years. That a $1 trillion company can't manage to get audio CDs to play at the correct speed in decades is beyond appalling.