Intel Unveils Meteor Lake Architecture: Intel 4 Heralds the Disaggregated Future of Mobile CPUs

by Gavin Bonshor on September 19, 2023 11:35 AM EST

Compute Tile: New P (Redwood Cove) and E-cores (Crestmont)

The compute tile is the first client-focused tile to be built on the Intel 4 process technology. It houses the latest-generation P-cores and E-cores, both based on new, updated architectures: the P-cores are officially called Redwood Cove, and the E-cores are Crestmont. Intel also claims that power efficiency is greatly improved over previous generations, helped by its 3D Foveros packaging and by offloading less performance-critical elements such as the SoC, media, and graphics onto other tiles. Intel also uses the same ring fabric to interconnect all the tiles to reduce power and latency penalties across the entire chip.

One thing to note with the new core architectures, Redwood Cove (P-core) and Crestmont (E-core), is that Intel was very light on the finer details. While we got the general blurb of 'it's better than this and has better IPC performance than the last gen,' Intel has omitted details such as L3 cache sizes, whether there's an L4 cache through Intel's "Adamantine" hierarchy, and the decoder widths within the cores. As such, Intel hasn't provided enough detail for us to do a full architectural deep dive of Redwood Cove or Crestmont; this is more of a high-level look at what's new and how it's implemented.

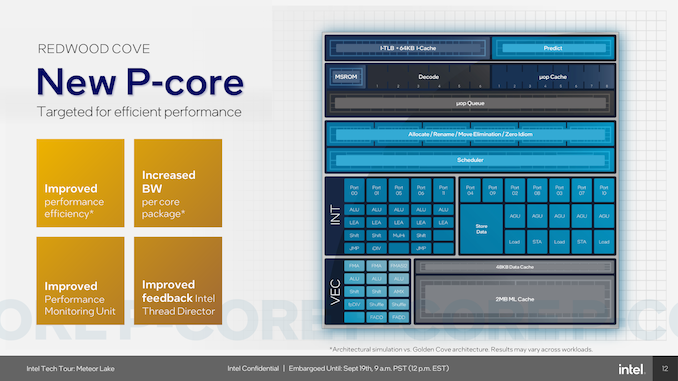

Looking at the changes in Meteor Lake, one of the most notable is the new Redwood Cove P-core. It is the direct successor to the Golden Cove core introduced with the 12th Gen Core (Alder Lake) processors and refined as Raptor Cove in 13th Gen (Raptor Lake), and, as expected, it brings generational IPC gains. Redwood Cove also has increased bandwidth for both cache and memory. The performance monitoring unit has been updated to enhance monitoring, and one of the standout features of the new P-core is the enhanced feedback it provides to Intel's Thread Director, which helps optimize core performance and direct workloads to the right cores.

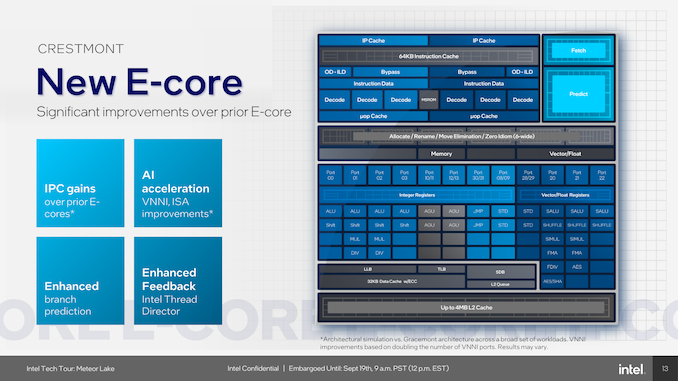

Another inclusion is the new Crestmont-based E-cores, which also benefit from generational IPC gains and keep the CPU-based AI acceleration through Vector Neural Network Instructions (VNNI) seen on Alder Lake (12th Gen) and Raptor Lake (13th Gen). Intel claims improvements over the previous generations, although it hasn't provided anything to substantiate this.

However, Intel does state what it means by improvements: "Architectural simulation vs. Gracemont architecture across a broad set of workloads. VNNI improvements based on doubling the number of VNNI ports. Results may vary." This is a roundabout way of saying Intel has doubled the number of AVX2 VNNI ports, but it hasn't given us any figures, and with Raptor Lake, not all SKUs supported the VNNI instruction set. Intel hasn't told us whether VNNI is now a feature of the Crestmont E-core itself or whether it's, again, SKU-dependent.
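For readers unfamiliar with VNNI, the core operation is a fused multiply-accumulate on 8-bit integers. Below is a minimal Python sketch of the semantics of VPDPBUSD, the AVX-VNNI dot-product instruction: each 32-bit lane multiplies four unsigned bytes by four signed bytes, sums the products, and adds them to a signed 32-bit accumulator. This is a plain emulation for illustration only; the function names are hypothetical, and it says nothing about Crestmont's actual port count or throughput, which Intel hasn't quantified.

```python
def wrap_int32(x: int) -> int:
    """Wrap an arbitrary Python int to signed 32-bit two's complement."""
    x &= 0xFFFFFFFF
    return x - (1 << 32) if x & 0x80000000 else x


def vpdpbusd(acc: list[int], a_u8: list[int], b_s8: list[int]) -> list[int]:
    """Emulate VPDPBUSD: for each 32-bit lane, acc += sum of 4 (u8 * s8) products.

    The non-saturating form wraps on overflow (VPDPBUSDS is the saturating form).
    """
    if len(a_u8) != 4 * len(acc) or len(b_s8) != 4 * len(acc):
        raise ValueError("need exactly 4 byte pairs per 32-bit lane")
    out = []
    for lane, base in enumerate(acc):
        total = base
        for k in range(4):
            i = 4 * lane + k
            if not (0 <= a_u8[i] <= 255 and -128 <= b_s8[i] <= 127):
                raise ValueError("a must be unsigned bytes, b signed bytes")
            total += a_u8[i] * b_s8[i]
        out.append(wrap_int32(total))
    return out


# One lane: 10 + (1*1) + (2*-1) + (3*2) + (4*-2)
print(vpdpbusd([10], [1, 2, 3, 4], [1, -1, 2, -2]))
```

A 256-bit (AVX2-width) register holds eight such lanes, so one instruction performs 32 byte multiplies. Doubling the VNNI ports would, at best, let the core issue twice as many of these instructions per cycle, assuming nothing else bottlenecks.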

This is designed to bolster the user experience in AI applications and AI-based workloads, although the NPU on the SoC tile is predominantly better suited to these. Like the P-cores, the E-cores also benefit from enhanced Thread Director feedback, which provides finer-grained control and optimization. Less intensive workloads can be offloaded onto the new Low Power Island E-cores, which are embedded in the SoC tile.

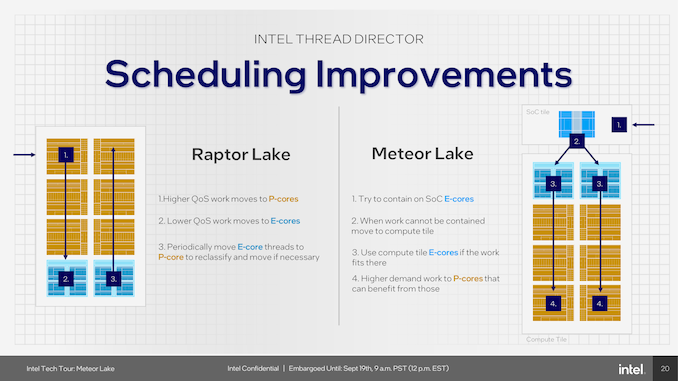

One area where Intel is promising major improvements and optimizations is Thread Director. The Alder Lake (12th Gen) and Raptor Lake (13th Gen) architectures introduced a nuanced approach to scheduling. Under Alder Lake and Raptor Lake, work was assigned a quality-of-service (QoS) ranking: higher-priority, more demanding work was allocated to the P-cores, while lower-ranked work was directed to the E-cores, primarily to save power.

In cooperation with Microsoft Windows, Intel is bringing new enhancements and refinements to Meteor Lake. The SoC tile's LP E-cores represent a third tier of service, and Thread Director will try to keep work there first. If threads need more performance, they can then be moved to the compute tile, first to the full-power E-cores and, at the top, to the P-cores. This gives the chip better overall workload distribution in terms of power efficiency. Moreover, periodically moving highly demanding tasks to the P-cores, where they can benefit from the higher performance levels, makes for a more dynamic approach to thread scheduling.

Overall, this is designed to improve power efficiency and give Meteor Lake more versatility than Raptor Lake in task scheduling. The upshot is that, on paper, these enhancements make Meteor Lake a more efficient platform than Raptor Lake, especially in scenarios requiring rapid adjustments to fluctuating workloads and in lighter workloads that can be offloaded onto the LP E-cores within the SoC tile.
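The tiered escalation described above can be sketched as a simple promote/demote loop. The Python below is a hypothetical illustration only: Intel and Microsoft haven't published Thread Director's policy, so the `demand` signal, the thresholds, and the one-tier-per-tick rule are all assumptions made for clarity. What it does capture is the ordering from the text: new work starts on the SoC tile's LP E-cores, escalates to the compute tile's E-cores, and finally to the P-cores.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Core tiers in ascending performance order (Meteor Lake naming)."""
    LP_E_CORE = 0  # low-power E-cores on the SoC tile
    E_CORE = 1     # full-power Crestmont E-cores on the compute tile
    P_CORE = 2     # Redwood Cove P-cores on the compute tile


@dataclass
class Thread:
    name: str
    demand: float                 # hypothetical 0.0-1.0 load signal
    tier: Tier = Tier.LP_E_CORE   # new work starts on the LP E-cores


# Hypothetical thresholds; the real Thread Director/Windows policy is not public.
PROMOTE_ABOVE = 0.8
DEMOTE_BELOW = 0.3


def rebalance(thread: Thread) -> Tier:
    """Move a thread at most one tier per scheduling tick, then return its tier."""
    if thread.demand > PROMOTE_ABOVE and thread.tier < Tier.P_CORE:
        thread.tier = Tier(thread.tier + 1)
    elif thread.demand < DEMOTE_BELOW and thread.tier > Tier.LP_E_CORE:
        thread.tier = Tier(thread.tier - 1)
    return thread.tier


render = Thread("render", demand=0.95)
for _ in range(3):
    print(render.name, rebalance(render).name)
```

Moving one tier per tick is a deliberate choice in this sketch: it avoids bouncing a bursty thread straight from the LP E-cores to a P-core on a single spike, at the cost of a slower ramp-up for genuinely heavy work.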

Compute Tile: Intel 4 with EUV Lithography

The entire compute tile, including the P-cores and E-cores, is built on the Intel 4 node, making this Intel's first client chip to use EUV lithography. Intel 4 is a key part of Intel's IDM 2.0 strategy, which aims to achieve process parity by 2024 and process leadership by 2025. We have already covered the Intel 4 node in depth in a separate piece, linked below:

Intel 4 Process Node In Detail: 2x Density Scaling, 20% Improved Performance

Intel 4 uses extreme ultraviolet (EUV) lithography, which simplifies manufacturing and improves yield and area scaling. Intel 4 is designed to scale for better power efficiency, and it is also the precursor to Intel 3, which is still in development.

According to the '5 nodes in 4 years' goal on Intel's roadmap, Intel 3 is slated to be manufacturing-ready in H2 2023. What's interesting about Intel 3's place in the roadmap is that it is design-compatible with Intel 4, and as such, Intel 3 is intended to be Intel's long-lived EUV node.

One of the primary benefits of Intel 4 is area scaling. The Intel 4 process offers 2X area scaling for high-performance logic libraries compared with the previous Intel 7 node, a process that was troubled not only by an exceedingly long development cycle but also by less-than-stellar yields. Scaling density this way is vital for fitting more transistors on a chip, which should, in theory, improve the overall performance and efficiency of the silicon. Intel 4 is also optimized for high-performance computing applications and supports both low-voltage (<0.65V) and high-voltage (>1.1V) operation. Intel claims this flexibility yields more than 20% higher performance at iso-power compared with Intel 7, and the process also incorporates high-density Metal-Insulator-Metal (MIM) capacitors, which Intel claims improve power delivery to the chip.

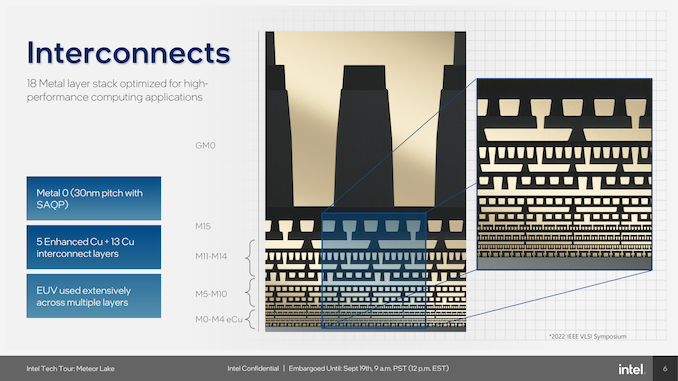

With Intel 4 and EUV, Intel uses a 30 nm fin pitch with self-aligned quad patterning (SAQP) and a 50 nm tungsten gate pitch, scaled down by 0.83x from 54/60 nm on Intel 7. The M0 pitch is also down by 0.75x, from 40 nm to 30 nm, and the HP library height has been reduced greatly, from 408 nm on Intel 7 to 240 nm on Intel 4, a scaling of 0.59x. Moving from four fins to three fins per device is part of what allows that shorter library height on Intel 4 compared with Intel 7.

One key change in Intel 4 is the materials used, with Intel introducing what it calls 'Enhanced Copper'. Although Intel hasn't disclosed the specific composition, Enhanced Copper is essentially copper (Cu) clad with cobalt (Co) and is designed to eliminate high-resistance, high-volume barriers. This combined copper-cobalt metallurgy is used on layers M0 to M4, while layers M5 to M15 are copper at pitches ranging from 50 nm up to 280 nm.

Comparing Intel 4 to Intel 7

| | Intel 4 | Intel 7 | Change |
|---|---|---|---|
| Fin Pitch | 30 nm | 34 nm | 0.88x |
| Contacted Gate Poly Pitch (CPP) | 50 nm | 54/60 nm | 0.83x |
| Minimum Metal Pitch (M0) | 30 nm | 40 nm | 0.75x |
| HP Library Height | 240 nm | 408 nm | 0.59x |
| Area (Library Height x CPP) | 12K nm² | 24.4K nm² | 0.49x |
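The Change and Area figures in the Intel 4 vs. Intel 7 comparison can be reproduced directly from the listed pitches. The quick Python check below assumes the 60 nm variant of Intel 7's CPP, since that is the value the 24.4K nm² area figure corresponds to; the helper name is just for illustration.

```python
def cell_area_nm2(library_height_nm: float, cpp_nm: float) -> float:
    """Area metric from the comparison: HP library height x contacted gate poly pitch (CPP)."""
    return library_height_nm * cpp_nm


# Values in nm; the 60 nm variant of Intel 7's CPP is assumed here.
intel4 = cell_area_nm2(240, 50)
intel7 = cell_area_nm2(408, 60)
area_ratio = intel4 / intel7      # Intel 4 cell area as a fraction of Intel 7's
density_gain = intel7 / intel4    # Intel 4 cells that fit in one Intel 7 cell's area

print(f"Intel 4: {intel4:,.0f} nm^2  Intel 7: {intel7:,.0f} nm^2")
print(f"area ratio {area_ratio:.2f}x, density gain {density_gain:.2f}x")

# The "Change" column is simply new / old for each dimension.
rows = {
    "Fin pitch": (30, 34),
    "Contacted gate poly pitch": (50, 60),
    "M0 pitch": (30, 40),
    "HP library height": (240, 408),
}
for name, (new, old) in rows.items():
    print(f"{name}: {new / old:.2f}x")
```

Intel 7's cell works out to 24,480 nm² (listed as 24.4K), giving a 0.49x area ratio, or about 2.04x the density, which is where the 2X area-scaling claim for high-performance libraries comes from.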

Using extreme ultraviolet (EUV) lithography on Intel 4 represents a major advance in semiconductor fabrication. EUV uses light with a wavelength of around 13.5 nanometers (generated by zapping tin with a laser, no less), which significantly improves the photolithographic process, allowing for higher resolution and better pattern fidelity. The technology requires specialized equipment, including high-precision optics and vacuum chambers, with a single EUV lithography system costing around $150 million (as per Reuters).
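Why does a shorter wavelength help? A standard first-order answer is the Rayleigh resolution criterion, CD = k1 × λ / NA, where CD is the smallest printable half-pitch, λ the wavelength, NA the numerical aperture of the optics, and k1 a process-dependent factor. The sketch below compares 193 nm ArF immersion (NA 1.35) with current 0.33-NA EUV; the k1 = 0.3 value is an assumption, and real processes vary.

```python
def rayleigh_half_pitch_nm(k1: float, wavelength_nm: float, na: float) -> float:
    """Rayleigh criterion: minimum printable half-pitch = k1 * wavelength / NA."""
    if na <= 0:
        raise ValueError("NA must be positive")
    return k1 * wavelength_nm / na


K1 = 0.3  # assumed process factor; 0.25 is the theoretical single-exposure floor

SYSTEMS = {
    "ArF immersion (193 nm, NA 1.35)": (193.0, 1.35),
    "EUV (13.5 nm, NA 0.33)": (13.5, 0.33),
}

for name, (wavelength, na) in SYSTEMS.items():
    hp = rayleigh_half_pitch_nm(K1, wavelength, na)
    print(f"{name}: ~{hp:.1f} nm half-pitch, ~{2 * hp:.0f} nm pitch")
```

Under these assumptions, a single ArF immersion exposure bottoms out around a 43 nm half-pitch (about an 86 nm pitch), while EUV reaches roughly 12 nm (about a 25 nm pitch). Even at the theoretical k1 floor of 0.25, 193 nm immersion only reaches about 36 nm half-pitch, so a 30 nm pitch like Intel 4's M0 (15 nm half-pitch) is out of reach for one 193 nm exposure.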

When it comes to manufacturing chips, layers can be patterned with either single patterning or multi-patterning. Using EUV allows Intel to reduce the number of masks and steps in the fabrication process, with up to 20% fewer masks on Intel 4 than on Intel 7, by replacing multi-patterning steps with a single EUV layer. While each patterning approach presents its own challenges, EUV lets a layer be patterned with just one exposure. This means throughput increases and wafers flow through the process faster. Multi-patterning, by contrast, means more cost and higher variability. A single-pattern EUV process also reduces the number of defects within the silicon.
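The mask savings come from pitch splitting: if one exposure can only print features down to some minimum pitch, a tighter pitch has to be divided across several interleaved exposures, or achieved with spacer-based schemes such as SADP/SAQP, which add deposition and etch steps instead. A rough Python model of that pitch-division factor follows. The single-exposure pitch limits are illustrative assumptions (about 80 nm for 193 nm immersion and about 32 nm for 0.33-NA EUV), not Intel figures, and the function name is hypothetical.

```python
import math

# Illustrative single-exposure pitch limits in nm; assumptions, not Intel data.
SINGLE_EXPOSURE_PITCH_NM = {
    "193i": 80.0,  # ArF immersion, roughly
    "EUV": 32.0,   # 0.33-NA EUV, roughly
}


def pitch_division_factor(target_pitch_nm: float, tool: str) -> int:
    """How many ways a layer's pitch must be split to print it with `tool`.

    1 means single patterning; 2 corresponds to LELE/SADP-style double
    patterning; 4 to SAQP-style quad patterning.
    """
    if target_pitch_nm <= 0:
        raise ValueError("pitch must be positive")
    return math.ceil(SINGLE_EXPOSURE_PITCH_NM[tool] / target_pitch_nm)


for pitch in (80, 50, 40):
    factors = {tool: pitch_division_factor(pitch, tool) for tool in SINGLE_EXPOSURE_PITCH_NM}
    print(f"{pitch} nm pitch: {factors}")
```

In this toy model, 40 nm and 50 nm pitches need double patterning with 193 nm immersion but only a single EUV exposure, which is the kind of substitution behind Intel's claimed mask reduction. It deliberately ignores overlay, cost, and stochastic-defect trade-offs.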

Despite the substantial capital and operational expenditures, the technology offers compelling advantages for Intel 4, such as a 20% reduction in mask count and a 5% reduction in process steps. These efficiencies contribute to better area scaling and yield and make EUV lithography a cornerstone of Intel's processor roadmap as it pursues process leadership. EUV also complements Intel's Advanced Packaging Technologies (APT), such as the Embedded Multi-die Interconnect Bridge (EMIB), and is combined with Foveros 3D packaging, further cementing its role in Intel's chip manufacturing.

107 Comments


Composite - Thursday, September 28, 2023

Totally agree. At the same time, instead of doing a full Intel 4 Meteor Lake chip, shrinking it down to just the compute tile also reduces the size of the silicon and improves yield. Later next year, Intel will also need EUV capacity for Sierra Forest and Granite Rapids. These chips will be much larger than the mobile compute tile and have considerably lower yields... Intel will need every ounce of EUV capacity it has.

tipoo - Tuesday, September 19, 2023

Probably to have as much compute on the N4 capacity that they have. Their substrate also takes much less power connecting the tiles than current AMD designs, and it allows the best node to be used for each part, i.e., if Intel's node wasn't as ideal for the GPU tile as for the CPU tile, etc.

Composite - Thursday, September 28, 2023

I have the same question. At the same time, I was curious about Intel's EUV capacity. Since Intel is a latecomer to EUV and over 50% of EUV machines are at TSMC, does Intel really have the capacity to manufacture a full Intel 4 Meteor Lake chip? Not to mention that the upcoming Sierra Forest and, later on, Granite Rapids will all use EUV capacity. I think the reasonable way is indeed to use EUV only on the most critical part of Meteor Lake, the compute tile, and outsource the rest.

eSyr - Tuesday, September 19, 2023

To avoid the issues they had with the rollout of 14 nm (BDW) and then 10 nm (CNL), I guess, when they were held back by yield on particular parts of the chip, specifically the GPU.

lemurbutton - Tuesday, September 19, 2023

A17 Pro just beat all Intel CPUs except the 13900KS in ST Geekbench 6. A17 Pro uses less than 3W to achieve this, with typical load significantly below 3W. Meanwhile, the 13900KS uses as much as 250W or more.

Intel's Meteor Lake needs to improve by 10x over Raptor Lake just to match what M3 will be able to do.

Irish_adam - Tuesday, September 19, 2023

The 13900KS uses 250 watts on a single core? Got a link for that? I think you'll find that single-core workloads use far, far less. Also remember that benchmarks across ISAs are sketchy at best and outright made up at worst. I mean, just look at how badly games or software can run when ported from one ISA to another; it all really comes down to how well you've made the software run on each architecture.

Makaveli - Tuesday, September 19, 2023

He is an Apple fanboy. Source: Trust me bro!

FWhitTrampoline - Tuesday, September 19, 2023

No, the A17 performance core is only clocked at 3.6/3.7GHz, compared to x86 designs that are clocked up to 2GHz+ higher. So this is not some ESPN-like fanatic statement: since the A14/Firestorm core, Apple's instruction decoder has been at least 8 decoders wide, backed up by loads of execution ports, so Apple's P-cores have been very wide-order superscalar designs since the A14/Firestorm was released!

And the Apple P-cores achieve high IPC at low clocks, compared to x86 designs that have 4/6 instruction decoders and so need higher clocks to make up the IPC deficit in single-thread performance, which is calculated as IPC multiplied by average sustained clock speed.

The lower clocks are where Apple's power savings and longer battery life come from. That, and the A17 Pro and earlier A-series SoCs have loads of specialized heterogeneous compute to offload workloads onto instead of using the CPU or GPU cores, so more power can be saved there for all sorts of specialized workloads. x86 processors/SoCs are just now getting the same sorts of specialized heterogeneous compute IP blocks, but that's relatively immature compared to Apple's SoCs and other ARM-based SoC ecosystems that have been using that specialized heterogeneous compute IP for years now.

GeoffreyA - Thursday, September 21, 2023

Well, it would be interesting to see Intel or AMD make a fixed-width ISA design and see how that stacks up against Apple's. Really, x86 is at a disadvantage because of its variable-width instructions but has still done a fantastic job. Or, I'd like to see Apple design an x86 CPU and see how that holds up against Zen and the rest.

FWhitTrampoline - Thursday, September 21, 2023

There's no logical reason for Apple to go CISC, as an x86 instruction decoder requires many times more transistors to implement than a RISC ISA instruction decoder! So it was easy to fit 8 instruction decoders on the front of the A14/Firestorm core (RISC ISA based). It's easier to go wider when there are relatively fewer instructions, of a fixed length, to implement in an instruction decoder design. That makes it easy to produce a custom, very wide-order superscalar core design that targets high IPC at a lower clock rate, with the SoC's CPU cores clocked well inside their performance/watt sweet spot, and still have the A14 match or get close to x86 cores in single-threaded performance, against x86 core designs that are clocked 2GHz+ higher.

The x86 ISA is too bloated with legacy instructions, and it's not going to be easy to refactor that without taking years. The ARM ISA ecosystem is RISC from the ground up, and even though x86 designers have a RISC-like back end that breaks CISC instructions down into more RISC-like ones, that hardware engine takes more transistors to implement and thus uses more power getting that done. The vast majority of ARM ISA instructions translate 1 to 1 into a single micro-op, and some into a few micro-ops, so how hard is that to decode compared to x86 ISA instructions that mostly generate multiple micro-ops to get all that complex work done? And there's a valid power-usage reason that x86 never made any inroads into the wider tablet/smartphone market.

The thing about ARM/RISC core designs is that they can scale from phones to servers/HPC, whereas CISC designs cannot scale down to as low power as RISC designs! Intel has done a good job at getting close there, but a little too late to matter to the OEMs that really did not want to remain beholden to Intel and x86. And the same can be said now for RISC-V compared to an ARM Holdings that's maybe leaning more towards an x86-like business model, where RISC-V represents total end-user ISA freedom, within reason, as the RISC-V ISA is totally open, with no royalties or encumbrances required or enforced.