Arm Announces Neoverse N1 & E1 Platforms & CPUs: Enabling A Huge Jump In Infrastructure Performance

by Andrei Frumusanu on February 20, 2019 9:00 AM EST

Performance Targets: What Are The Numbers?

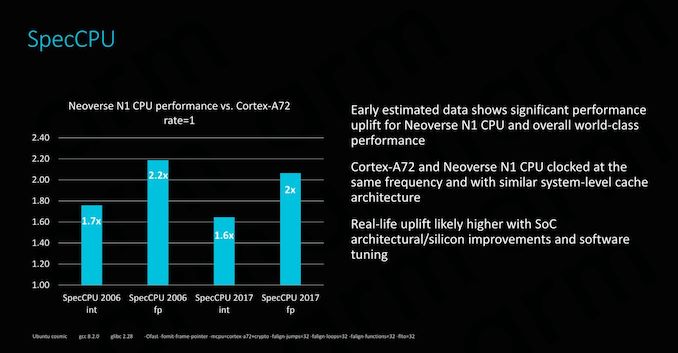

Naturally, all this talk about performance and efficiency needs to be substantiated with some concrete numbers. In the context of today’s announcement, most performance figures disclosed by Arm were relative improvements compared to the A72 Cosmos platform, which might not be the most relevant data-point for placing the N1 in the competitive landscape. However, we also have some more concrete absolute figures that we’ll try to put into context shortly.

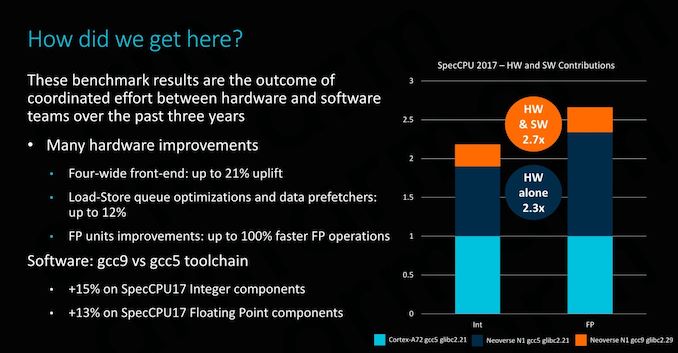

Compared to the A72 at the same frequency, and in a similarly configured system with an SLC, the new N1 outright smashes its predecessor platform and microarchitecture. The figures here represent single-threaded performance in SPEC. In integer workloads we see PPC (performance per clock) and absolute performance gains of 60 to 70%. The floating point benchmarks are even more impressive, with gains ranging from 100 to 120%. The data-points represent modelled and emulated performance estimates; the actual real-life performance improvements will be higher due to other SoC-level improvements as well as software improvements that aren’t available in existing A72 silicon products.

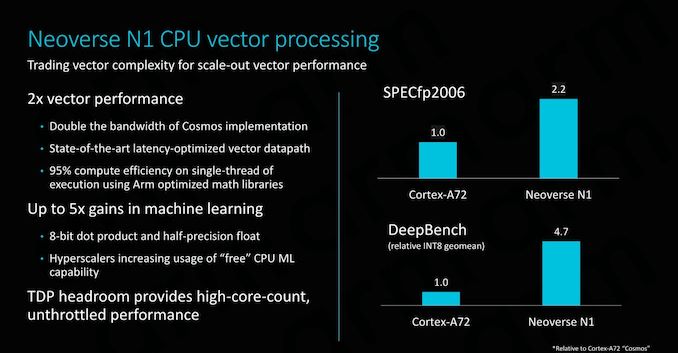

Arm again highlights the very large compute performance improvement compared to existing solutions, achieving beyond 2x performance boosts in vector workloads. Naturally, the N1’s ARMv8.2 ISA implementation also means that it supports 8-bit dot product as well as FP16 half-precision instructions, which are particularly well suited to machine-learning workloads, achieving performance boosts near 5x the predecessor platform.

Overall, Arm’s comparison to the A72 makes sense in that it is the N1’s direct predecessor. However, we have to keep in mind that the Cortex A72 was first introduced back in 2015, with first silicon products being released late that year as well as in 2016, while the new Neoverse N1 in all likelihood isn’t something we’ll be seeing in products for another 12-18 months, resulting in a ~3-4 year time span between the two products.

Arm did also divulge absolute SPEC numbers, and here we can have some more interesting analysis to competing platforms:

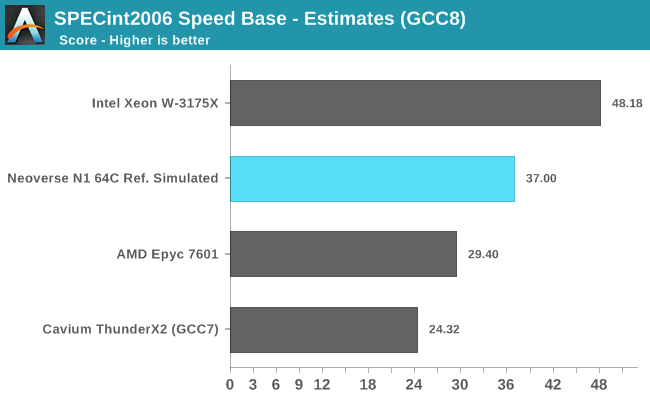

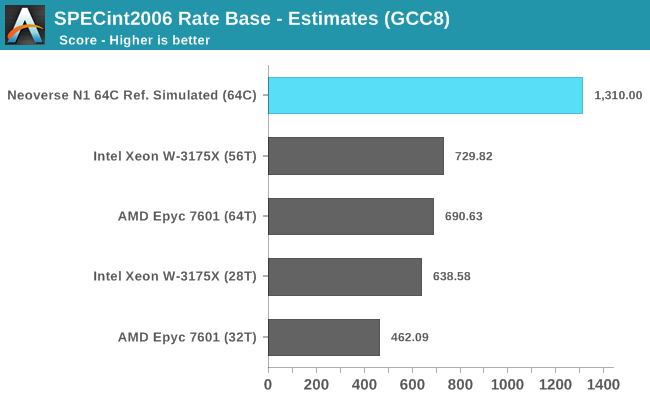

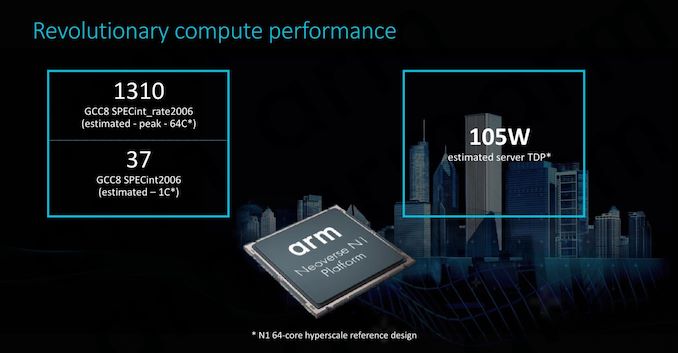

For a Neoverse N1 64-core hyperscale reference design running at about 2.6GHz, Arm proclaims a single-threaded SPECint2006 Speed score of ~37 and an estimated multi-threaded score of 1310. These figures are achieved within a quite low whole-server TDP of only 105W. The figures weren’t run on actual silicon but rather estimated on Arm’s server farm in an emulation environment with RTL.

Arm made a point of noting that its efforts to improve performance for the Arm ecosystem aren’t limited to offering better hardware, but extend to better software as well. Over the last few years Arm has put a lot of effort into improving open-source tools and compilers, such as GCC. Comparing the latest GCC9 version to GCC5, we’re seeing improvements of 13-15% in integer and floating-point workloads. It’s to be noted that the optimisations made here are real-world use-case improvements, and not targeted changes that are meant to improve SPEC scores.

In order to put context around Arm’s numbers, I went ahead and compiled a set of binaries with GCC8 and had Ian run them on Intel’s and AMD’s latest and greatest, a Xeon W-3175X as well as an AMD Epyc 7601. It’s to be noted that the compiler flags weren’t exactly the same: both AnandTech’s and Arm’s builds were running under -Ofast, however Arm also added some minor flags which I haven’t had the chance and time to cross-check, as well as enabling LTO. I’m not too concerned about the flag variations, however LTO will give Arm a 2-3% performance advantage over our internal numbers. It’s also to be noted that Arm’s single-threaded figures are marked as “Peak” scores, meaning each individual workload was run with the best performing compiler flags, while our internal figures are “Base” scores, meaning we’re running the same flags across all binaries and tests.

Edit: 25/02/2019: Arm have reached out to clarify that the performance scores were in fact Base runs and without LTO - the slides in question were mixing things up. Thus we have proper apples-to-apples comparisons in our numbers versus Arm's internal numbers.

As always we have to disclose that the below figures are merely internal estimates as they’re not official SPEC submissions. SPEC CPU 2006 has also been deprecated in favour of SPEC CPU 2017. Arm stated that they’ve shared SPEC CPU 2006 figures as that’s still the industry standard at the moment which gives users and customers the best context, and in the coming year or so they’ll switch over to also sharing SPEC CPU 2017 numbers. As for us at AnandTech, I’ve prepared SPEC CPU 2017 and Ian and I will be adopting it in our benchmark suites for PC/server CPUs as well as mobile SoCs in the coming weeks and months.

In terms of single-threaded performance, the N1 looks to be outright outstanding. With an estimated score of 37, it would beat the most recent and best-performing Arm server CPU, Cavium’s ThunderX2, by a significant margin. It’s to be noted that the real-world performance difference would be smaller than depicted in the above figures: GCC8 notably improved loop vectorisation in 456.hmmer which will give it a 1-2% overall score boost, and of course we have to take into account 2-3% difference due to Arm’s different compiler flags.
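SPEC CPU 2006 composite scores are the geometric mean of the per-benchmark ratios, which is why a sizeable gain in a single sub-test such as 456.hmmer moves the overall score by only a percent or two. The snippet below is a rough sketch of that relationship, assuming the 12-benchmark SPECint2006 suite; the function names are illustrative, and the implied hmmer speedups are derived from the 1-2% figure above rather than measured.

```python
import math

NUM_INT_BENCHMARKS = 12  # SPECint2006 consists of 12 integer benchmarks


def geomean(ratios):
    """Geometric mean, the way SPEC combines per-benchmark ratios."""
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


def required_single_speedup(overall_gain, n=NUM_INT_BENCHMARKS):
    """Speedup needed in ONE benchmark to lift the geomean by overall_gain."""
    return (1 + overall_gain) ** n


for overall_gain in (0.01, 0.02):
    speedup = required_single_speedup(overall_gain)
    # Sanity check: 11 unchanged benchmarks plus one faster one.
    check = geomean([1.0] * (NUM_INT_BENCHMARKS - 1) + [speedup])
    print(f"overall +{overall_gain:.0%} needs a single-benchmark "
          f"speedup of {speedup:.3f}x (geomean check: {check:.3f})")
```

Under these assumptions, a 1-2% composite gain corresponds to roughly a 13-27% speedup in the one affected benchmark.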

Intel’s W-3175X is hardly the most representative hyperscaler CPU, however it gives context as to what Intel’s top-end single-threaded performance in their best multi-core CPUs is. As a reminder, the W-3175X has a single-threaded boost clock of 4.5GHz, significantly above what we see in server SKUs such as the Xeon 8180. AMD’s Epyc 7601 is a more representative data-point for what a hyperscale design such as the N1 would compete against. Again as a reminder, this is a 3.2GHz single-threaded boost clock on AMD’s first generation Zen core.

What surprised me the most about Arm’s quoted ST score of ~37 is that it’s significantly higher than what we measure on the Cortex A76, which scores about 26 on actual hardware. Software and compiler considerations aside, one of the explanations for this huge 42% performance discrepancy could be the N1’s much better memory and cache system. Here the full system bandwidth is 6x higher than on mobile SoCs, and naturally in a single-threaded workload the thread would have full access to the Neoverse N1’s 64MB SLC, a whopping 16x bigger than the L3 in current mobile Cortex A76 designs. If the performance difference is indeed explained by the memory subsystem, it just goes to show how important it is to the performance scaling of a core.
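The percentages in the paragraph above follow directly from the quoted figures. This is a minimal sketch using only numbers stated in the text; the variable names are illustrative, and the implied mobile L3 size is derived from the 16x ratio rather than quoted directly.

```python
# Figures quoted in the text (Arm's estimate vs. our measured mobile result).
n1_st_estimate = 37   # Arm's projected N1 single-threaded SPECint2006 score
a76_measured = 26     # approximate Cortex A76 score on actual mobile hardware

gap = n1_st_estimate / a76_measured - 1
print(f"N1 estimate vs. measured A76: +{gap:.0%}")

# Cache comparison: a 64MB SLC described as 16x the mobile L3.
n1_slc_mb = 64
slc_to_l3_ratio = 16
implied_mobile_l3_mb = n1_slc_mb / slc_to_l3_ratio
print(f"implied mobile L3 size: {implied_mobile_l3_mb:.0f}MB")
```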

Switching over to multi-threaded workloads represented by SPECrate2006, we have to note that this is a best-case scaling scenario for all platforms as there is no serialisation or inter-thread communication, as the test suite simply runs multiple processes in parallel. Even with this in mind, Arm’s projected results for a N1 64 core design are just outright impressive considering the fact that we’re talking about TDPs much smaller than any of AMD and Intel’s solutions, creating a performance and efficiency gap that I have a hard time seeing the x86 solutions being able to compete against.

We have to remember that we’re comparing a 64 core platform against AMD and Intel’s current 32/28 core platforms. A fairer comparison would be AMD’s upcoming Rome with 64 CPU cores. Here, even if AMD manages to outright double multi-threaded performance and match Arm’s projected MT numbers, I don’t see them being able to lower the TDP at the same time to match Arm’s estimated 105W target (the Epyc 7601 has a TDP of 180W; Rome details haven’t been announced yet).
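To make the efficiency argument a little more concrete, the TDP figures quoted here can be turned into a rough score-per-watt number. This is a back-of-the-envelope sketch rather than a measurement: it assumes TDP is a fair stand-in for actual power draw (it often isn’t), and it only uses numbers stated in the text, so no Epyc or Xeon score is plugged in.

```python
# Figures quoted in the text; all N1 numbers are Arm's projections.
n1_rate_score = 1310    # projected SPECint2006 Rate score, 64-core N1 design
n1_tdp_w = 105          # Arm's estimated TDP target
epyc_7601_tdp_w = 180   # TDP of AMD's current 32-core part

# Score per TDP watt for the projected N1 system.
n1_score_per_watt = n1_rate_score / n1_tdp_w

# How much larger the Epyc 7601's power budget is.
tdp_ratio = epyc_7601_tdp_w / n1_tdp_w

# Rate score a 180W part would need to match the N1's score per TDP watt.
breakeven_score = n1_score_per_watt * epyc_7601_tdp_w

print(f"N1 score per TDP watt: {n1_score_per_watt:.1f}")
print(f"Epyc 7601 TDP vs. N1 target: {tdp_ratio:.2f}x")
print(f"break-even Rate score at 180W: {breakeven_score:.0f}")
```

Under these assumptions, a 180W part would need a Rate score of roughly 2250 to match the N1’s projected score per TDP watt.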

SPEC’s Rate score scales linearly with the instance count. In this case, if we divide Arm’s 1310 figure by the 64 cores of the system, we get a per-instance score of around 20.5, which seems much more realistic and in line with the Cortex A76 results we measure on current mobile devices.
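The per-instance arithmetic can be checked in a few lines. This is a minimal sketch using only the figures quoted in this article; the variable names are illustrative, and the "retained" percentage is a derived number, not something Arm disclosed.

```python
# Figures quoted in the article (Arm's estimates, not official SPEC submissions).
rate_score = 1310      # projected SPECint2006 Rate score, 64-core N1 design
instances = 64         # one benchmark copy per core
st_speed_score = 37    # Arm's quoted single-threaded Speed score (approximate)

# Rate scales linearly with instance count, so dividing gives a per-copy score.
per_instance = rate_score / instances
print(f"per-instance score: {per_instance:.1f}")

# Share of the single-threaded score each copy keeps with all cores loaded.
retained = per_instance / st_speed_score
print(f"retained vs. ST score: {retained:.0%}")
```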

Arm’s performance predictions for the Cortex A76 were quite spot on compared to what we measured on actual devices. We are thus more inclined to give Arm credence and the benefit of the doubt with regard to today’s projected Neoverse N1 scores. The figures do make sense, and are in line with what we saw the microarchitecture achieve in mobile.

Naturally we shouldn’t come to any conclusion until we have the actual hardware in our hands, but the presented figures are certainly promising if they can be realised by vendors implementing Neoverse N1 systems.

101 Comments

WinterCharm - Wednesday, February 20, 2019 - link

There's a gigantic Arm vs x86/64 battle brewing for the entire computer industry. ARM is just more efficient at every level, and if software is properly optimized it performs brilliantly.

eva02langley - Wednesday, February 20, 2019 - link

However, it doesn't have the raw power required for many fields like scientific, compute and research. The core-count is also a huge factor in the upcoming future and unless you develop a chiplet approach, ARM is going to face the same issue of monolithic chips.

The next chiplet evolution will require stacking. The future is way more related to modularity than the chip architecture. Don't get me wrong, the more advancement, the better for everyone, but I don't believe ARM is going to render x86 obsolete, however I believe multi-chips SoC are going to render monolithic chip obsolete in the computer world.

SarahKerrigan - Wednesday, February 20, 2019 - link

Sure it does. There are ARM supercomputers, and this very article shows an N1 core outperforming Zen on single-thread, and both Zen and SKL-SP on throughput.

HStewart - Wednesday, February 20, 2019 - link

I think you are forgetting the very nature of RISC (Arm) vs CISC (x86) architectures. By the nature of the design of RISC (reduced instruction set), it takes more instructions to execute the same operation than CISC. For simple stuff RISC can likely do better but remember also modern x86 based CPUs also break down more complex instructions into simpler instructions so they can run on multiple pipelines.

SarahKerrigan - Wednesday, February 20, 2019 - link

Dude, I work in the semi industry, and I've designed pipelined cores. Saying "ARM's workload-demonstrated higher performance doesn't matter because x86 is CISC" is idiotic.

SPEC isn't "simple stuff." It is a selection of extremely compute-intensive workstation loads, one that the whole industry - including Intel - uses to demonstrate comparative performance.

HStewart - Wednesday, February 20, 2019 - link

The biggest thing I found that seems like misinformation is the statement that these are estimates and this chip is simulated, which tells me they don't need the real numbers.

All I am saying is that CISC instructions can do more than RISC instructions per instruction, and it depends on the compiler to take advantage of those instructions. Please note I never stated it does not matter and that was in your words. I just mention considerations need to take into account the different architectures and the fact they are comparing future simulated designs to last year's designs.

Andrei Frumusanu - Wednesday, February 20, 2019 - link

> All I am saying is that CISC instructions can do more than RISC instructions per instruction

Nobody cares. If the performance per clock is same or higher, you're just arguing about semantics.

Internally CISC processors break things down into RISC like µOps anyway.

ZolaIII - Wednesday, February 20, 2019 - link

@Andrei Frumusanu what would be the estimated size of an A55 core with a similar amount of cache as on the presented E1 on 7nm lithography? I am very curious about that one. Also a comparison to the A72 & A73 would be a good thing as ARM claims it reaches their level of performance. It's a very interesting first born (SMT) and a much needed one.

zmatt - Wednesday, February 20, 2019 - link

When people talk about complex instructions they don't mean something like find the derivative of x^2. They mean something like a conditional move operation. The speed advantages on paper between RISC and CISC are in theory a wash. This is because while CISC can conceivably do more in an instruction, RISC can do more instructions per clock generally. In the real world the simplicity of RISC means usually, all other things being equal, the chips are simpler and can run higher clocks, draw less power and generate less heat for a given level of performance.

x86 chips haven't actually been CISC since the mid 90's. Both Intel and AMD have been making chips that take the CISC instructions and run them through an instruction decoder that then hands RISC instructions to the actual cpu. Yes this does incur some overhead but it frees up cpu design quite a bit without being so closely tied to backwards compatibility.

The fact that modern x86 chips ultimately are actually executing code as reduced instruction sets shows you don't understand the concept.

Wilco1 - Wednesday, February 20, 2019 - link

x86 is still a CISC ISA irrespectively of how it executes instructions. Note that compilers predominantly use the simpler instructions, rather than the microcoded instructions and that's why it's possible for x86 to be fast at all.