Real-world virtualization benchmarking: the best server CPUs compared

by Johan De Gelas on May 21, 2009 3:00 AM EST. Posted in IT Computing

vApus Mark I: the choices we made

vApus Mark I uses only Windows guest OS VMs, but we are also preparing a mixed Linux and Windows scenario. vApus Mark I uses four VMs with four server applications:

- The Nieuws.be OLAP database, based on SQL Server 2008 x64 running on Windows 2008 64-bit, stress tested by our in-house developed vApus test.

- Two MCS eFMS portals running PHP and IIS on Windows 2003 R2, stress tested by our in-house developed vApus test.

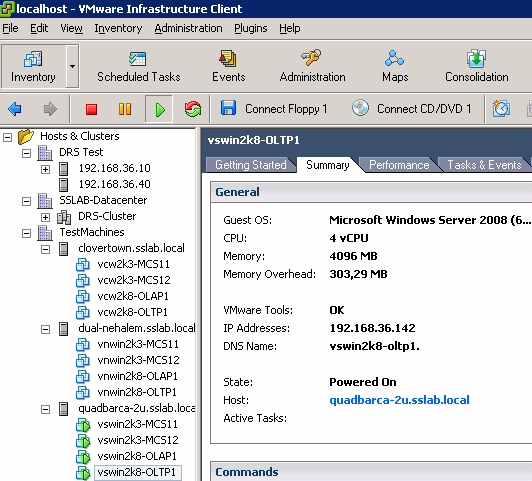

- One OLTP database, based on Oracle 10G Calling Circle benchmark of Dominic Giles.

We took great care to make sure that the benchmarks start, run under full load, and stop at the same moment. vApus is capable of breaking off a test when another is finished, or repeating a stress test until the others have finished.
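The two synchronization modes described above (breaking off a test, or repeating it until the others finish) can be illustrated with a small scheduling sketch. This is not vApus code; the names `Policy`, `StressTest`, and `plan`, and all durations, are hypothetical and only show the idea of aligning every test to one reference run.

```python
import math
from dataclasses import dataclass
from enum import Enum


class Policy(Enum):
    BREAK_OFF = "break_off"  # run once, cut short when the reference test ends
    REPEAT = "repeat"        # rerun until the reference test ends


@dataclass
class StressTest:
    name: str
    pass_seconds: float  # duration of one full pass (made-up value)
    policy: Policy


def plan(reference_seconds, tests):
    """Map each test name to (passes_started, seconds_under_load)."""
    schedule = {}
    for t in tests:
        if t.policy is Policy.REPEAT:
            # Start as many passes as needed to cover the reference run.
            passes = math.ceil(reference_seconds / t.pass_seconds)
        else:
            passes = 1
        # Whatever is still running is stopped when the reference ends.
        seconds = min(passes * t.pass_seconds, reference_seconds)
        schedule[t.name] = (passes, seconds)
    return schedule


if __name__ == "__main__":
    tests = [
        StressTest("oltp", 250, Policy.REPEAT),
        StressTest("web", 900, Policy.BREAK_OFF),
    ]
    for name, (passes, seconds) in plan(600, tests).items():
        print(f"{name}: {passes} pass(es), {seconds}s under load")
```

Note that a `BREAK_OFF` test whose single pass is shorter than the reference run would still go idle early in this sketch, which is exactly the case where repeating is the better choice.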

The OLAP VM is based on the Microsoft SQL Server database of the Flemish/Dutch Nieuws.be site, one of the newest web 2.0 websites launched in 2008. Nieuws.be uses a 64-bit SQL Server 2008 x64 database on top of Windows 2008 Enterprise RTM (64-bit). It is a typical OLAP database, with more than 100GB of data consisting of a few hundred separate tables. 99% of the load on the database consists of selects, and about 5% of these are stored procedures. Network traffic is 6.5MB/s average and 14MB/s peak, so our Gigabit connection still has a lot of headroom. DQL (Disk Queue Length) is at 2.0 in the first round of tests, but we only record the results of the subsequent rounds where the database is in a steady state. We measured a DQL close to 0 during these tests, so there is no tangible impact from the storage system. The database is warmed up with 50 to 150 users. The results are recorded while 250 to 700 users hit the database.

The MCS eFMS portal, a real-world facility management web application, has been discussed in detail here. It is a complex IIS, PHP, and FastCGI site running on top of Windows 2003 R2 32-bit. Note that these two VMs run in a 32-bit guest OS, which impacts the VM monitor mode.

Since OLTP testing with our own flexible stress testing software is still in beta, our fourth VM uses a freely available test: "Calling Circle" of the Oracle Swingbench Suite. Swingbench is a free load generator designed by Dominic Giles to stress test an Oracle database. We tested the same way as we have tested before, with one difference: we use an OLTP database that is only 2.7GB in size (instead of 9.5GB). Previously, we used a 9.5GB database to make sure that locking contention didn't kill scaling on systems with up to 16 logical CPUs. In this case, 2.7GB is enough as we deploy the database on a 4 vCPU VM. Keeping the database relatively small allows us to shrink the SGA size (Oracle buffer in RAM) to 3GB (normally it's 10GB) and the PGA size to 350MB (normally it's 1.6GB). Shrinking the database ensures that our VM is content with 4GB of RAM. Remember that we want to keep the amount of memory needed low so we can perform these tests without needing the most expensive RAM modules on the market. A Calling Circle test consists of 83% selects, 7% inserts, and 10% updates. The OLTP test runs on Oracle 10g Release 2 (10.2) 64-bit on top of Windows 2008 Enterprise RTM (64-bit).
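As a quick sanity check on the sizing above, the memory arithmetic can be written out. This is only a back-of-the-envelope sketch: the function name `headroom_mb` is made up, and it assumes 1GB = 1024MB and that SGA plus PGA are the only large Oracle allocations.

```python
MB_PER_GB = 1024  # assumption: binary gigabytes


def headroom_mb(vm_ram_gb, sga_gb, pga_mb):
    """RAM (in MB) left for the guest OS and other processes after SGA and PGA."""
    return vm_ram_gb * MB_PER_GB - sga_gb * MB_PER_GB - pga_mb


# Statement mix of the Calling Circle test as quoted in the article.
CALLING_CIRCLE_MIX = {"select": 83, "insert": 7, "update": 10}

if __name__ == "__main__":
    # Shrunken configuration: 3GB SGA and 350MB PGA inside a 4GB VM.
    print("headroom with small SGA/PGA:", headroom_mb(4, 3, 350), "MB")
    # Usual configuration (10GB SGA, 1.6GB PGA) would not fit in 4GB.
    print("headroom with usual SGA/PGA:", headroom_mb(4, 10, 1.6 * MB_PER_GB), "MB")
    print("mix adds up to", sum(CALLING_CIRCLE_MIX.values()), "%")
```

With the shrunken settings a few hundred megabytes remain for Windows and the Oracle processes, while the usual settings come out far below zero, which is why the smaller database matters for a 4GB VM.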

Below is a small table that gives you the "native" characteristics that matter for virtualization in each test. (Page management is still being researched.) By "native" we mean the characteristics measured with perfmon while running on the native OS (Windows 2003 and 2008 server).

Native Performance Characteristics

| Native Application / VM | Kernel Time | Typical CPU Load | Interrupts/s | Network | Disk I/O | DQL |
|---|---|---|---|---|---|---|
| Nieuws.be / VM1 | 0.65% | 90-100% | 3000 | 1.6MB/s | 0.9MB/s | 0.07 |
| MCS eFMS / VM2 & 3 | 8% | 50-100% | 4000 | 3MB/s | 0.01MB/s | 0 |
| Oracle Calling Circle / VM4 | 17% | 95-100% | 11900 | 1.6MB/s | 3.2MB/s | 0.07 |

Our OLAP database ("Nieuws.be") is clearly mostly CPU intensive and performs very little I/O besides a bit of network traffic. In contrast, the OLTP test causes an avalanche of interrupts. How much time an application spends in the native kernel gives a first rough indication of how much the hypervisor will have to work. It is not the only determining factor, as we have noticed that a lot of page activity is going on in the MCS eFMS application, which causes it to be even more "hypervisor intensive" than the OLTP VM. From the data we gathered, we suspect that the Nieuws.be VM will be mostly stressing the hypervisor by demanding "time slices" as the VM can absorb all the CPU power it gets. The same is true for the fourth "OLTP VM", but this one will also cause a lot of extra "world switches" (from the VM to hypervisor and back) due to the number of interrupts.
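To make the comparison concrete, the kernel time and interrupt figures from the table can be ranked in a few lines. This is only an illustrative sketch: the dictionary keys and the `rank_by` helper are made up, and, as noted above, neither metric captures page activity, so the ranking is a first rough indication and not a measure of hypervisor load.

```python
# Figures copied from the native performance table; key names are hypothetical.
NATIVE = {
    "Nieuws.be (VM1)": {"kernel_pct": 0.65, "interrupts_per_s": 3000},
    "MCS eFMS (VM2 & 3)": {"kernel_pct": 8.0, "interrupts_per_s": 4000},
    "Oracle Calling Circle (VM4)": {"kernel_pct": 17.0, "interrupts_per_s": 11900},
}


def rank_by(metric):
    """Return workload names sorted from highest to lowest value of `metric`."""
    return sorted(NATIVE, key=lambda name: NATIVE[name][metric], reverse=True)


if __name__ == "__main__":
    for metric in ("kernel_pct", "interrupts_per_s"):
        print(metric, "->", rank_by(metric))
```

Both rankings put the OLTP workload first, which is why the observation about paging in the MCS eFMS application matters: it is the factor these two columns do not show.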

The two web portal VMs, which sometimes do not demand all available CPU power (4 cores per VM, 8 cores in total), will allow the hypervisor to make room for the other two VMs. However, the web portal (MCS eFMS) will give the hypervisor a lot of work if Hardware Assisted Paging (RVI, NPT, EPT) is not available. If EPT or RVI is available, the TLBs (Translation Lookaside Buffers) of the CPUs will be stressed quite a bit, and TLB misses will be costly.

As the SGA buffer is larger than the database, very little disk activity is measured. It helps of course that the storage system consists of two extremely fast X25-E SSDs. We only measure performance when all VMs are in a "steady" state; there is a warm-up time of about 20 minutes before we actually start recording measurements.
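The steady-state rule can be sketched as a simple filter over timestamped samples. This is an assumption-laden illustration, not the vApus implementation: the sample format `(seconds_since_start, throughput)`, the function names, and the example numbers are all invented; only the 20 minute warm-up comes from the text.

```python
WARMUP_SECONDS = 20 * 60  # warm-up period quoted in the article


def steady_state(samples, warmup=WARMUP_SECONDS):
    """Keep only (seconds_since_start, value) samples taken after the warm-up."""
    return [(t, v) for t, v in samples if t >= warmup]


def mean_steady_throughput(samples, warmup=WARMUP_SECONDS):
    """Average the values of the samples recorded in the steady state."""
    kept = steady_state(samples, warmup)
    if not kept:
        raise ValueError("no samples recorded after the warm-up period")
    return sum(v for _, v in kept) / len(kept)


if __name__ == "__main__":
    # Made-up samples: throughput climbs while caches and buffers fill.
    samples = [(0, 10.0), (600, 50.0), (1200, 100.0), (1800, 110.0)]
    print(mean_steady_throughput(samples))
```

Discarding the warm-up samples keeps the cold-cache ramp from dragging down the reported average.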

66 Comments


binaryguru - Monday, June 1, 2009

It seems to me x86-based virtualization software is getting more and more complicated. Not only is x86 virtualization getting more complicated, it is getting more and more difficult to get reliable performance from it. Let me explain my point.

The industry is clearly trying to do more with less hardware these days. Getting raw VM performance on commodity hardware is getting to a point where there is no predictable way to plan for an efficient VM environment.

Current VM technology is trying to simulate the flexibility and performance of mainframes. To me, this is clearly an impossible goal to achieve with the current or future x86 platform model.

All of the problems the industry is experiencing with VM consolidation do not exist on the mainframe. Running 4 'large' VMs for 'raw' performance? How about running 40 'large' VMs for 'raw' performance? Clearly, we all know that is impossible to achieve with current VM setups.

Now I'm not saying that virtualization is a bad idea; it clearly is the ONLY solution for the future of computing. However, I think that the industry is going about it the wrong way. Server farms are becoming increasingly difficult to manage, never mind the challenge of getting 100s of blade servers to play nice with each other while providing good processing throughput.

This problem was solved about 20 years ago, and yet here we are, struggling again with the "how can I get MORE from my technology investment" scenario.

In conclusion, I think we need to go back to utilizing huge monolithic computing designs; not computing clusters.

mikidutzaa2 - Friday, May 29, 2009

Hello,

It would be useful (if possible) to have latency numbers/response times on the tests as well, because we are rarely interested in throughput on our servers. What we usually care about more is how long it takes the server to respond to user actions.

What is your opinion?

JohanAnandtech - Friday, May 29, 2009

I agree. I admit it is easier for us or any benchmark person to use throughput, as it is immediately comparable (X is 10% faster than Y) and you have only one datapoint. That is why almost [...] Response time, however, can only be understood by drawing curves relative to the current throughput / user concurrency. So yes, we are taking this excellent suggestion into consideration. The trade-off might be that articles get harder to read :-).

mikidutzaa2 - Friday, May 29, 2009

Looking forward to your new articles then, glad to hear :).

The articles don't necessarily have to be harder to read; you could put the detailed graphs on a separate page and maybe show only one response time for a "decent"/medium user concurrency.

Also, I would find it interesting (if you have time) to have the same benchmarks with 2 vCPU machines; I think this is a more common setup for virtualization. Very few people, I think, virtualize their most critical/highly used platforms, at least that's how we do it. We need virtualization for lightly used platforms (i.e. not very many users) but we are still very much interested in response time because the users perceive latency, not throughput.

So the important question is: if you have a virtual server (as opposed to a physical one) will the users notice? If so, by how much is it slower?

Thank you.

RobAm - Tuesday, May 26, 2009

It's good to see some unbiased analysis with respect to virtualization. It's also especially interesting that your workloads (which look much more like real world apps my company runs as opposed to SPECjbb, vmark, vconsolidate) show a much more competitive landscape than VMware and Intel portray. Also, doesn't VMware prohibit benchmarking without their permission? Did they give you permission? Has VMware called offering to re-educate you? :-)

Brovane - Tuesday, May 26, 2009

I was hoping for some benchmarks on the Xeon x7xxx CPUs for the quad-socket Intel boxes. We currently have Dell R900s and we were looking at adding to our ESX cluster. We were debating between the R900 with hex-cores or Xeon x55xx series CPUs in the R710. I see the x55xx series was benchmarked, but nothing on the Xeon MP series, unless I am missing that part of the article.

JohanAnandtech - Tuesday, May 26, 2009

Expect a 24-core CPU comparison soon :-).

Brovane - Tuesday, May 26, 2009

You also might want to do a 12-core comparison. We have found that with a 4-socket box you usually run out of memory before you run out of CPU power. With the R900 having 32 DIMM sockets, the R900s we purchased last year have 64GB of RAM and just use 2x 2.93GHz CPUs; we max out memory before CPU easily in our environment. Since VMware licensing and Data Center licensing is done per socket, we only populate 2 of the sockets with CPUs and this seems to do great for us. You basically double your licensing costs if you go with all 4 sockets occupied. Just a thought as to how sometimes virtualization is done in the real world. There is such a price premium for 8GB DIMMs that it isn't worth it to put 256GB in one box with all 4 sockets occupied. The 4GB DIMMs did reach price parity this year, so we were looking at going for 128GB of memory on our new R900s; however, Intel also released hex-core, so we still don't see much reason to occupy all 4 sockets.

yasbane - Tuesday, May 26, 2009

I know positive feedback is always appreciated for the hard work put in, but it seems very rare that we see any non-Microsoft benchmarks for server stuff these days on Anandtech. Is there any particular reason for this...? I don't mean to carp, but I recall the days when non-Microsoft technologies actually got a mention on Anandtech. Sadly, we don't seem to see that anymore :(

Cheers

JohanAnandtech - Tuesday, May 26, 2009

Yasbane, my first server testing articles (DB2, MySQL) were all pure Linux benches. However, we have moved on to a new kind of real-world benchmarks and it takes a while to master the new benchmarks we have introduced. Running Calling Circle and Dell DVD Store posed more problems on Linux than on Windows: we have lower performance, a few weird error messages and so on. In our lab, about 50% of the servers are running Linux (and one odd machine is running OS X and another Solaris :-) and we definitely would love to see some serious Linux benchmarking again. But it will take time.

Xen benchmarks are happening as I write this BTW.