A Quick Look at OCZ's RevoDrive x2: IBIS Performance without HSDL

by Anand Lal Shimpi on November 4, 2010 1:05 AM EST

Posted in: Storage, SSDs, OCZ, RevoDrive, RevoDrive x2

Random Read/Write Performance

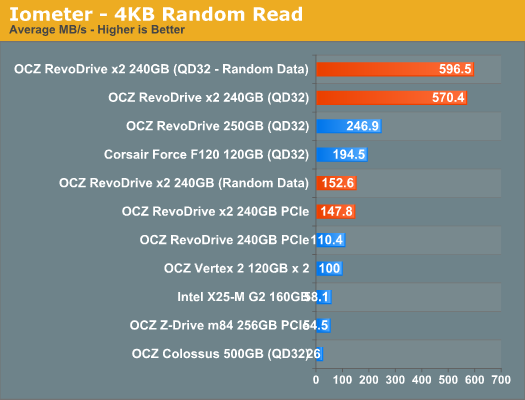

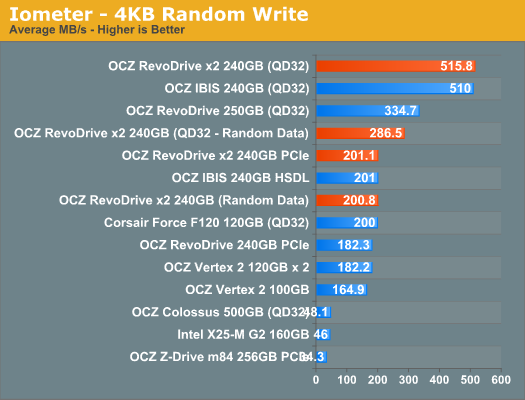

Our random tests use Iometer to sprinkle random reads/writes across an 8GB span of the drive for 3 minutes, roughly approximating the random workload a high-end desktop/workstation would see.
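To make the access pattern concrete, here is a minimal sketch of how block-aligned random offsets within a fixed span could be generated. This is not Iometer's actual implementation; the function name `random_offsets`, the seed parameter, and the choice of binary gigabytes are assumptions made for illustration, and the 4KB block size is taken from the transfer size quoted with the results.

```python
import random

def random_offsets(span_bytes, block_bytes, count, seed=None):
    """Return `count` block-aligned byte offsets chosen uniformly within a span.

    Hypothetical helper for illustration only; Iometer's own generator may differ.
    """
    rng = random.Random(seed)
    blocks_in_span = span_bytes // block_bytes
    # Pick a random block index, then convert it back to a byte offset.
    return [rng.randrange(blocks_in_span) * block_bytes for _ in range(count)]

GB = 1024 ** 3  # assumption: binary gigabytes
offsets = random_offsets(span_bytes=8 * GB, block_bytes=4096, count=5, seed=0)
print(offsets)
```

Every offset lands on a 4KB boundary somewhere inside the 8GB span, so consecutive requests have no locality for the drive to exploit.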

We present our default results at a queue depth of 3, as well as more stressful results at a queue depth of 32. The latter is necessary to really stress a four-way RAID 0 of SF-1200s, but it is also quite unrealistic for a desktop (it is more of a workstation/server workload at this point).

We also use Iometer's standard pseudo random data for each request as well as fully random data to show the min and max performance for SandForce based drives. The type of performance you'll see really depends on the nature of the data you're writing.

At best a single RevoDrive x2 (or four SF-1200 drives in RAID-0) can achieve over 500MB/s of 4KB random reads/writes. At worst? 286MB/s of random writes.
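For readers who think in IOPS rather than MB/s, the figures above can be converted with simple arithmetic. The sketch below assumes 1MB = 1,000,000 bytes and a 4,096-byte transfer size; the function name `throughput_to_iops` is made up for this example, and a binary megabyte would shift the results by about 5%.

```python
def throughput_to_iops(mb_per_s, block_bytes=4096, bytes_per_mb=1_000_000):
    """Convert a throughput figure in MB/s to I/O operations per second.

    Assumes decimal megabytes unless `bytes_per_mb` is overridden.
    """
    return mb_per_s * bytes_per_mb / block_bytes

for label, mb_per_s in [("best case", 500), ("worst case random write", 286)]:
    print(f"{label}: {mb_per_s} MB/s ~ {throughput_to_iops(mb_per_s):,.0f} IOPS")
```

Under those assumptions, 500MB/s of 4KB transfers works out to roughly 122,000 IOPS, and the 286MB/s worst case to roughly 70,000 IOPS.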

Sequential Read/Write Performance

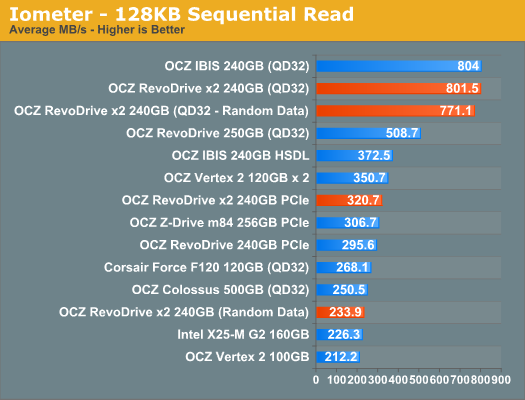

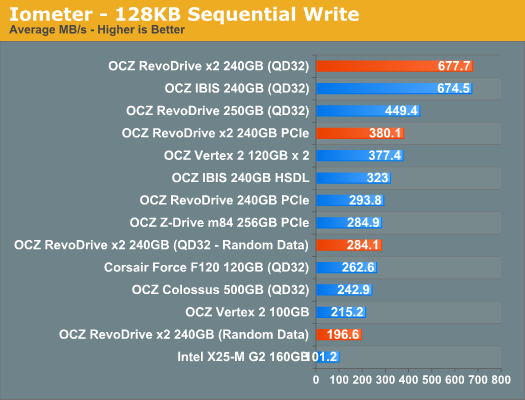

Our standard sequential tests write 128KB blocks to the drive, with a queue depth of 1, for 60 seconds straight. As was the case above, we present default results as well as results with a 32-deep I/O queue. Pseudo random as well as fully random data is used to give us an idea of min and max performance.

The RevoDrive x2, like the IBIS, can read at up to 800MB/s. Write speed is an impressive 677MB/s. That's peak performance - worst case performance is down at 196MB/s for light workloads and 280MB/s for heavy ones. With SandForce so much of your performance is dependent on the type of data you're moving around. Highly compressible data won't find a better drive to live on, but data that's already in reduced form won't move around anywhere near as quickly.
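The gap between compressible and already-reduced data is easy to see with a general-purpose compressor. The sketch below uses Python's `zlib` purely as a stand-in; it is not SandForce's algorithm, and the helper name `compressed_fraction` and the sample payloads are assumptions chosen for illustration.

```python
import os
import zlib

def compressed_fraction(data: bytes) -> float:
    """Return compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data)) / len(data)

BLOCK = 128 * 1024  # matches the 128KB transfer size of the sequential test

repetitive = b"AnandTech " * (BLOCK // 10)  # highly compressible payload
random_data = os.urandom(BLOCK)             # effectively incompressible payload

print(f"repetitive data: {compressed_fraction(repetitive):.3f} of original size")
print(f"random data:     {compressed_fraction(random_data):.3f} of original size")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random buffer does not shrink at all. A controller that compresses on the fly has far less to write to flash in the first case, which is consistent with the spread between the peak and worst-case numbers above.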

46 Comments

jav6454 - Thursday, November 4, 2010 - link

800MB/s Sequential and almost 600MB/s random?! I now wonder where my piggy bank is? In all seriousness, OCZ has got a winner here, the only thing I do regret is having few PCIe ports... hopefully the HD6900s series will help open up a port.

DanNeely - Saturday, November 6, 2010 - link

AFAIK A 32 deep IO Queue isn't something you're going to see outside a heavily loaded server. The 150/200 on random and 320/380 on sequential are more in line with what a typical end user will get.

Out of Box Experience - Wednesday, November 17, 2010 - link

I think these Indilinx controllers might be faster than Sandforce for REAL workloads like COPY & PASTE under XP! I wish Anand would directly compare copy/paste speeds of both SATA and PCIe SSD's under XP as that IS the Number One Operating System for the foreseeable future!

I think that how an SSD handles non-compressible data or data already on the drive are the most enlightening tests one could do to directly compare SSD Controllers under common workloads

Now, if OCZ could just make their stuff plug and play under XP without all the endless tweaks, or OS upgrades, we'd have a winner until Intel starts making PCIe SSD's

Chant in unison....

Plug & Play Plug & Play Plug & Play Plug & Play Plug & Play

mr woodstock - Friday, November 19, 2010 - link

XP is still number one .... for now. Windows 7 is selling very fast, and people are upgrading constantly.

Within 3-4 years XP will be all but a memory.

boe - Thursday, November 18, 2010 - link

I agree about PCIe ports. I could swing one x4 or faster PCIe port however since I need about 2TB of storage I'll be needing a lot more slots!

mianmian - Thursday, November 4, 2010 - link

Using such a small connector to mount the daughter card seems not that reliable. It looks like it's going to fall apart someday.

puplan - Thursday, November 4, 2010 - link

There is nothing wrong with the connector. The daughter board is held by 4 screws.

GeorgeH - Thursday, November 4, 2010 - link

I don't see any real reason to doubt that 4x Vertex 2s would perform identically, especially with a discrete RAID card of reasonable quality, but has it actually been verified on the integrated RAID that comes with a "performance mainstream motherboard", both AMD and Intel? It wouldn't be incredibly surprising to me to see some previously unknown performance reducing bugs crop up when you start pushing the kinds of numbers we're seeing here with integrated RAID solutions.

Minion4Hire - Thursday, November 4, 2010 - link

Yea, I thought that the max combined bandwidth from ICH10 was something like 660MB/s...?

disappointed1 - Thursday, November 4, 2010 - link

"ICH10 implements the 10Gbit/s bidirectional DMI interface to the "northbridge" device."

That's 1.25GB/s bidirectional