The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Feeding the Beast

When frequency was all that mattered for CPUs, the main problems were efficiency, thermal performance, and yields: the higher the frequency was pushed, the more voltage was needed, the further outside its peak efficiency window the CPU operated, and the more power it consumed per unit of work. For the CPU at the top of the product stack, the performance halo part, this didn’t particularly matter, at least until the chip hit 90°C+ on a regular basis.
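Why higher clocks cost more energy per unit of work can be sketched with the textbook first-order model of CMOS switching power, P ≈ α·C·V²·f. Energy per clock cycle is then α·C·V², so if holding a higher frequency requires a higher voltage, every cycle (and, assuming the same work per cycle, every unit of work) costs more. The voltage/frequency points, activity factor, and capacitance below are hypothetical placeholders rather than measurements of any real chip, and leakage power, which also rises with voltage and temperature, is ignored.

```python
def dynamic_power(alpha: float, cap_farads: float, volts: float, freq_hz: float) -> float:
    """First-order CMOS switching power in watts: P = alpha * C * V^2 * f (leakage ignored)."""
    return alpha * cap_farads * volts ** 2 * freq_hz


def energy_per_cycle(alpha: float, cap_farads: float, volts: float) -> float:
    """Switching energy per clock in joules: P / f = alpha * C * V^2, independent of f."""
    return alpha * cap_farads * volts ** 2


# Hypothetical values, for illustration only (not measurements of any real CPU).
ALPHA, CAP = 0.2, 1e-9
base = {"volts": 1.00, "freq_hz": 3.4e9}
pushed = {"volts": 1.35, "freq_hz": 4.0e9}  # the higher clock needs more voltage

speedup = pushed["freq_hz"] / base["freq_hz"]
power_ratio = dynamic_power(ALPHA, CAP, **pushed) / dynamic_power(ALPHA, CAP, **base)
energy_ratio = (energy_per_cycle(ALPHA, CAP, pushed["volts"])
                / energy_per_cycle(ALPHA, CAP, base["volts"]))

print(f"{speedup:.2f}x clock costs {power_ratio:.2f}x power "
      f"and {energy_ratio:.2f}x energy per cycle")
```

With these made-up numbers, a clock roughly 18% higher costs about 2.1x the switching power and about 1.8x the energy per cycle, which is the efficiency cliff described above.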

Now, with the Core Wars, the challenges are different. When there was only one core, making data available to that core through the caches and DRAM was a relatively easy task. With 6, 8, 10, 12 and 16 cores, a major bottleneck becomes making sure each core has enough data to work continuously, rather than sitting idle while it waits for data to arrive. This is not an easy task: each core now needs a fast way of communicating with every other core, and with main memory. Within the industry, this is known as feeding the beast.

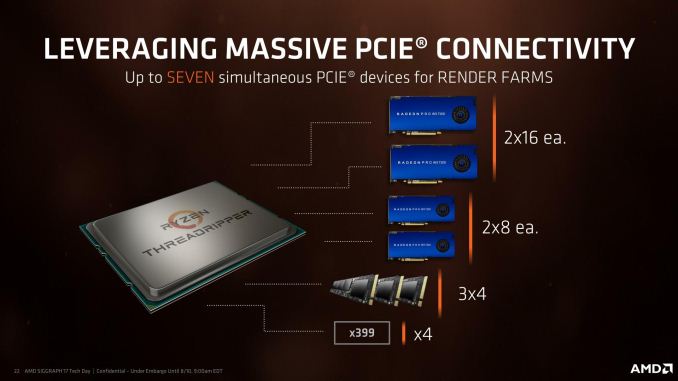

Top Trumps: 60 PCIe Lanes vs 44 PCIe Lanes

After playing the underdog for so long, AMD has been pushing the specifications of its new processors as one of their big selling points. Whereas Ryzen 7 only had 16 PCIe lanes, competing in part against Intel CPUs with 28 or 44 PCIe lanes, Threadripper has access to 60 lanes for PCIe add-in cards. In some places this may be quoted as 64 lanes; however, four of those lanes are reserved for the X399 chipset. At $799 and $999, Threadripper competes against Intel’s Core i9-7900X, which costs $999 and has 44 PCIe lanes.

The goal of having so many PCIe lanes is to serve the market these processors are addressing: high-performance prosumers. These are users who run multiple GPUs and multiple PCIe storage devices, and who need high-end networking, high-end storage, and as many other features as can fit through PCIe. As a result, we are likely to see motherboards earmark 32 or 48 of these lanes for PCIe slots (x16/x16, x8/x8/x8/x8, x16/x16/x16, x16/x8/x16/x8), followed by two or three PCIe 3.0 x4 links for U.2 or M.2 storage, and then faster Ethernet (5 Gbit, 10 Gbit). AMD allows each of the PCIe root complexes on the CPU, which are x16 each, to be bifurcated down to x1 as needed, for a maximum of 7 devices. The chipset, connected through the 4 reserved lanes, will in turn provide several PCIe 3.0 and PCIe 2.0 lanes for SATA or USB controllers.

Intel’s strategy is different, allowing the 44 lanes to be split as x16/x16/x8 (40 lanes), x16/x8/x8/x8 (40 lanes), or x16/x16 to x8/x8/x8/x8 (32 lanes), with 4-12 lanes left over for PCIe storage, faster Ethernet controllers, or Thunderbolt 3. The X299 chipset for Skylake-X then provides an additional 24 PCIe lanes for SATA controllers, gigabit Ethernet controllers, and USB controllers.
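The lane arithmetic for both platforms can be checked with a few lines of Python. This is a minimal sketch: `leftover_lanes` and `describe` are hypothetical helpers, the lane totals are the figures quoted in this review (60 usable CPU lanes on Threadripper after the 4 chipset lanes, 44 on the Core i9-7900X), and the slot layouts are examples rather than any particular motherboard’s wiring. Chipset lanes are not counted.

```python
def leftover_lanes(cpu_lanes: int, slot_widths: list[int]) -> int:
    """Return the CPU lanes left after wiring the given slot widths (x16 -> 16, etc.).

    Raises ValueError if the layout needs more lanes than the CPU provides.
    """
    used = sum(slot_widths)
    if used > cpu_lanes:
        raise ValueError(f"layout needs {used} lanes, CPU provides {cpu_lanes}")
    return cpu_lanes - used


THREADRIPPER_LANES = 64 - 4  # 64 in total, 4 reserved for the X399 chipset link
SKYLAKE_X_LANES = 44         # Core i9-7900X


def describe(name: str, cpu_lanes: int, layout: list[int]) -> str:
    """Summarise a slot layout and how many x4 devices the remaining lanes could feed."""
    left = leftover_lanes(cpu_lanes, layout)
    slots = "/".join(f"x{w}" for w in layout)
    return f"{name} {slots}: {left} lanes left ({left // 4} x4 devices)"


print(describe("Threadripper", THREADRIPPER_LANES, [16, 16, 16]))
print(describe("Threadripper", THREADRIPPER_LANES, [16, 8, 16, 8]))
print(describe("Skylake-X", SKYLAKE_X_LANES, [16, 16, 8]))
print(describe("Skylake-X", SKYLAKE_X_LANES, [8, 8, 8, 8]))
```

Both 48-lane Threadripper layouts leave 12 lanes, enough for three x4 U.2 or M.2 drives, while a 40-lane Skylake-X layout leaves only 4.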

Top Trumps: DRAM and ECC

One of Intel’s common product segmentations is that if a customer wants a high core count processor with ECC memory, they have to buy a Xeon. Xeons typically support a fixed memory speed that depends on how many DIMMs are installed per channel (DDR4-2666 with 1 DIMM per channel, DDR4-2400 with 2 DIMMs per channel), as well as ECC and RDIMM technologies. However, the consumer HEDT platforms for Broadwell-E and Skylake-X do not support these, and use non-ECC UDIMMs only.

AMD is supporting ECC on its Threadripper processors, giving customers sixteen cores with ECC. However, these have to be UDIMMs only, although the platform does support DRAM overclocking, which also boosts the speed of the internal Infinity Fabric. AMD has officially stated that the Threadripper CPUs can support up to 1 TB of DRAM, although on close inspection this requires 128GB UDIMMs, whereas UDIMMs currently max out at 16GB. Intel currently lists a 128GB limit for Skylake-X, based on 16GB UDIMMs.
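The 1 TB and 128 GB figures follow from simple capacity arithmetic. The sketch below assumes eight DIMM slots (four channels with two DIMMs per channel, the 2DPC configuration both platforms support); `max_capacity_gb` is a hypothetical helper.

```python
CHANNELS = 4           # quad-channel memory on both platforms
DIMMS_PER_CHANNEL = 2  # 2DPC, i.e. eight DIMM slots in total


def max_capacity_gb(dimm_size_gb: int,
                    channels: int = CHANNELS,
                    dimms_per_channel: int = DIMMS_PER_CHANNEL) -> int:
    """Total installable memory in GB when every slot holds the same DIMM size."""
    return channels * dimms_per_channel * dimm_size_gb


print(max_capacity_gb(128))  # -> 1024 GB (1 TB): AMD's stated ceiling, needs 128GB UDIMMs
print(max_capacity_gb(16))   # -> 128 GB with 16GB UDIMMs, the largest currently available
```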

Both platforms run quad-channel memory, at DDR4-2666 with one DIMM per channel (1DPC) and DDR4-2400 with two DIMMs per channel (2DPC).
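Those speeds translate into a theoretical peak bandwidth, and dividing it by the core count shows why feeding the beast gets harder as cores are added. This is a back-of-the-envelope sketch: it assumes a 64-bit (8-byte) data bus per channel, uses the nominal 2666 and 2400 MT/s ratings, and ignores real-world efficiency losses; the 16- and 10-core counts correspond to the Threadripper 1950X and the Core i9-7900X.

```python
BUS_BYTES = 8  # a DDR4 channel moves 64 bits (8 bytes) per transfer, ECC bits excluded
CHANNELS = 4   # quad-channel on both platforms


def rated_mts(dimms_per_channel: int) -> int:
    """Rated transfer rate (MT/s) quoted above: DDR4-2666 at 1DPC, DDR4-2400 at 2DPC."""
    if dimms_per_channel == 1:
        return 2666
    if dimms_per_channel == 2:
        return 2400
    raise ValueError("only 1DPC and 2DPC are covered here")


def peak_gb_per_s(mts: int, channels: int = CHANNELS) -> float:
    """Theoretical peak bandwidth in GB/s (10**9 bytes per second)."""
    return channels * BUS_BYTES * mts * 1e6 / 1e9


for dpc in (1, 2):
    bw = peak_gb_per_s(rated_mts(dpc))
    print(f"{dpc}DPC: {bw:.1f} GB/s peak; per core: "
          f"{bw / 16:.2f} GB/s with 16 cores, {bw / 10:.2f} GB/s with 10 cores")
```

That works out to about 85.3 GB/s at DDR4-2666 and 76.8 GB/s at DDR4-2400, shared by every core on the socket.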

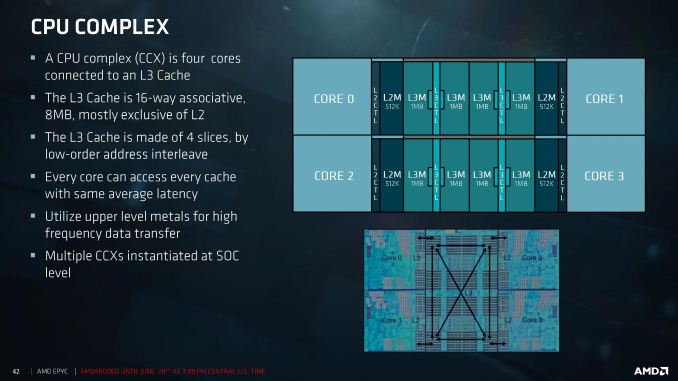

Top Trumps: Cache

Both AMD and Intel use a private L2 cache for each core, backed by a victim L3 cache before main memory. A victim cache is filled only with data evicted from the cache level above it (here, the L2), and it cannot pre-fetch data. However, the sizes of those caches, and how AMD and Intel have the cores interact with them, are different.
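The victim-cache behaviour can be illustrated with a toy model. This is a simplified sketch, not how either vendor’s hardware works in detail: capacities are counted in whole cache lines, both levels are fully associative with LRU replacement, the L3 is modelled as exclusive (a line that hits in L3 moves back up to L2), and coherence and prefetchers are left out. The property it demonstrates is that a fill from DRAM goes straight into L2, and the L3 only ever receives lines evicted from L2.

```python
from collections import OrderedDict


class LRU:
    """Fully associative cache of line addresses with least-recently-used replacement."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.lines = OrderedDict()

    def __contains__(self, addr):
        return addr in self.lines

    def touch(self, addr):
        self.lines.move_to_end(addr)  # mark as most recently used

    def remove(self, addr):
        del self.lines[addr]

    def insert(self, addr):
        """Insert addr as most recently used; return the evicted address, or None."""
        self.lines[addr] = True
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            victim, _ = self.lines.popitem(last=False)  # oldest entry
            return victim
        return None


class TwoLevel:
    """Private L2 backed by a victim L3 that is filled only by L2 evictions."""

    def __init__(self, l2_lines: int, l3_lines: int):
        self.l2, self.l3 = LRU(l2_lines), LRU(l3_lines)

    def access(self, addr) -> str:
        if addr in self.l2:
            self.l2.touch(addr)
            return "L2 hit"
        if addr in self.l3:
            self.l3.remove(addr)  # exclusive model: the line moves back up to L2
            result = "L3 hit"
        else:
            result = "memory"     # DRAM fill goes to L2 only; the L3 never pre-fetches
        evicted = self.l2.insert(addr)
        if evicted is not None:
            self.l3.insert(evicted)  # victim fill: L2 evictions land in the L3
        return result


cache = TwoLevel(l2_lines=2, l3_lines=4)
print([cache.access(addr) for addr in "ABCA"])
```

With an L2 of two lines, accessing A, B and C pushes A out into the L3, so the second access to A is served from the L3 instead of from memory.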

AMD uses 512 KB of L2 cache per core, backed by 8 MB of L3 victim cache per core complex of four cores. A 16-core Threadripper has four core complexes, for a total of 32 MB of L3 cache; however, each core only has direct access to the data in its local L3. Accessing the L3 of a different complex requires additional time and snooping, so latency varies depending on whether the data sits in the local L3 or in another complex’s L3.
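Whether an L3 access stays local depends on which core complex (CCX) holds the data. The sketch below assumes a hypothetical contiguous numbering in which cores 0-3 form CCX 0, cores 4-7 form CCX 1, and so on; real operating systems may enumerate cores (and SMT threads) differently, so `ccx_of` and `l3_access_kind` are illustrative helpers only.

```python
CORES_PER_CCX = 4  # four cores per core complex
L3_PER_CCX_MB = 8  # 8 MB of L3 victim cache per core complex


def ccx_of(core: int) -> int:
    """Hypothetical contiguous numbering: cores 0-3 -> CCX 0, 4-7 -> CCX 1, ..."""
    return core // CORES_PER_CCX


def l3_access_kind(requesting_core: int, owning_core: int) -> str:
    """'local' if the data sits in the requester's own CCX L3, else 'remote'
    (the remote case costs extra time and snooping)."""
    return "local" if ccx_of(requesting_core) == ccx_of(owning_core) else "remote"


def total_l3_mb(cores: int) -> int:
    """Total L3 across all complexes on the package."""
    return (cores // CORES_PER_CCX) * L3_PER_CCX_MB


print(total_l3_mb(16), "MB of L3 across", 16 // CORES_PER_CCX, "complexes")
print(l3_access_kind(0, 3), l3_access_kind(0, 4))
```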

Intel’s Skylake-X uses 1 MB of L2 cache per core, leading to a higher L2 hit rate, and 1.375 MB of L3 victim cache per core. This L3 cache has associated tags, and as with AMD there is still time and latency involved in snooping other caches; however, the mesh topology used to connect the cores makes that latency somewhat more uniform. This is different from the Broadwell-E cache structure, which had 256 KB of L2 and 2.5 MB of L3 per core, both inclusive caches.
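Per-core figures hide the totals, so it helps to multiply them out. A minimal sketch using only the sizes quoted in this section; for Threadripper the 8 MB per four-core complex is expressed as 2 MB per core purely for comparison, and the Broadwell-E row assumes a 10-core part.

```python
def cache_totals(cores: int, l2_kb_per_core: int, l3_mb_per_core: float) -> tuple[float, float]:
    """Return (total L2 in MB, total L3 in MB) for a chip with uniform per-core caches."""
    return cores * l2_kb_per_core / 1024, cores * l3_mb_per_core


CHIPS = {
    "Threadripper 1950X (16 cores)": (16, 512, 8 / 4),  # 8 MB L3 per 4-core complex
    "Core i9-7900X (10 cores)": (10, 1024, 1.375),
    "Broadwell-E (10 cores)": (10, 256, 2.5),
}

for name, spec in CHIPS.items():
    l2_mb, l3_mb = cache_totals(*spec)
    print(f"{name}: {l2_mb:g} MB L2, {l3_mb:g} MB L3")
```

Skylake-X shifts capacity from L3 to L2: the 7900X carries more total L2 than the 16-core Threadripper, but well under half of its L3.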

347 Comments


mapesdhs - Thursday, August 10, 2017

And at least the non-gaming tests were presented first.

Chad - Thursday, August 10, 2017

I think a simple comment before the gaming test suite, like "We show gaming tests for (the reasons you list above), but if you are looking at buying Threadripper for gaming alone, you are really missing the point of it," would go a long way to allaying concerns. You could cap it with what it would do well: Threadripper can really excel at running multiple VMs, servers, compiling, encoding, etc., and at the same time running a game while waiting. Or some such.

That's what appears to be missing to me: instead of just dumping tons of gaming results, putting it all into the context of the processor's strengths. Just my 2 coppers.

mapesdhs - Friday, August 11, 2017

A comment like that may have helped prevent criticism, but if included it would also add weight to the suggestion that the review should have included a greater proportion of threaded workloads.

pm9819 - Friday, August 18, 2017

No one spending $1000 on a CPU is going to be swayed by its gaming performance. That comment isn't needed.

Notmyusualid - Saturday, August 12, 2017

@Ian Cutress
I am here for the gaming results, so I thank you for running the benchies.

I think the problem is that fan-bois expected TR to do better than it did in those tests, and well, it didn't.

I, for one, think you are reporting honestly, for what it's worth.

Ars Technica, on the other hand...

Mugur - Sunday, August 13, 2017

If you're here for the games, maybe the 7700K review is waiting for you...

Notmyusualid - Sunday, August 13, 2017

4 x 1070s in my main rig. A quad core wouldn't suffice.

Chad - Sunday, August 13, 2017

wow, if you are here for only gaming results w/ the Threadripper, you are completely missing the point of it. just, wow

GreenMeters - Thursday, August 10, 2017

If it's priced in the existing traditional desktop segment, it's a traditional desktop part. If it's priced in the existing HEDT segment, it's an HEDT part.

mapesdhs - Thursday, August 10, 2017

That suggests that somehow there are such things as "traditional" price points, whereas in reality Intel (without competition) has been moving these all over the place (mostly up) for many years. How can such tech have traditional anything when its base nature is evolving so fast? Look at what Intel has done to its own pricing as a result of Ryzen, and now TR, implementing a major price drop at the 10c level compared to BW-E (Intel's Ark shows the 7900X being 42% cheaper after a gap of just one year).

When disruptive competition occurs, there's no such thing as traditional. To me, traditional is another way of disguising tech stagnation.