AMD Confirms That SmartShift Tech Only Shipping in One Laptop For 2020

by Ryan Smith on June 5, 2020 5:00 PM EST

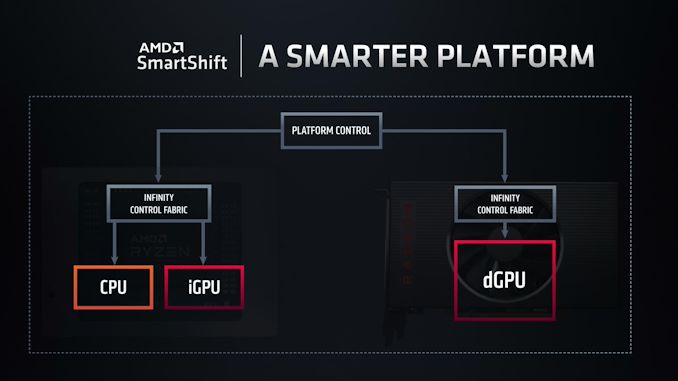

Launched earlier this year, AMD’s Ryzen 4000 “Renoir” APUs brought several new features and technologies to the table for AMD. Along with numerous changes to improve the APU’s power efficiency and reduce overall idle power usage, AMD also added an interesting TDP management feature that they call SmartShift. Designed for use in systems containing both an AMD APU and an AMD discrete GPU, SmartShift allows for the TDP budgets of the two processors to be shared and dynamically reallocated, depending on the needs of the workload.
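AMD has not published SmartShift's actual control algorithm. The following is therefore only a minimal Python sketch of the general idea of a single power budget that is shared by two processors and reallocated by demand. The proportional-to-demand policy, the `Processor` type, the `share_budget` function, and every wattage figure are hypothetical illustrations. They are not AMD's implementation and not the G5 15 SE's real limits.

```python
from dataclasses import dataclass


@dataclass
class Processor:
    # Hypothetical model of one processor sharing the platform power budget.
    name: str
    min_watts: float  # hypothetical floor the processor always keeps
    max_watts: float  # hypothetical ceiling it can never exceed
    demand: float     # 0.0-1.0 estimate of how hard the workload pushes it


def share_budget(total_watts: float, cpu: Processor, gpu: Processor) -> dict[str, float]:
    """Split one shared power budget between two processors (illustrative policy).

    Each processor first receives its floor. The remaining watts are divided
    in proportion to relative demand, then each allocation is clamped to its
    ceiling, and any clamped excess is handed to the other processor if it
    still has headroom.
    """
    spare = total_watts - cpu.min_watts - gpu.min_watts
    if spare < 0:
        raise ValueError("budget is smaller than the combined floors")

    alloc = {cpu.name: cpu.min_watts, gpu.name: gpu.min_watts}
    weight = cpu.demand + gpu.demand
    cpu_share = 0.5 if weight == 0 else cpu.demand / weight
    alloc[cpu.name] += spare * cpu_share
    alloc[gpu.name] += spare * (1 - cpu_share)

    # Clamp to each ceiling and shift any overflow to the other processor.
    for a, b in ((cpu, gpu), (gpu, cpu)):
        overflow = alloc[a.name] - a.max_watts
        if overflow > 0:
            alloc[a.name] = a.max_watts
            alloc[b.name] = min(b.max_watts, alloc[b.name] + overflow)
    return alloc


if __name__ == "__main__":
    # Hypothetical CPU-heavy workload on a hypothetical 90 W shared budget.
    apu = Processor("APU", min_watts=10, max_watts=54, demand=0.75)
    dgpu = Processor("dGPU", min_watts=20, max_watts=80, demand=0.25)
    print(share_budget(90, apu, dgpu))
```

With these made-up numbers, the CPU-heavy case pushes the APU to its 54 W ceiling and passes the 1 W overflow to the dGPU, which ends up at 36 W. Swapping the two demand values instead gives the dGPU the larger share. The whole budget is used in both cases, which is the point of sharing it rather than splitting it statically.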

As SmartShift is a platform-level feature that relies upon several aspects of a system, from processor choice to the layout of the cooling system, it is a feature that OEMs have to specifically plan for and build into their designs. This means that even if a laptop uses AMD processors for both its CPU and GPU, that alone doesn't guarantee the laptop has the means to support SmartShift. As a result, only a single laptop has been released so far with SmartShift support: Dell's G5 15 SE gaming laptop.

Now, as it turns out, Dell’s laptop will be the only laptop released this year with SmartShift support.

In a comment posted on Twitter and relating to an interview given to PCWorld’s The Full Nerd podcast, AMD’s Chief Architect of Gaming Solutions (and Dell alumnus) Frank Azor has confirmed that the G5 15 SE is the only laptop set to be released this year with SmartShift support. According to the gaming frontman, the roughly year-long development cycle for laptops, combined with SmartShift’s technical requirements, meant that vendors needed to plan for SmartShift support early on. Dell, in turn, ended up being the first OEM to jump on the technology, making it the first laptop vendor to release a SmartShift-enabled laptop.

It's a brand new technology and to @dell credit they jumped on it first. I explained reasons why during my interview with @pcworld @Gordonung @BradChacos No more SmartShift laptops are coming this year but the team is working hard on having more options ASAP for 2021.

— Frank Azor (@AzorFrank) June 4, 2020

Azor’s comment further goes on to confirm that AMD is working to get more SmartShift-enabled laptops on the market in 2021; there just won’t be any additional laptops this year. This leaves us in an interesting situation where Dell, normally one of AMD's more elusive partners, has what's essentially a de facto exclusive on the tech for 2020.

Source: Twitter

54 Comments


Dragonstongue - Friday, June 5, 2020

It would be nice if they (GPUs in general) would detect the program and adjust power and performance to reduce overall power use; at the moment, that is far from ideal. Imagine how much power would be saved worldwide (as well as money) if a high-performance GPU would downclock from, say, 1800MHz to 500MHz (or less) yet still deliver the full performance expected. Granted, this would only work with older games/apps, for example Diablo 3: there's no way in heck today's mid- to high-end GPU NEEDS to run at full clocks for it, which wastes a bunch of power for nothing (not to mention producing lots of waste heat).

On that note, it would be excellent if we users could easily set the low 2D (basically idle), 3D, and high 3D clocks, so that people who like to tinker could do such things with a few clicks.

That SmartShift sounds like exactly what the PS5 will be doing. Odd that this hasn't been done for years now; things like Optimus are great on paper, but in the real world they have not been exactly great.

Hope it works out for them overall; maybe it will lead to even better things down the line for PC, laptop, mobile, and so forth. Many makers seem to be against fitting a nicely sized battery (and certainly against top-notch cooling, regardless of price), so at least in theory this will save quite a bit of power through "intelligent" allocation of the power budget, keeping performance up while keeping the actual power used as low as possible, as often as possible. To me, that is what it should be.

You know, to save them 4-cell batteries and all that LOL

Phartindust - Friday, June 5, 2020

Have you tried Radeon Chill? You can set a maximum frame rate with it, which reduces power usage as you suggest.

yeeeeman - Saturday, June 6, 2020

That is not the point here. The idea is to maximize frame rates by shifting power to the unit that needs it more. Say a game uses the CPU a lot, but the GPU not so much: because the default power split gives more to the GPU and less to the CPU, you will get bad performance. But this tech can detect that scenario and shift the power balance to the CPU. You get better performance, and the power usage is the same.

yeeeeman - Saturday, June 6, 2020

But the GPU not so much*

deil - Monday, June 8, 2020

The Radeon Chill feature is a great thing for a gaming laptop. I set 30 fps and get 50°C on both, or I can switch to 45 fps and get a comfortable ~60°C. The problem with a laptop is that the 130 FPS my laptop can do in the games I play comes at the hefty price of literally burning fingers. (I have an older Dell G5 setup.)

But I think what Dragonstongue meant is that you can get 60 fps in two ways:

cranking the GPU's horsepower up to the max and then utilizing 30% of it,

or

staying in the second/third tier out of 5 of the boost table (my NVIDIA GPU has a 5-step boost ladder) and utilizing 60-70% of the GPU, while using 70% of the power envelope.

The idea is that staying boosted very high for a long time still wastes power, even when you are idle.

And from what I've seen of my GPU, it jumps only between steps 1/5/1/5/1/5, and usage is like 15W/40W/16W/42W,

with nothing in between.

whatthe123 - Saturday, June 6, 2020

Real-world performance is generally poor for things like this, because what you might consider "acceptable" speed would be considered unacceptable by another user. It's not exactly reasonable to expect a hardware company to tweak boost clock behavior for every single game imaginable at a specific target framerate for every GPU spec. Best case, you have a framerate cap and the software predicts the frequencies required to hit that target, but even then the power savings will be marginal, as hardware utilization still plays by far the biggest role compared to clock speed.

What you're describing isn't what the PS5 is doing either. The PS5 has a limited power envelope (probably 100-150W) and will be shifting the power cap and boost clocks of the CPU and GPU on the fly. They're not planning on significant downclocks to reduce the power envelope. They claim their biggest savings will come from being able to maintain a suspend state at 0.5W.

alufan - Sunday, June 7, 2020

I think most modern GPUs from either side do that if I Play an older game such as UT04 even at max everything the fans on my AMD aircooled GPUs dont even come on as the GPU is not working enough to raise the temp above 48degc my liquid cooled pcs dont even rise more than a deg or so no matter how long it plays and I have both brands doing the same even an aircooled living room pc in an itx case with a Ryzen 3600 and a 5700 stays silent

DillholeMcRib - Monday, June 8, 2020

Do you know what punctuation is?

zodiacfml - Sunday, June 7, 2020

You don't know what you're talking about. To help you a bit: GPUs don't use all their graphics power when it is not required, for example when the system reaches a framerate cap or the vsync limit. I play Diablo 3 in 4K with a Vega 56 on a 60Hz display, and it shows 70-80 watts of usage. In Witcher 3, the GPU uses all the power it can.

alufan - Sunday, June 7, 2020

hmmm, so you just repeated what I said? And I don't know what I'm talking about, numpty.