Kal-El Has Five Cores, Not Four: NVIDIA Reveals the Companion Core

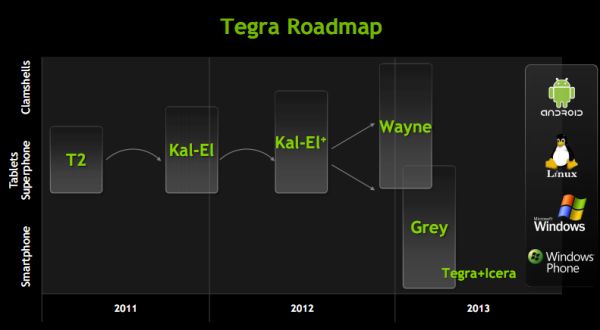

by Anand Lal Shimpi on September 20, 2011 11:46 AM EST

Last week NVIDIA provided an update on its Tegra SoC roadmap. Kal-El, its third generation SoC (likely to launch as Tegra 3) has been delayed by a couple of months. NVIDIA originally expected the first Kal-El tablets would arrive in August, but now it's looking like sometime in Q4. Kal-El's successor, Wayne, has also been pushed back until late 2012/early 2013. In between these two SoCs is a new part dubbed Kal-El+. It's unclear if Kal-El+ will be a process shrink or just higher clocks/larger die on 40nm.

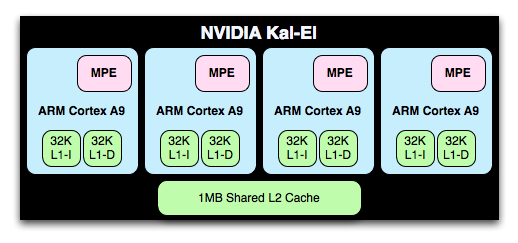

In the smartphone spirit, NVIDIA is letting small tidbits of information out about Kal-El as it gets closer to launch. In February we learned Kal-El would be NVIDIA's first quad-core SoC design, featuring four ARM Cortex A9s (with MPE) behind a 1MB shared L2 cache. Kal-El's GPU would also see a boost to 12 "cores" (up from 8 in Tegra 2), but through architectural improvements would deliver up to 3x the GPU performance of T2. Unfortunately the increase in GPU size and CPU core count doesn't come with a wider memory bus. Kal-El is still stuck with a single 32-bit LPDDR2 memory interface, although max supported data rate increases to 800MHz.
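To put the unchanged bus width in perspective, peak theoretical bandwidth is simply bus width multiplied by transfer rate. The sketch below assumes the 800MHz figure means 800 million transfers per second; the function name is made up for this example, and real sustained bandwidth will be lower than the peak.

```python
def peak_bandwidth_gb_per_s(bus_width_bits: int, transfers_per_second: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfers_per_second / 1e9


# Assumption: "800MHz" is read as 800 million transfers per second.
kal_el_peak = peak_bandwidth_gb_per_s(32, 800e6)
print(f"{kal_el_peak:.1f} GB/s")  # 3.2 GB/s
```

Under that reading, four CPU cores and a larger GPU would share roughly 3.2GB/s of peak bandwidth.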

We also learned that NVIDIA was targeting somewhere around an 80mm^2 die, more than 60% bigger than Tegra 2 but over 30% smaller than the A5 in Apple's iPad 2. NVIDIA told us that although the iPad 2 made it easier for it to sell a big SoC to OEMs, it's still not all that easy to convince manufacturers to spend more on a big SoC.
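Those two percentages imply bounds on the other dies, which is a handy sanity check. The short calculation below uses only the figures quoted above (an 80mm^2 target, "more than 60% bigger", "over 30% smaller"); the variable names are mine.

```python
KAL_EL_DIE_MM2 = 80.0  # approximate target die size quoted above

# "more than 60% bigger than Tegra 2" means 80 > 1.6 * tegra2
tegra2_upper_bound = KAL_EL_DIE_MM2 / 1.6

# "over 30% smaller than the A5" means 80 < 0.7 * a5
a5_lower_bound = KAL_EL_DIE_MM2 / 0.7

print(f"Tegra 2 die is under about {tegra2_upper_bound:.0f} mm^2")
print(f"A5 die is over about {a5_lower_bound:.0f} mm^2")
```

In other words, the claims only hold if Tegra 2 is smaller than about 50mm^2 and the A5 is larger than about 114mm^2.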

Clock speeds are up in the air but NVIDIA is expecting Kal-El to run faster than Tegra 2. Based on competing A9 designs, I'd expect Kal-El to launch somewhere around 1.3 - 1.4GHz.

Now for the new information. Power consumption was a major concern with the move to Kal-El but NVIDIA addressed that by allowing each A9 in the SoC to be power gated when idle. When a core is power gated it is effectively off, burning no dynamic power and leaking very little. Tegra 2 by comparison couldn't power gate individual cores, only the entire CPU island itself.

In lightly threaded situations where you aren't using all of Kal-El's cores, the idle ones should simply shut off (if NVIDIA has done its power management properly of course). Kal-El is built on the same 40nm process as Tegra 2, so when doing the same amount of work the quad-core chip shouldn't consume any more power.
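A toy model makes the benefit of per-core power gating concrete. Every number below is hypothetical and chosen only for illustration; NVIDIA has not published per-core power figures, and the class and function names are my own.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class CoreState:
    active: bool       # executing work during this interval
    power_gated: bool  # supply to the core is cut off


# Hypothetical per-core figures in milliwatts, for illustration only.
DYNAMIC_MW = 400.0
LEAKAGE_MW = 60.0
GATED_LEAKAGE_MW = 2.0


def core_power_mw(core: CoreState) -> float:
    if core.power_gated:
        return GATED_LEAKAGE_MW  # no switching, very little leakage
    dynamic = DYNAMIC_MW if core.active else 0.0
    return dynamic + LEAKAGE_MW  # an idle but powered core still leaks


def island_power_mw(cores: Sequence[CoreState]) -> float:
    return sum(core_power_mw(core) for core in cores)


# One busy core and three idle ones, with and without per-core gating.
gated = [CoreState(True, False)] + [CoreState(False, True)] * 3
ungated = [CoreState(True, False)] + [CoreState(False, False)] * 3
print(island_power_mw(gated), island_power_mw(ungated))
```

With these made-up values the gated configuration draws 466mW against 640mW for the ungated one; the entire difference is leakage from idle cores.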

Power gating idle cores frees up power and thermal headroom, which allows Kal-El to raise the frequency of the remaining active cores, resulting in turbo boost-like operation (e.g. four cores active at 1.2GHz or two cores at 1.5GHz; these are hypothetical numbers of course). Again, NVIDIA isn't talking about final clocks for Kal-El or dynamic frequency ranges.
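A turbo-style scheme like this can be pictured as a lookup from active core count to a frequency ceiling. The table below reuses the hypothetical 1.2GHz and 1.5GHz figures from the example; the entries for one and three active cores are additional assumptions, and none of these are announced clocks.

```python
# Hypothetical frequency ceilings keyed by number of active main cores.
# Only the 2-core and 4-core entries come from the example in the text;
# the 1-core and 3-core entries are assumptions made for this sketch.
MAX_FREQ_GHZ_BY_ACTIVE_CORES = {1: 1.5, 2: 1.5, 3: 1.2, 4: 1.2}


def max_frequency_ghz(active_cores: int) -> float:
    """Return the illustrative frequency ceiling for a given core count."""
    if active_cores not in MAX_FREQ_GHZ_BY_ACTIVE_CORES:
        raise ValueError("expected between 1 and 4 active main cores")
    return MAX_FREQ_GHZ_BY_ACTIVE_CORES[active_cores]


for cores in range(1, 5):
    print(cores, "active core(s):", max_frequency_ghz(cores), "GHz")
```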

Five Cores, Not Four

Courtesy NVIDIA

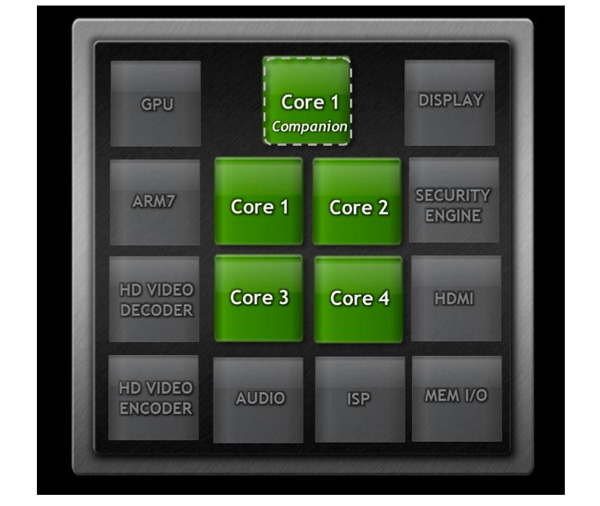

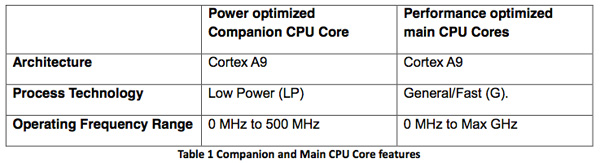

Finally we get to the big news. There are actually five ARM Cortex A9s with MPE on a single Kal-El die: four built using TSMC's 40nm general purpose (G) process and one on 40nm low power (LP). If you remember back to our Tegra 2 review you'll know that T2 was built using a similar combination of transistors: G for the CPU cores and LP for the GPU and everything else. LP transistors have very low leakage but can't run at super high frequencies; G transistors, on the other hand, are leaky but can switch very fast. Update: To clarify, TSMC offers a 40nm LPG process that allows for an island of G transistors in a sea of LP transistors. This is what NVIDIA appears to be using in Kal-El, and what NV used in Tegra 2 prior.

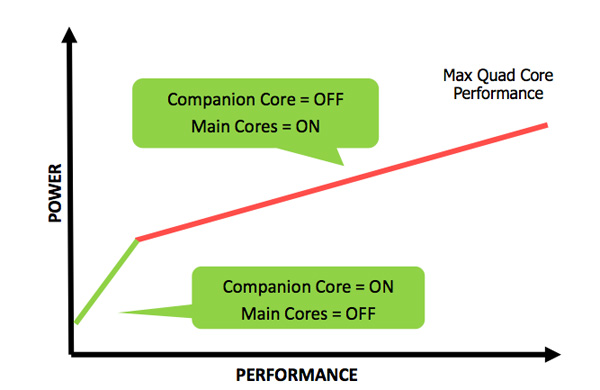

The five A9s can't all be active at once; you either get 1 - 4 of the GP cores or the lone LP core. The GP cores and the LP core are on separate power planes.

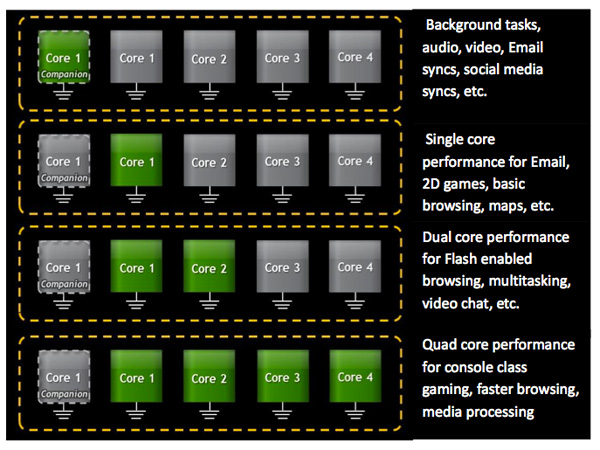

NVIDIA tells us that the sole point of the LP Cortex A9 is to provide lower power operation when your device is in active standby (e.g. screen is off but the device is actively downloading new emails, tweets, FB updates, etc. as they come in). The LP core runs at a lower voltage than the GP cores and can only clock at up to 500MHz. As long as the performance state requested by the OS/apps isn't higher than a predetermined threshold, the LP core will service those needs. Even with your display on, it's possible for the LP core to be active, so long as the performance state requested by the OS/apps isn't too high.

Once the requested performance state crosses that threshold, however, the LP core is power gated and state is moved over to the array of GP cores. As I mentioned earlier, both CPU islands can't be active at the same time - you only get one or the other. All five cores share the same 1MB L2 cache so memory coherency shouldn't be difficult to work out.

Android isn't aware of the fifth core, it only sees up to 4 at any given time. NVIDIA accomplishes this by hotplugging the cores into the scheduler. The core OS doesn't have to be modified or aware of NVIDIA's 4+1 arrangement (which it calls vSMP). NVIDIA's CPU governor code defines the specific conditions that trigger activating cores. For example, under a certain level of CPU demand the scheduler will be told there's only a single core available (the companion core). As the workload increases, the governor will sleep the companion core and enable the first GP core. If the workload continues to increase, subsequent cores will be made available to the scheduler. Similarly if the workload decreases, the cores will be removed from the scheduling pool one by one.
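The governor behavior described above can be sketched as a small state machine. This is not NVIDIA's governor code: the threshold, the load units (1.0 means one main core fully busy) and all of the names are assumptions made for illustration.

```python
from typing import NamedTuple


class CpuConfig(NamedTuple):
    companion_online: bool
    main_cores_online: int


COMPANION_MAX_LOAD = 0.3  # hypothetical threshold, not an NVIDIA figure
MAX_MAIN_CORES = 4


def next_config(current: CpuConfig, load: float) -> CpuConfig:
    """One governor step; load is demand in units of one busy main core."""
    if current.companion_online:
        if load > COMPANION_MAX_LOAD:
            return CpuConfig(False, 1)  # sleep companion, enable first main core
        return current
    n = current.main_cores_online
    if load > n and n < MAX_MAIN_CORES:
        return CpuConfig(False, n + 1)  # make one more core available
    if n > 1 and load <= n - 1:
        return CpuConfig(False, n - 1)  # remove one core from the pool
    if n == 1 and load <= COMPANION_MAX_LOAD:
        return CpuConfig(True, 0)       # fall back to the companion core
    return current


def visible_cores(config: CpuConfig) -> int:
    """Cores the OS scheduler sees; the companion core appears as one core."""
    return 1 if config.companion_online else config.main_cores_online


config = CpuConfig(companion_online=True, main_cores_online=0)
for load in [0.1, 0.8, 2.5, 2.5, 3.6, 1.2, 0.2, 0.2, 0.2]:
    config = next_config(config, load)
    print(load, config, visible_cores(config))
```

In this sketch the scheduler never sees more than four cores, and cores enter and leave the pool one step at a time, which matches the behavior NVIDIA describes.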

NVIDIA can switch between the companion and main cores in under 2ms. There's also logic to prevent wasting time flip flopping between the LP and GP cores for workloads that reside on the trigger threshold.
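NVIDIA hasn't detailed how that anti-flip-flop logic works. One common way to get this effect is hysteresis: use a higher threshold for switching up than for switching down. The sketch below illustrates that general technique with made-up thresholds; it should not be read as NVIDIA's implementation.

```python
def choose_cluster(current: str, load: float, up: float, down: float) -> str:
    """Return "companion" or "main" given separate up and down thresholds."""
    if current == "companion" and load > up:
        return "main"
    if current == "main" and load < down:
        return "companion"
    return current


def count_switches(loads, up: float, down: float, start: str = "companion") -> int:
    """Count cluster transitions over a sequence of load samples."""
    cluster, switches = start, 0
    for load in loads:
        new_cluster = choose_cluster(cluster, load, up, down)
        if new_cluster != cluster:
            switches += 1
        cluster = new_cluster
    return switches


# A workload hovering right around a hypothetical trigger point of 0.30.
jittery = [0.29, 0.31, 0.29, 0.31, 0.29, 0.31]
print("single threshold:", count_switches(jittery, up=0.30, down=0.30))
print("with hysteresis: ", count_switches(jittery, up=0.35, down=0.25))
```

With a single threshold this workload triggers five switches, each costing up to the quoted 2ms; with the wider band it triggers none.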

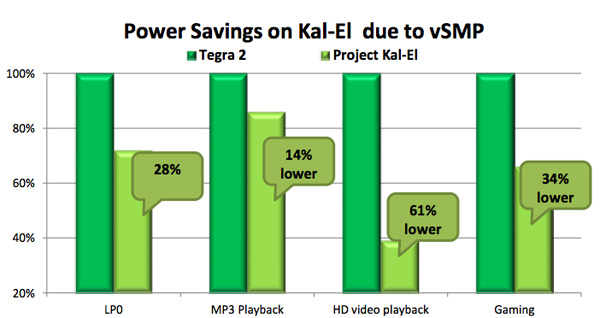

NVIDIA expects pretty much all active work to be done on the quad-core GP array; it's really only when your phone is idle and dealing with background tasks that the LP core will be in use. As a result of this process dichotomy NVIDIA is claiming significant power improvements over Tegra 2, despite an increase in transistor count:

Courtesy NVIDIA

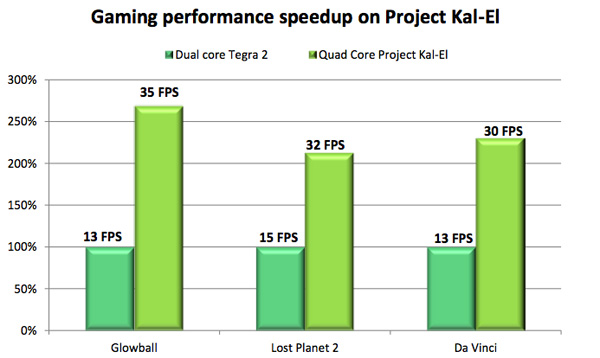

NVIDIA isn't talking about GPU performance today but it did reveal a few numbers in a new white paper:

We don't have access to the benchmarks here but everything was run on Android 3.2 at 1366 x 768 with identical game settings. The performance gains are what NVIDIA has been promising, in the 2 - 3x range. Obviously we didn't run any of these tests ourselves so approach with caution.

Final Words

What sold NVIDIA's Tegra 2 wasn't necessarily its architecture, but timing and the fact that it was Google's launch platform for Honeycomb. If the rumors are correct, NVIDIA isn't the launch partner for Ice Cream Sandwich, which means Kal-El has to stand on its own as a convincing platform.

The vSMP/companion core architecture is a unique solution to the problem of increasing SoC performance while improving battery life. This is a step towards heterogeneous multiprocessing, despite the homogeneous implementation in Kal-El. It remains to be seen how tangible the companion core's impact on real world battery life will be.

74 Comments

zorxd - Tuesday, September 20, 2011 - link

It will barely catch-up with the Mali 400, if at all. I expected more from Nvidia.

Draiko - Tuesday, September 20, 2011 - link

That has yet to be seen buuut let me ask you something...Can you even use the extra performance of the Mali on anything right now? That SGS2 you probably have is already 5 months old and nobody has done anything with it except root, throw Chainfire3D on it, and play Tegra games that nVidia helped bring to Android.

You have some more performance in benchmarks and extra video codec support. It's a newer SOC, that's expected but nobody seems to be doing anything with the added performance and Samsung isn't encouraging anyone to start. Sammy is just appeasing the ROM dev crowd whose main focus is messing around with Android, not building new apps and games.

If that doesn't change, SGS2 users are just going to be stuck jumping through a ton of hoops (rooting, chainfire3D, etc) to get the same software experience as the Tegra 2.

Thanks but no thanks.

metafor - Tuesday, September 20, 2011 - link

So essentially, you're arguing that Samsung needs to purposely fragment the Android market and use shady-ass "exclusivity" deals to gain consumers?

Draiko - Tuesday, September 20, 2011 - link

Google, Apple, and Microsoft "Fragmented" the mobile device into separate platforms and app markets and shady-ass exclusivity deals to gain consumers. The same thing goes on in the gaming market as well. It happens everywhere. What's your point?

nVidia ponied up some cash to bring some awesome games to Android. I don't see the problem with them "fragmenting" it a little to fund showcasing these capabilities of the Android Platform and their hardware. They're giving devs incentive to produce more advanced software and raising the bar.

I wish more companies would do that instead of putting out some kind of benchmark-rocking hardware that ends up doing nothing. Remember the Samsung hummingbird? They really put that to good use, didn't they?

metafor - Tuesday, September 20, 2011 - link

My point is that it's not a good thing. Just because "others do it too" doesn't make it a good thing.

The fact that you can defend this shows just how much of a shill you are. Seriously, you're trying to argue that it's a good thing that each manufacturer comes up with exclusive game titles so that we'll have 5+ different markets and games available only to certain devices.

Having a common platform is what keeps hardware companies in constant competition with each other. Having "exclusivity" deals essentially boils down to "our hardware is not as good so we'll buy our way into consumer's pockets with some exclusive games".

Draiko - Wednesday, September 21, 2011 - link

First off, I'm not a shill.

Second, having a common platform is nice but keeping that common platform and then going above and beyond it is even better. Not pushing the envelope fast enough makes the entire platform stand still.

Third, I'd rather a company offer their excellent hardware and "buy" their way into my pocket with specialized apps and games than hollow benchmarks and wasted potential.

Again, the Samsung Hummingbird... what has the common platform done with it? What made it worthwhile? Nothing. The SGX540 just collected dust and now the people that actually cared about having an SGX540 have moved onto more powerful SOCs.

SteelCity1981 - Wednesday, September 21, 2011 - link

what a troll.

Lucian Armasu - Wednesday, September 21, 2011 - link

You should eliminate comments like these, Anand. And also the ones that degrade the conversations. I don't want Anandtech to become another Engadget, comment wise.

metafor - Thursday, September 22, 2011 - link

Nothing? It played every game that was available at the time at faster framerates (and in many cases, was the only one to do it at acceptable frame rates).

And I have a bit of a hard time taking you seriously when not more than a few months ago, you were the one on this very board hawking about how much faster Tegra 2 was *on benchmarks*.

Now your new schtick is "hey, benchmarks don't matter, Tegra has software exclusives!"

Never mind that as Chainfire proved, those exclusives have nothing to do with the technical capabilities of other chips like Exynos (it plays them just fine) but having to do with nVidia exercising Microsoft-like "deals".

Tell me, what are your thoughts on LG's new "exclusives" with game titles? So that in order to get them, you have to get an LG phone? But hey, they're "incentivizing" devs, right?

ph00ny - Thursday, September 22, 2011 - link

Have you seen the difference between standard openGL code vs nvidia "optimized" code? It's like using 2 lines of code vs 1 line. End result is pretty much identical between two platforms. What do you think chainfire is doing with his app running as an intermediate driver? Yup. He's simply translating nvidia "optimized" codes back into OpenGL standard. What nvidia is doing here is simply creating another level of fragmentation to the android environment.

As for google, apple, and microsoft comment, they're the creators of individual OS. Are you saying nvidia should make their own OS to "fragment".